…egistration to test conditionally

### What changes were proposed in this pull request?

This PR proposes to move the doctests in `registerJavaUDAF` and `registerJavaFunction` to proper unit tests that run conditionally when the test classes are present.

Both tests depend on test classes on the JVM side, `test.org.apache.spark.sql.JavaStringLength` and `test.org.apache.spark.sql.MyDoubleAvg`. If you run the tests against a plain `sbt package` build, they fail as below:

```
**********************************************************************
File "/.../spark/python/pyspark/sql/udf.py", line 366, in pyspark.sql.udf.UDFRegistration.registerJavaFunction
Failed example:
    spark.udf.registerJavaFunction(
        "javaStringLength", "test.org.apache.spark.sql.JavaStringLength", IntegerType())
Exception raised:
    Traceback (most recent call last):
    ...
    test.org.apache.spark.sql.JavaStringLength, please make sure it is on the classpath;
...
6 of 7 in pyspark.sql.udf.UDFRegistration.registerJavaFunction
2 of 4 in pyspark.sql.udf.UDFRegistration.registerJavaUDAF
***Test Failed*** 8 failures.
```
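The conditional approach described above can be sketched with plain `unittest`. This is only an illustration, not the code from the PR: the helper `jvm_test_classes_present`, the `sql/core/target/*/test-classes` layout, and the test class name are assumptions made for the example.

```python
import glob
import os
import unittest


def jvm_test_classes_present(spark_home):
    """Return True if a compiled JVM test-class directory exists.

    The directory layout checked here is an assumption for this sketch.
    """
    pattern = os.path.join(spark_home, "sql", "core", "target", "*", "test-classes")
    return len(glob.glob(pattern)) > 0


# Evaluate the condition once, when the test module is imported.
SPARK_HOME = os.environ.get("SPARK_HOME", ".")
HAVE_TEST_CLASSES = jvm_test_classes_present(SPARK_HOME)


class JavaUDFRegistrationSketch(unittest.TestCase):
    @unittest.skipIf(not HAVE_TEST_CLASSES, "JVM test classes are not compiled")
    def test_register_java_function(self):
        # The real test would call spark.udf.registerJavaFunction(...) here;
        # it is skipped, rather than failed, on a plain `sbt package` build.
        pass
```

With this shape, a build without the test classes reports the test as skipped instead of raising the classpath error shown above.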

### Why are the changes needed?

To support running the tests against a plain SBT build. See also https://spark.apache.org/developer-tools.html

### Does this PR introduce _any_ user-facing change?

No, it's test-only.

### How was this patch tested?

Manually tested as below:

```bash
./build/sbt -DskipTests -Phive-thriftserver clean package
cd python
./run-tests --python-executable=python3 --testname="pyspark.sql.udf UserDefinedFunction"
./run-tests --python-executable=python3 --testname="pyspark.sql.tests.test_udf UDFTests"
```

```bash
./build/sbt -DskipTests -Phive-thriftserver clean test:package
cd python
./run-tests --python-executable=python3 --testname="pyspark.sql.udf UserDefinedFunction"
./run-tests --python-executable=python3 --testname="pyspark.sql.tests.test_udf UDFTests"
```

Closes apache#28795 from HyukjinKwon/SPARK-31965.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

… sql.execution package

### What changes were proposed in this pull request?

This PR proposes to remove the package-private modifier from classes/objects in the `sql.execution` package, as per SPARK-16964.

### Why are the changes needed?

This follows a post-hoc review comment; see apache#24996 (comment).

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

N/A

Closes apache#28790 from HeartSaVioR/SPARK-28199-FOLLOWUP-apply-SPARK-16964.

Authored-by: Jungtaek Lim (HeartSaVioR) <kabhwan.opensource@gmail.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

…gisticRegressionWithSGDTests.test_training_and_prediction

### What changes were proposed in this pull request?

This is similar to apache@64cb6f7.

The test `StreamingLogisticRegressionWithSGDTests.test_training_and_prediction` also seems flaky. This PR increases its timeout to 3 minutes as well. The cause is very likely the elapsed time.

See https://amplab.cs.berkeley.edu/jenkins/job/SparkPullRequestBuilder/123787/testReport/pyspark.mllib.tests.test_streaming_algorithms/StreamingLogisticRegressionWithSGDTests/test_training_and_prediction/

```
Traceback (most recent call last):
  File "/home/jenkins/workspace/SparkPullRequestBuilder2/python/pyspark/mllib/tests/test_streaming_algorithms.py", line 330, in test_training_and_prediction
    eventually(condition, timeout=60.0)
  File "/home/jenkins/workspace/SparkPullRequestBuilder2/python/pyspark/testing/utils.py", line 90, in eventually
    % (timeout, lastValue))
AssertionError: Test failed due to timeout after 60 sec, with last condition returning: Latest errors: 0.67, 0.71, 0.78, 0.7, 0.75, 0.74, 0.73, 0.69, 0.62, 0.71, 0.69, 0.75, 0.72, 0.77, 0.71, 0.74, 0.76, 0.78, 0.7, 0.78, 0.8, 0.74, 0.77, 0.75, 0.76, 0.76, 0.75
```

### Why are the changes needed?

To make PR builds more stable.

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

Jenkins will test them out.

Closes apache#28798 from HyukjinKwon/SPARK-31966.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>
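For readers unfamiliar with the polling helper named in the traceback, here is a minimal sketch of a retry-until-timeout function. It is not the `pyspark.testing.utils.eventually` source; the `interval` parameter and the 180-second default (mirroring the "3 minutes" mentioned above) are assumptions for illustration.

```python
import time


def eventually(condition, timeout=180.0, interval=0.1):
    """Poll `condition` until it returns True or `timeout` seconds elapse."""
    deadline = time.monotonic() + timeout
    last_value = None
    while time.monotonic() < deadline:
        last_value = condition()
        if last_value is True:
            return
        time.sleep(interval)
    # Report the last non-True value so a slow test can be told apart
    # from a wrong one.
    raise AssertionError(
        "Test failed due to timeout after %g sec, with last condition returning: %s"
        % (timeout, last_value)
    )
```

A larger timeout only changes how long a slow machine is tolerated; a condition that becomes true quickly still returns immediately.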

### What changes were proposed in this pull request?

This is a followup of apache#23388.

apache#23388 has an issue: it doesn't handle subquery expressions and assumes they will be turned into joins. However, this is not true for non-correlated subquery expressions.

This PR fixes this issue. It now doesn't skip `Subquery`, and subquery expressions will be handled by `OptimizeSubqueries`, which runs the optimizer with the subquery.

Note that correlated subquery expressions will be handled twice: once in `OptimizeSubqueries`, and once later when they become joins. This is OK as `NormalizeFloatingNumbers` is idempotent now.

### Why are the changes needed?

To fix a bug.

### Does this PR introduce _any_ user-facing change?

Yes, see the newly added test.

### How was this patch tested?

New test.

Closes apache#28785 from cloud-fan/normalize.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

Remove duplicate test cases.

### Why are the changes needed?

To improve test quality.

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

No test.

Closes apache#28782 from GuoPhilipse/31954-delete-duplicate-testcase.

Lead-authored-by: GuoPhilipse <46367746+GuoPhilipse@users.noreply.github.com>

Co-authored-by: GuoPhilipse <guofei_ok@126.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

… task

### What changes were proposed in this pull request?

This PR is a followup of apache#26624.

This PR cleans up MDC properties if the original value is empty.

Besides, this PR adds a warning and ignores the value when the user tries to override the value of `taskName`.

### Why are the changes needed?

Before this PR, running the following jobs:

```
sc.setLocalProperty("mdc.my", "ABC")
sc.parallelize(1 to 100).count()
sc.setLocalProperty("mdc.my", null)
sc.parallelize(1 to 100).count()
```

still left the MDC value "ABC" in the log of the second count job, even though the value had been unset.

### Does this PR introduce _any_ user-facing change?

Yes. Users will 1) no longer see MDC values after unsetting them; 2) see a warning if they try to override the value of `taskName`.

### How was this patch tested?

Tested manually.

Closes apache#28756 from Ngone51/followup-8981.

Authored-by: yi.wu <yi.wu@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>
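The cleanup behaviour described above can be sketched as follows. This is a simplified model, not Spark's executor code: the function `apply_task_mdc`, the plain dictionary standing in for the logging MDC, and the stripping of the `mdc.` prefix are all assumptions made for the example.

```python
import logging

logger = logging.getLogger("mdc_sketch")


def apply_task_mdc(mdc, task_name, local_properties):
    """Reset `mdc` for a task from its local properties (hypothetical helper)."""
    # Drop values left over from a previous task on the same thread.
    mdc.clear()
    mdc["taskName"] = task_name
    for key, value in local_properties.items():
        # Unrelated properties and unset (None) values contribute nothing.
        if not key.startswith("mdc.") or value is None:
            continue
        name = key[len("mdc."):]
        if name == "taskName":
            logger.warning("Ignoring attempt to override taskName via %s", key)
            continue
        mdc[name] = value
    return mdc
```

Clearing before repopulating is what keeps "ABC" from leaking into the second job in the example above.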

… conversion

### What changes were proposed in this pull request?

This PR adds a new rule to support pushing predicates through a join by rewriting the join condition to CNF (conjunctive normal form). The following example shows the steps of this rule:

1. Prepare Table:

```sql
CREATE TABLE x(a INT);
CREATE TABLE y(b INT);
...
SELECT * FROM x JOIN y ON ((a < 0 and a > b) or a > 10);
```

2. Convert the join condition to CNF:

```
(a < 0 or a > 10) and (a > b or a > 10)
```

3. Split conjunctive predicates

| Predicates |
| --- |
| (a < 0 or a > 10) |
| (a > b or a > 10) |

4. Push predicate

| Table | Predicate |
| --- | --- |
| x | (a < 0 or a > 10) |

### Why are the changes needed?

Improve query performance. PostgreSQL, [Impala](https://issues.apache.org/jira/browse/IMPALA-9183) and Hive support this feature.

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

Unit test and benchmark test.

| SQL | Before this PR | After this PR |
| --- | --- | --- |
| TPCDS 5T Q13 | 84s | 21s |
| TPCDS 5T q85 | 66s | 34s |
| TPCH 1T q19 | 37s | 32s |

Closes apache#28733 from gengliangwang/cnf.

Lead-authored-by: Gengliang Wang <gengliang.wang@databricks.com>

Co-authored-by: Yuming Wang <yumwang@ebay.com>

Signed-off-by: Gengliang Wang <gengliang.wang@databricks.com>
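The four steps of the CNF commit above can be traced on its own example with a small, self-contained sketch. The types `Pred`, `And`, `Or` and the helper functions are hypothetical names for this illustration and do not correspond to Catalyst classes; the sketch also ignores negation and places no bound on the size of the expanded expression, which a production rule would need to consider.

```python
from collections import namedtuple

# Tiny expression tree: a leaf comparison plus binary AND / OR nodes.
Pred = namedtuple("Pred", ["text", "cols"])
And = namedtuple("And", ["left", "right"])
Or = namedtuple("Or", ["left", "right"])


def to_cnf(e):
    """Distribute OR over AND until no AND sits beneath an OR."""
    if isinstance(e, Pred):
        return e
    left, right = to_cnf(e.left), to_cnf(e.right)
    if isinstance(e, And):
        return And(left, right)
    if isinstance(left, And):
        return And(to_cnf(Or(left.left, right)), to_cnf(Or(left.right, right)))
    if isinstance(right, And):
        return And(to_cnf(Or(left, right.left)), to_cnf(Or(left, right.right)))
    return Or(left, right)


def conjuncts(e):
    """Split a CNF expression into its top-level AND-ed predicates."""
    if isinstance(e, And):
        return conjuncts(e.left) + conjuncts(e.right)
    return [e]


def columns(e):
    """Collect the column names referenced by an expression."""
    if isinstance(e, Pred):
        return set(e.cols)
    return columns(e.left) | columns(e.right)


def render(e):
    if isinstance(e, Pred):
        return e.text
    word = "and" if isinstance(e, And) else "or"
    return "(%s %s %s)" % (render(e.left), word, render(e.right))


# Join condition from the example: (a < 0 and a > b) or a > 10
cond = Or(
    And(Pred("a < 0", ("a",)), Pred("a > b", ("a", "b"))),
    Pred("a > 10", ("a",)),
)
parts = conjuncts(to_cnf(cond))
# A conjunct can be pushed to table x only if it references x's columns alone.
pushable_to_x = [p for p in parts if columns(p) <= {"a"}]
```

Here `parts` renders as `(a < 0 or a > 10)` and `(a > b or a > 10)`, and only the first lands in `pushable_to_x`, matching the tables in the commit message.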

### What changes were proposed in this pull request?

This PR intends to add a built-in SQL function, `WIDTH_BUCKET`. It is a rework of apache#18323.

Closes apache#18323

Other RDBMS references for `WIDTH_BUCKET`:

- Oracle: https://docs.oracle.com/cd/B28359_01/olap.111/b28126/dml_functions_2137.htm#OLADM717
- PostgreSQL: https://www.postgresql.org/docs/current/functions-math.html
- Snowflake: https://docs.snowflake.com/en/sql-reference/functions/width_bucket.html
- Prestodb: https://prestodb.io/docs/current/functions/math.html
- Teradata: https://docs.teradata.com/reader/kmuOwjp1zEYg98JsB8fu_A/Wa8vw69cGzoRyNULHZeudg
- DB2: https://www.ibm.com/support/producthub/db2/docs/content/SSEPGG_11.5.0/com.ibm.db2.luw.sql.ref.doc/doc/r0061483.html?pos=2

### Why are the changes needed?

For better usability.

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

Added unit tests.

Closes apache#28764 from maropu/SPARK-21117.

Lead-authored-by: Takeshi Yamamuro <yamamuro@apache.org>

Co-authored-by: Yuming Wang <wgyumg@gmail.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>
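As a rough guide to what `WIDTH_BUCKET` computes, the sketch below follows the equal-width histogram semantics commonly documented by the databases listed above. It is an illustration under stated assumptions, not Spark's implementation: it handles only an ascending range (`min_value < max_value`) and rejects other inputs rather than defining their behaviour.

```python
import math


def width_bucket(value, min_value, max_value, num_buckets):
    """Return the 1-based bucket of `value` in an equal-width histogram.

    Values below the range map to 0 and values at or above the upper
    bound map to num_buckets + 1.
    """
    if num_buckets <= 0 or min_value >= max_value:
        raise ValueError("sketch supports num_buckets > 0 and min_value < max_value only")
    if value < min_value:
        return 0
    if value >= max_value:
        return num_buckets + 1
    fraction = (value - min_value) / (max_value - min_value)
    return int(math.floor(num_buckets * fraction)) + 1
```

For example, `width_bucket(5.35, 0.024, 10.06, 5)` falls into bucket 3 under these semantics.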

### What changes were proposed in this pull request?

This PR upgrades HtmlUnit. Selenium and Jetty are also upgraded because of dependencies.

### Why are the changes needed?

A security issue affecting HtmlUnit was recently reported: https://nvd.nist.gov/vuln/detail/CVE-2020-5529

According to the report, arbitrary code can be run by malicious users. HtmlUnit is used only for tests, so the impact might not be large, but it is better to upgrade it just in case.

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

Existing test cases.

Closes apache#28585 from sarutak/upgrade-htmlunit.

Authored-by: Kousuke Saruta <sarutak@oss.nttdata.com>

Signed-off-by: Sean Owen <srowen@gmail.com>

…eption

### What changes were proposed in this pull request?

A minor fix to the `append` method of `StringConcat`: cap the length at `MAX_ROUNDED_ARRAY_LENGTH` so that it does not overflow and cause a `StringIndexOutOfBoundsException`.

Thanks to **Jeffrey Stokes** for reporting the issue and explaining the underlying problem in detail in the JIRA.

### Why are the changes needed?

This fixes a `StringIndexOutOfBoundsException` on overflow.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Added a test in StringsUtilSuite.

Closes apache#28750 from dilipbiswal/SPARK-31916.

Authored-by: Dilip Biswal <dkbiswal@gmail.com>

Signed-off-by: Takeshi Yamamuro <yamamuro@apache.org>

### What changes were proposed in this pull request?

This PR normalizes all binary comparison expressions when comparing plans.

### Why are the changes needed?

To improve the test framework. Without this change, the following test fails:

```scala
test("SPARK-31912 Normalize all binary comparison expressions") {
  val original = testRelation
    .where('a === 'b && Literal(13) >= 'b).as("x")
  val optimized = testRelation
    .where(IsNotNull('a) && IsNotNull('b) && 'a === 'b && 'b <= 13 && 'a <= 13).as("x")
  comparePlans(Optimize.execute(original.analyze), optimized.analyze)
}
```
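The idea behind the normalization can be illustrated outside Spark: two comparisons such as `13 >= b` and `b <= 13` are equivalent, so a plan comparison should map both to one canonical form. The sketch below uses a hypothetical tuple representation and an arbitrary ordering (column names before literals); how Spark's test helper orders the operands is not stated in the description above and is not assumed here.

```python
# Operator to use when the two operands of a comparison are swapped.
FLIPPED = {"<": ">", "<=": ">=", ">": "<", ">=": "<=", "=": "="}


def normalize_comparison(left, op, right):
    """Put operands in a canonical order, flipping the operator when they swap.

    Columns are modelled as str and literals as numbers; this encoding is an
    assumption of the sketch.
    """
    def key(operand):
        # Columns sort before literals; ties are broken by the text form.
        return (not isinstance(operand, str), str(operand))

    if key(left) > key(right):
        return (right, FLIPPED[op], left)
    return (left, op, right)
```

Under this ordering, `normalize_comparison(13, ">=", "b")` and `normalize_comparison("b", "<=", 13)` both yield `("b", "<=", 13)`, so the two plans in the test above compare equal on that predicate.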

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

Manual test.

Closes apache#28734 from wangyum/SPARK-31912.

Authored-by: Yuming Wang <yumwang@ebay.com>

Signed-off-by: Yuming Wang <wgyumg@gmail.com>

…while switching standard time zone offset

### What changes were proposed in this pull request?

Fix the bug in microseconds rebasing during transitions from one standard time zone offset to another one. In the PR, I propose to change the implementation of `rebaseGregorianToJulianMicros`, which performs rebasing via local timestamps. In the case of overlapping:

1. Check whether the original instant is the earlier or the later instant of the overlapped local timestamp.
2. If it is the earlier instant, take the zone and DST offsets from the previous day; otherwise
3. Set the time zone offsets of the Julian timestamp from the next day.

Note: The fix assumes that transitions cannot happen more often than once per 2 days.

### Why are the changes needed?

The current implementation handles overlapping timestamps only during daylight saving time, but overlapping can also happen during a transition from one standard time zone to another. For example, in the case of `Asia/Hong_Kong`, the time zone switched from `Japan Standard Time` (UTC+9) to `Hong Kong Time` (UTC+8) on _Sunday, 18 November, 1945 01:59:59 AM_. The changes allow handling this special case as well.

### Does this PR introduce _any_ user-facing change?

It might affect micros rebasing before the common era when the non-optimised version of `rebaseGregorianToJulianMicros()` is used directly.

### How was this patch tested?

1. By existing tests in `DateTimeUtilsSuite`, `RebaseDateTimeSuite`, `DateFunctionsSuite`, `DateExpressionsSuite` and `TimestampFormatterSuite`.
2. Added a new test to `RebaseDateTimeSuite`.
3. Regenerated `gregorian-julian-rebase-micros.json` with a step of 30 minutes, and got the same JSON file. The JSON file isn't affected because previously it was generated with a step of 1 week, so the spike in diffs/switch points during the 1 hour of timestamp overlapping wasn't detected.

Closes apache#28787 from MaxGekk/HongKong-tz-1945.

Authored-by: Max Gekk <max.gekk@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

…/Join

### What changes were proposed in this pull request?

Currently we only push nested column pruning through a few operators such as LIMIT, SAMPLE, etc. This patch extends the feature to other operators, including RepartitionByExpression and Join.

### Why are the changes needed?

Currently nested column pruning is applied only on a few operators, which limits its benefit. Extending its coverage makes the feature apply more generally across different queries.

### Does this PR introduce _any_ user-facing change?

Yes. More SQL operators are covered by nested column pruning.

### How was this patch tested?

Added unit tests and end-to-end tests.

Closes apache#28556 from viirya/others-column-pruning.

Authored-by: Liang-Chi Hsieh <viirya@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

This reverts commit 69ba9b6.

### What changes were proposed in this pull request?

apache#28747 reverted apache#28439 due to a flaky test case. This PR fixes the flaky test and adds pagination support.

### Why are the changes needed?

To support pagination for the streaming tab.

### Does this PR introduce _any_ user-facing change?

Yes. Streaming tab tables will now be paginated.

### How was this patch tested?

Manually.

Closes apache#28748 from iRakson/fixstreamingpagination.

Authored-by: iRakson <raksonrakesh@gmail.com>

Signed-off-by: Sean Owen <srowen@gmail.com>

… files

### What changes were proposed in this pull request?

When removing non-existing files in the release script, do not fail.

### Why are the changes needed?

This is to make the release script more robust, as we don't care if the files exist before we remove them.

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

Tested when cutting 3.0.0 RC.

Closes apache#28815 from cloud-fan/release.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

### What changes were proposed in this pull request?

This PR intends to extract SQL reserved/non-reserved keywords from the ANTLR grammar file (`SqlBase.g4`) directly.

This approach is based on the cloud-fan suggestion: apache#28779 (comment)

### Why are the changes needed?

It is hard to maintain a full set of the keywords in `TableIdentifierParserSuite`, so it would be nice if we could extract them from the `SqlBase.g4` file directly.

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

Existing tests.

Closes apache#28802 from maropu/SPARK-31950-2.

Authored-by: Takeshi Yamamuro <yamamuro@apache.org>

Signed-off-by: Takeshi Yamamuro <yamamuro@apache.org>

### What changes were proposed in this pull request?

This proposes a minor refactoring to match `NestedColumnAliasing` to `GeneratorNestedColumnAliasing` so it returns the pruned plan directly.

```scala
case p @ NestedColumnAliasing(nestedFieldToAlias, attrToAliases) =>
  NestedColumnAliasing.replaceToAliases(p, nestedFieldToAlias, attrToAliases)
```

vs

```scala
case GeneratorNestedColumnAliasing(p) => p
```

### Why are the changes needed?

Just for readability.

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

Existing tests should cover.

Closes apache#28812 from HyukjinKwon/SPARK-31977.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: Takeshi Yamamuro <yamamuro@apache.org>

…ime regression

### What changes were proposed in this pull request?

After apache#28192, the job list page becomes very slow. For example, after the following operation, the UI loading can take >40 sec.

```
(1 to 1000).foreach(_ => sc.parallelize(1 to 10).collect)
```

This is caused by a [performance issue of `vis-timeline`](visjs/vis-timeline#379). The serious issue affects both branch-3.0 and branch-2.4.

I tried a different version, 4.21.0, from https://cdnjs.com/libraries/vis

The infinite drawing issue also seems to be fixed if the zoom is disabled by default.

### Why are the changes needed?

To fix the serious performance issue in the web UI by falling back vis-timeline-graph2d to an earlier version.

### Does this PR introduce _any_ user-facing change?

Yes, it fixes the UI performance regression.

### How was this patch tested?

Manual test.

Closes apache#28806 from gengliangwang/downgradeVis.

Authored-by: Gengliang Wang <gengliang.wang@databricks.com>

Signed-off-by: Gengliang Wang <gengliang.wang@databricks.com>

### What changes were proposed in this pull request?

Add instance weight support in LinearRegressionSummary.

### Why are the changes needed?

LinearRegression and RegressionMetrics support instance weight. We should support instance weight in LinearRegressionSummary too.

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

Added a new test.

Closes apache#28772 from huaxingao/lir_weight_summary.

Authored-by: Huaxin Gao <huaxing@us.ibm.com>

Signed-off-by: Sean Owen <srowen@gmail.com>

…e from the command line for sbt

### What changes were proposed in this pull request?

This PR proposes to make the guava version configurable from the command line for sbt.

### Why are the changes needed?

apache#28455 added the configurability for Maven but not for sbt. sbt is usually faster than Maven, so it's useful for developers.

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

I confirmed the guava version is changed with the following commands.

```
$ build/sbt "inspect tree clean" | grep guava
[info] +-spark/*:dependencyOverrides = Set(com.google.guava:guava:14.0.1, xerces:xercesImpl:2.12.0, jline:jline:2.14.6, org.apache.avro:avro:1.8.2)
```

```
$ build/sbt -Dguava.version=25.0-jre "inspect tree clean" | grep guava
[info] +-spark/*:dependencyOverrides = Set(com.google.guava:guava:25.0-jre, xerces:xercesImpl:2.12.0, jline:jline:2.14.6, org.apache.avro:avro:1.8.2)
```

Closes apache#28822 from sarutak/guava-version-for-sbt.

Authored-by: Kousuke Saruta <sarutak@oss.nttdata.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

…n application summary is unavailable

### What changes were proposed in this pull request?

This PR enriches the exception message when the application summary is not available.

apache#28444 covers the case when application information is not available, but the case when the application summary is not available is not covered.

### Why are the changes needed?

To complement apache#28444.

### Does this PR introduce _any_ user-facing change?

Yes.

Before this change, we can get the following error message when we access `/jobs` if the application summary is not available.

<img width="707" alt="no-such-element-exception-error-message" src="https://user-images.githubusercontent.com/4736016/84562182-6aadf200-ad8d-11ea-8980-d63edde6fad6.png">

After this change, we can get the following error message, as with apache#28444.

<img width="1349" alt="enriched-errorm-message" src="https://user-images.githubusercontent.com/4736016/84562189-85806680-ad8d-11ea-8346-4da2ec11df2b.png">

### How was this patch tested?

I checked with the following procedure.

1. Set a breakpoint on the line `kvstore.write(appSummary)` in `AppStatusListener#onApplicationStart`. Only the thread reaching this line should be suspended.
2. Start spark-shell and wait a few seconds.
3. Access `/jobs`.

Closes apache#28820 from sarutak/fix-no-such-element.

Authored-by: Kousuke Saruta <sarutak@oss.nttdata.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

A unit test is added

Partition duplicate check added in `org.apache.spark.sql.execution.datasources.PartitioningUtils#validatePartitionColumn`

### Why are the changes needed?

When people write data with duplicate partition columns, loading the written data fails with `org.apache.spark.sql.AnalysisException: Found duplicate column ...`.

### Does this PR introduce _any_ user-facing change?

Yes.

It will prevent people from using duplicate partition columns to write data.

1. Before the PR:

The write `df.write.partitionBy("b", "b").csv("file:///tmp/output")` appears to succeed,

but reading the output raises an exception:

`spark.read.csv("file:///tmp/output").show()`

org.apache.spark.sql.AnalysisException: Found duplicate column(s) in the partition schema: `b`;

2. After the PR:

`df.write.partitionBy("b", "b").csv("file:///tmp/output")` will trigger the exception:

org.apache.spark.sql.AnalysisException: Found duplicate column(s) b, b: `b`;
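The kind of write-time check described above can be sketched in a few lines. This is a stand-alone illustration, not the body of `PartitioningUtils#validatePartitionColumn`: the function name, the `case_sensitive` flag, and the error text are assumptions for the example.

```python
from collections import Counter


def validate_partition_columns(columns, case_sensitive=False):
    """Raise ValueError if any partition column name appears more than once."""
    names = list(columns) if case_sensitive else [c.lower() for c in columns]
    duplicates = sorted(name for name, count in Counter(names).items() if count > 1)
    if duplicates:
        raise ValueError(
            "Found duplicate column(s) in partition columns: "
            + ", ".join("`%s`" % d for d in duplicates)
        )
```

Failing at write time means the bad layout never reaches disk, instead of surfacing later when the data is read back.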

### How was this patch tested?

Unit test.

Closes apache#28814 from TJX2014/master-SPARK-31968.

Authored-by: TJX2014 <xiaoxingstack@gmail.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

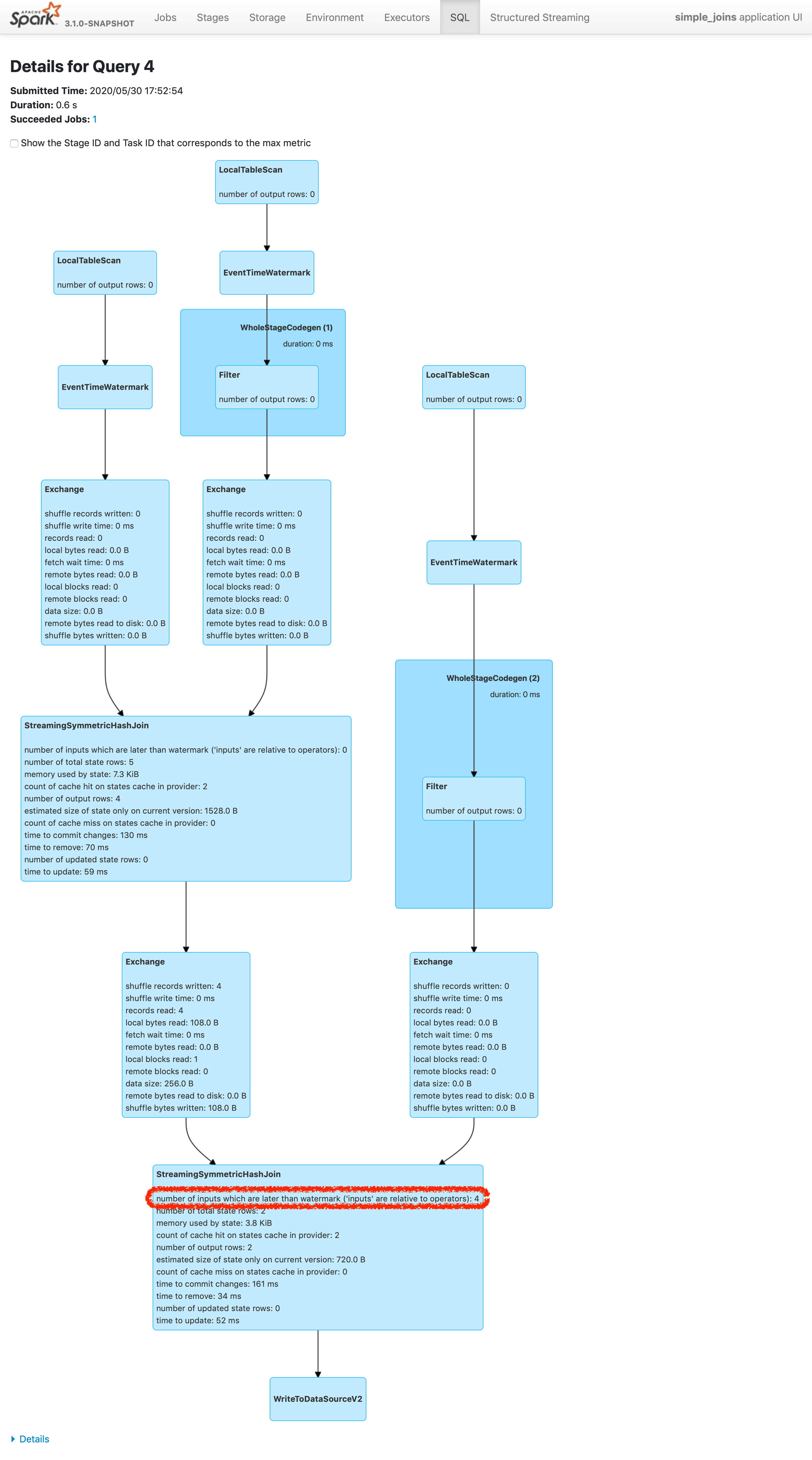

…han watermark plus allowed delay

### What changes were proposed in this pull request?

Please refer to https://issues.apache.org/jira/browse/SPARK-24634 for the rationale of the issue.

This patch adds a new metric to count the number of inputs that arrived later than watermark plus allowed delay. To keep the change simple, this patch doesn't count the exact number of input rows which are later than watermark plus allowed delay. Instead, this patch counts the inputs which are dropped in the logic of the operator. The difference between the two shows up in streaming aggregation: to optimize the calculation, streaming aggregation "pre-aggregates" the input rows, and later checks the lateness against the "pre-aggregated" inputs, hence the number might be reduced.

The new metric will be provided in two places:

1. On Spark UI: check the metrics in stateful operator nodes in the query execution details page in the SQL tab
2. On Streaming Query Listener: check "numLateInputs" in "stateOperators" in `QueryProgressEvent`.

### Why are the changes needed?

Dropping late inputs means that end users might not get the expected outputs. End users may be aware of this and tolerate the result (that's what allowed lateness is for), but they should be able to observe whether the current value of allowed lateness drops inputs or not, so that they can adjust the value.

Also, if they happen to have multiple stateful operators in a single query and Spark drops late inputs "between" these operators, it becomes a "correctness" issue. Spark should disallow such a possibility, but given that we already provided the flexibility, we should at least provide a way to observe the correctness issue so that users can decide whether to correct their query.

### Does this PR introduce _any_ user-facing change?

Yes. End users will be able to retrieve the information about late inputs in two ways:

1. SQL tab in Spark UI
2. Streaming Query Listener

### How was this patch tested?

New UTs added & existing UTs are modified to reflect the change. And ran manual test reproducing SPARK-28094. I've picked the specific case on "B outer C outer D" which is enough to represent the "intermediate late row" issue due to global watermark. https://gist.github.com/jammann/b58bfbe0f4374b89ecea63c1e32c8f17 Spark logs warning message on the query which means SPARK-28074 is working correctly, ``` 20/05/30 17:52:47 WARN UnsupportedOperationChecker: Detected pattern of possible 'correctness' issue due to global watermark. The query contains stateful operation which can emit rows older than the current watermark plus allowed late record delay, which are "late rows" in downstream stateful operations and these rows can be discarded. Please refer the programming guide doc for more details.; Join LeftOuter, ((D_FK#28 = D_ID#87) AND (B_LAST_MOD#26-T30000ms = D_LAST_MOD#88-T30000ms)) :- Join LeftOuter, ((C_FK#27 = C_ID#58) AND (B_LAST_MOD#26-T30000ms = C_LAST_MOD#59-T30000ms)) : :- EventTimeWatermark B_LAST_MOD#26: timestamp, 30 seconds : : +- Project [v#23.B_ID AS B_ID#25, v#23.B_LAST_MOD AS B_LAST_MOD#26, v#23.C_FK AS C_FK#27, v#23.D_FK AS D_FK#28] : : +- Project [from_json(StructField(B_ID,StringType,false), StructField(B_LAST_MOD,TimestampType,false), StructField(C_FK,StringType,true), StructField(D_FK,StringType,true), value#21, Some(UTC)) AS v#23] : : +- Project [cast(value#8 as string) AS value#21] : : +- StreamingRelationV2 org.apache.spark.sql.kafka010.KafkaSourceProvider3a7fd18c, kafka, org.apache.spark.sql.kafka010.KafkaSourceProvider$KafkaTable396d2958, org.apache.spark.sql.util.CaseInsensitiveStringMapa51ee61a, [key#7, value#8, topic#9, partition#10, offset#11L, timestamp#12, timestampType#13], StreamingRelation DataSource(org.apache.spark.sql.SparkSessiond221af8,kafka,List(),None,List(),None,Map(inferSchema -> true, startingOffsets -> earliest, subscribe -> B, kafka.bootstrap.servers -> localhost:9092),None), kafka, [key#0, value#1, topic#2, partition#3, 
offset#4L, timestamp#5, timestampType#6] : +- EventTimeWatermark C_LAST_MOD#59: timestamp, 30 seconds : +- Project [v#56.C_ID AS C_ID#58, v#56.C_LAST_MOD AS C_LAST_MOD#59] : +- Project [from_json(StructField(C_ID,StringType,false), StructField(C_LAST_MOD,TimestampType,false), value#54, Some(UTC)) AS v#56] : +- Project [cast(value#41 as string) AS value#54] : +- StreamingRelationV2 org.apache.spark.sql.kafka010.KafkaSourceProvider3f507373, kafka, org.apache.spark.sql.kafka010.KafkaSourceProvider$KafkaTable7b6736a4, org.apache.spark.sql.util.CaseInsensitiveStringMapa51ee61b, [key#40, value#41, topic#42, partition#43, offset#44L, timestamp#45, timestampType#46], StreamingRelation DataSource(org.apache.spark.sql.SparkSessiond221af8,kafka,List(),None,List(),None,Map(inferSchema -> true, startingOffsets -> earliest, subscribe -> C, kafka.bootstrap.servers -> localhost:9092),None), kafka, [key#33, value#34, topic#35, partition#36, offset#37L, timestamp#38, timestampType#39] +- EventTimeWatermark D_LAST_MOD#88: timestamp, 30 seconds +- Project [v#85.D_ID AS D_ID#87, v#85.D_LAST_MOD AS D_LAST_MOD#88] +- Project [from_json(StructField(D_ID,StringType,false), StructField(D_LAST_MOD,TimestampType,false), value#83, Some(UTC)) AS v#85] +- Project [cast(value#70 as string) AS value#83] +- StreamingRelationV2 org.apache.spark.sql.kafka010.KafkaSourceProvider2b90e779, kafka, org.apache.spark.sql.kafka010.KafkaSourceProvider$KafkaTable36f8cd29, org.apache.spark.sql.util.CaseInsensitiveStringMapa51ee620, [key#69, value#70, topic#71, partition#72, offset#73L, timestamp#74, timestampType#75], StreamingRelation DataSource(org.apache.spark.sql.SparkSessiond221af8,kafka,List(),None,List(),None,Map(inferSchema -> true, startingOffsets -> earliest, subscribe -> D, kafka.bootstrap.servers -> localhost:9092),None), kafka, [key#62, value#63, topic#64, partition#65, offset#66L, timestamp#67, timestampType#68] ``` and we can find the late inputs from the batch 4 as follows:  which represents 
intermediate inputs are being lost, which ends up as a correctness issue.

Closes apache#28607 from HeartSaVioR/SPARK-24634-v3.

Authored-by: Jungtaek Lim (HeartSaVioR) <kabhwan.opensource@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>
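The gap between "late rows" and "late pre-aggregated inputs" mentioned in the late-inputs commit above can be shown with a toy model. The function `count_late`, the `(window_end_ms, value)` row shape, and the `<=` lateness test are assumptions chosen for the illustration, not the operator's actual logic.

```python
from collections import defaultdict


def count_late(rows, watermark_ms):
    """Return (late input rows, late pre-aggregated groups).

    `rows` is a list of (window_end_ms, value) pairs.
    """
    # Exact count: every individual input row that is behind the watermark.
    late_rows = sum(1 for window_end, _ in rows if window_end <= watermark_ms)

    # What the operator sees: rows already merged per window before the check.
    pre_aggregated = defaultdict(int)
    for window_end, value in rows:
        pre_aggregated[window_end] += value
    late_groups = sum(1 for window_end in pre_aggregated if window_end <= watermark_ms)

    return late_rows, late_groups


rows = [(1000, 1), (1000, 2), (2000, 3), (5000, 4)]
late_rows, late_groups = count_late(rows, watermark_ms=3000)
```

With these inputs three rows are late, but only two pre-aggregated groups are dropped, which is why the reported metric can be lower than the exact row count.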

### What changes were proposed in this pull request?

The Structured Streaming progress reporter always reports an `empty` progress when there is no new data. As designed, we should provide progress updates every 10s (default) when there is no new data.

Before PR / After PR: [screenshots]

### Why are the changes needed?

Fixes a bug around incorrect progress reports.

### Does this PR introduce any user-facing change?

Fixes a bug around incorrect progress reports.

### How was this patch tested?

Existing UT and manual test.

Closes apache#28391 from uncleGen/SPARK-31593.

Authored-by: uncleGen <hustyugm@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

… setLocalProperty and log4j.properties

### What changes were proposed in this pull request?

This PR proposes to use "mdc.XXX" as the consistent key for both `sc.setLocalProperty` and `log4j.properties` when setting up configurations for MDC.

### Why are the changes needed?

It's weird that we use "mdc.XXX" as the key to set an MDC value via `sc.setLocalProperty` while we use "XXX" as the key to set the MDC pattern in log4j.properties. It could also place an extra burden on the user.

### Does this PR introduce _any_ user-facing change?

No, as the MDC feature is added in version 3.1, which hasn't been released.

### How was this patch tested?

Tested manually.

Closes apache#28801 from Ngone51/consistent-mdc.

Authored-by: yi.wu <yi.wu@databricks.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

…us column sortable

### What changes were proposed in this pull request?

In apache#28485, pagination support for the tables of the Structured Streaming tab was added. It missed 2 things:

* For sorting the duration column, `String` was used, which sometimes gives wrong results (consider `"3 ms"` and `"12 ms"`). Now we first sort the duration column and then convert it to a readable String.
* The Status column was not made sortable.

### Why are the changes needed?

To fix the wrong sorting result and to make the Status column sortable.

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

After changes:

<img width="1677" alt="Screenshot 2020-06-08 at 2 18 48 PM" src="https://user-images.githubusercontent.com/15366835/84010992-153fa280-a993-11ea-9846-bf176f2ec5d7.png">

Closes apache#28752 from iRakson/ssTests.

Authored-by: iRakson <raksonrakesh@gmail.com>

Signed-off-by: Sean Owen <srowen@gmail.com>
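The sorting problem named in the commit above is easy to reproduce in isolation. The snippet below is a generic illustration with made-up durations, not code from the Spark UI.

```python
durations_ms = [12, 3, 250]

# Sorting the formatted strings compares characters, so "12 ms" sorts
# before "3 ms" because "1" < "3".
sorted_as_strings = sorted("%d ms" % d for d in durations_ms)

# Sorting the numeric values first and formatting afterwards gives the
# order a user expects.
sorted_then_formatted = ["%d ms" % d for d in sorted(durations_ms)]
```

Here `sorted_as_strings` is `["12 ms", "250 ms", "3 ms"]`, while `sorted_then_formatted` is `["3 ms", "12 ms", "250 ms"]`.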

## What changes were proposed in this pull request? In NestedColumnAliasing rule, we create aliases for nested field access in project list. We considered that top level parent field and nested fields under it were both accessed. In the case, we don't create the aliases because they are redundant. There is another case, where a nested parent field and nested fields under it were both accessed, which we don't consider now. We don't need to create aliases in this case too. ## How was this patch tested? Added test. Closes apache#24525 from viirya/SPARK-27633. Lead-authored-by: Liang-Chi Hsieh <viirya@gmail.com> Co-authored-by: Liang-Chi Hsieh <liangchi@uber.com> Signed-off-by: HyukjinKwon <gurwls223@apache.org>

…r ThriftCLIService to getPortNumber

### What changes were proposed in this pull request?

This PR brings back 02f32cf, which was reverted by 4a25200 because of a Maven test failure.

Newly made changes:

1. Add a missing log4j file to the test resources.
2. Call `SessionState.detachSession()` to clean up the thread-local one in `afterAll`.
3. Do not use dedicated JVMs for the sbt test runner either.

### Why are the changes needed?

Fix the Maven test.

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

Added new tests.

Closes apache#28797 from yaooqinn/SPARK-31926-NEW.

Authored-by: Kent Yao <yaooqinn@hotmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

- Modify `DateTimeRebaseBenchmark` to benchmark the default date-time rebasing mode, `EXCEPTION`, for saving/loading dates/timestamps from/to parquet files. The mode is benchmarked for modern timestamps after 1900-01-01 00:00:00Z and dates after 1582-10-15.
- Regenerate benchmark results in the environment:

| Item | Description |
| ---- | ---- |
| Region | us-west-2 (Oregon) |
| Instance | r3.xlarge |
| AMI | ubuntu/images/hvm-ssd/ubuntu-bionic-18.04-amd64-server-20190722.1 (ami-06f2f779464715dc5) |
| Java | OpenJDK 64-Bit Server VM 1.8.0_252 and OpenJDK 64-Bit Server VM 11.0.7+10 |

### Why are the changes needed?

The `EXCEPTION` rebasing mode is the default mode of the SQL configs `spark.sql.legacy.parquet.datetimeRebaseModeInRead` and `spark.sql.legacy.parquet.datetimeRebaseModeInWrite`. The changes are needed to improve benchmark coverage for default settings.

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

By running the benchmark and checking the results manually.

Closes apache#28829 from MaxGekk/benchmark-exception-mode.

Authored-by: Max Gekk <max.gekk@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

…cation, regression, clustering and fpm ### What changes were proposed in this pull request? set params default values in trait ...Params in both Scala and Python. I will do this in two PRs. I will change classification, regression, clustering and fpm in this PR. Will change the rest in another PR. ### Why are the changes needed? Make ML has the same default param values between estimator and its corresponding transformer, and also between Scala and Python. ### Does this PR introduce _any_ user-facing change? No ### How was this patch tested? Existing tests Closes apache#29112 from huaxingao/set_default. Authored-by: Huaxin Gao <huaxing@us.ibm.com> Signed-off-by: Huaxin Gao <huaxing@us.ibm.com>

### What changes were proposed in this pull request?

This PR aims to remove Python 2 test case from K8s IT.

### Why are the changes needed?

Since Apache Spark 3.1.0 dropped Python 2.7, 3.4 and 3.5 support officially via SPARK-32138, K8s IT fails.

```
KubernetesSuite:
- Run SparkPi with no resources
- Run SparkPi with a very long application name.
- Use SparkLauncher.NO_RESOURCE
- Run SparkPi with a master URL without a scheme.
- Run SparkPi with an argument.
- Run SparkPi with custom labels, annotations, and environment variables.
- All pods have the same service account by default
- Run extraJVMOptions check on driver
- Run SparkRemoteFileTest using a remote data file
- Run SparkPi with env and mount secrets.
- Run PySpark on simple pi.py example
- Run PySpark with Python2 to test a pyfiles example *** FAILED ***
  The code passed to eventually never returned normally. Attempted 113 times over 2.0014854648999996 minutes. Last failure message: false was not true. (KubernetesSuite.scala:370)
- Run PySpark with Python3 to test a pyfiles example
- Run PySpark with memory customization
- Run in client mode.
- Start pod creation from template
- PVs with local storage
- Launcher client dependencies
- Test basic decommissioning
- Run SparkR on simple dataframe.R example
Run completed in 11 minutes, 15 seconds.
Total number of tests run: 20
Suites: completed 2, aborted 0
Tests: succeeded 19, failed 1, canceled 0, ignored 0, pending 0
*** 1 TEST FAILED ***
```

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

Pass Jenkins K8s IT.

Closes apache#29136 from dongjoon-hyun/SPARK-32335.

Authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

…e in hive version related subdirectories ### What changes were proposed in this pull request? This patch fixes the build issue on Hive 1.2 profile brought by apache#29069, via putting mocks for HiveSessionImplSuite in hive version related subdirectories, so that maven build will pick up the proper source code according to the profile. ### Why are the changes needed? apache#29069 fixed the flakiness of HiveSessionImplSuite, but given the patch relied on the default profile (Hive 2.3) it broke the build with Hive 1.2 profile. This patch addresses both Hive versions. ### Does this PR introduce _any_ user-facing change? No ### How was this patch tested? Manually confirmed the test suite via below command: > Hive 1.2 ``` build/mvn -Dtest=none -DwildcardSuites=org.apache.spark.sql.hive.thriftserver.HiveSessionImplSuite test -Phive-1.2 -Phadoop-2.7 -Phive-thriftserver ``` > Hive 2.3 ``` build/mvn -Dtest=none -DwildcardSuites=org.apache.spark.sql.hive.thriftserver.HiveSessionImplSuite test -Phive-2.3 -Phadoop-3.2 -Phive-thriftserver ``` Closes apache#29129 from frankyin-factual/hive-tests. Authored-by: Frank Yin <frank@factual.com> Signed-off-by: Jungtaek Lim (HeartSaVioR) <kabhwan.opensource@gmail.com>

### What changes were proposed in this pull request? This PR aims to upgrade PySpark's embedded cloudpickle to the latest cloudpickle v1.5.0 (See https://github.com/cloudpipe/cloudpickle/blob/v1.5.0/cloudpickle/cloudpickle.py) ### Why are the changes needed? There are many bug fixes. For example, the bug described in the JIRA: dill unpickling fails because they define `types.ClassType`, which is undefined in dill. This results in the following error: ``` Traceback (most recent call last): File "/usr/local/lib/python3.6/site-packages/apache_beam/internal/pickler.py", line 279, in loads return dill.loads(s) File "/usr/local/lib/python3.6/site-packages/dill/_dill.py", line 317, in loads return load(file, ignore) File "/usr/local/lib/python3.6/site-packages/dill/_dill.py", line 305, in load obj = pik.load() File "/usr/local/lib/python3.6/site-packages/dill/_dill.py", line 577, in _load_type return _reverse_typemap[name] KeyError: 'ClassType' ``` See also cloudpipe/cloudpickle#82. This was fixed for cloudpickle 1.3.0+ (cloudpipe/cloudpickle#337), but PySpark's cloudpickle.py doesn't have this change yet. More notably, now it supports C pickle implementation with Python 3.8 which hugely improve performance. This is already adopted in another project such as Ray. ### Does this PR introduce _any_ user-facing change? Yes, as described above, the bug fixes. Internally, users also could leverage the fast cloudpickle backed by C pickle. ### How was this patch tested? Jenkins will test it out. Closes apache#29114 from HyukjinKwon/SPARK-32094. Authored-by: HyukjinKwon <gurwls223@apache.org> Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request? Fix typo error in the error log of SparkOperation trait, reported by apache#28963 (comment) ### Why are the changes needed? fix error in thrift server driver log ### Does this PR introduce _any_ user-facing change? No ### How was this patch tested? Passing GitHub actions Closes apache#29140 from yaooqinn/SPARK-32145-F. Authored-by: Kent Yao <yaooqinn@hotmail.com> Signed-off-by: Wenchen Fan <wenchen@databricks.com>

…erWebUI

### What changes were proposed in this pull request?

This PR allows an external agent to inform the Master that certain hosts are being decommissioned.

### Why are the changes needed?

The current decommissioning is triggered by the Worker getting a SIGPWR (out of band, possibly by some cleanup hook), which then informs the Master about it. This approach may not be feasible in some environments that cannot trigger a cleanup hook on the Worker. In addition, when a large number of worker nodes are being decommissioned, the Master will get a flood of messages.

So we add a new POST endpoint `/workers/kill` on the MasterWebUI that allows an external agent to inform the Master about all the nodes being decommissioned in bulk. The list of nodes is specified by providing a list of hostnames. All workers on those hosts will be decommissioned.

This API is merely a new entry point into the existing decommissioning logic. It does not change how the decommissioning request is handled in its core.

### Does this PR introduce _any_ user-facing change?

Yes, a new endpoint `/workers/kill` is added to the MasterWebUI. By default, only requests originating from an IP address local to the MasterWebUI are allowed.

### How was this patch tested?

Added unit tests

Closes apache#29015 from agrawaldevesh/master_decom_endpoint.

Authored-by: Devesh Agrawal <devesh.agrawal@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

New `spark.sql.metadataCacheTTLSeconds` option that adds time-to-live cache behaviour to the existing caches in `FileStatusCache` and `SessionCatalog`.

### Why are the changes needed?

Currently Spark [caches file listing for tables](https://spark.apache.org/docs/2.4.4/sql-data-sources-parquet.html#metadata-refreshing) and requires issuing `REFRESH TABLE` any time the file listing has changed outside of Spark. Unfortunately, simply submitting `REFRESH TABLE` commands could be very cumbersome. Assuming frequently added files, hundreds of tables and dozens of users querying the data (and expecting up-to-date results), manually refreshing metadata for each table is not a solution.

This is a pretty common use-case for streaming ingestion of data, which can be done outside of Spark (with tools like Kafka Connect, etc.).

A similar feature exists in Presto: `hive.file-status-cache-expire-time` can be found [here](https://prestosql.io/docs/current/connector/hive.html#hive-configuration-properties).

### Does this PR introduce _any_ user-facing change?

Yes, it's controlled with the new `spark.sql.metadataCacheTTLSeconds` option.

When it's set to `-1` (by default), the behaviour of caches doesn't change, so it stays _backwards-compatible_.

Otherwise, you can specify a value in seconds, for example `spark.sql.metadataCacheTTLSeconds: 60` means 1-minute cache TTL.

### How was this patch tested?

Added new tests in:

- FileIndexSuite
- SessionCatalogSuite

Closes apache#28852 from sap1ens/SPARK-30616-metadata-cache-ttl.

Authored-by: Yaroslav Tkachenko <sapiensy@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>
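The time-to-live behaviour described in this commit can be illustrated with a small stand-alone cache. This is a sketch under stated assumptions, not Spark's `FileStatusCache` or `SessionCatalog` code: the `TtlCache` class, its method names, and the injectable clock are all hypothetical, and treating any negative TTL as "never expire" is a simplification of the documented `-1` default.

```python
import time
from typing import Callable, Dict, Hashable, Optional, Tuple


class TtlCache:
    """Toy cache whose entries expire ttl_seconds after they were stored."""

    def __init__(self, ttl_seconds: float = -1,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._ttl = ttl_seconds      # negative value: entries never expire (assumption)
        self._clock = clock          # injectable so the behaviour can be tested
        self._entries: Dict[Hashable, Tuple[float, object]] = {}

    def put(self, key: Hashable, value: object) -> None:
        # Remember when the value was stored alongside the value itself.
        self._entries[key] = (self._clock(), value)

    def get(self, key: Hashable) -> Optional[object]:
        entry = self._entries.get(key)
        if entry is None:
            return None
        stored_at, value = entry
        # With a non-negative TTL, drop entries that are older than the TTL.
        if self._ttl >= 0 and self._clock() - stored_at >= self._ttl:
            del self._entries[key]
            return None
        return value


# Usage with a fake clock: a 60-second TTL, as in the example value above.
now = [0.0]
cache = TtlCache(ttl_seconds=60, clock=lambda: now[0])
cache.put("table_a", ["part-0.parquet"])
now[0] = 59.0
fresh = cache.get("table_a")    # still cached
now[0] = 61.0
expired = cache.get("table_a")  # entry has expired, caller must re-list files
```

With the default of `-1`, the same calls would keep returning the cached listing until something explicitly replaces it, which matches the backwards-compatible behaviour the commit describes.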

…PROFILES ### What changes were proposed in this pull request? This PR aims to rename `HADOOP2_MODULE_PROFILES` to `HADOOP_MODULE_PROFILES` because Hadoop 3 is now the default. ### Why are the changes needed? Hadoop 3 is now the default. ### Does this PR introduce _any_ user-facing change? No. ### How was this patch tested? Pass GitHub Action dependency test. Closes apache#29128 from williamhyun/williamhyun-patch-3. Authored-by: williamhyun <62487364+williamhyun@users.noreply.github.com> Signed-off-by: Sean Owen <srowen@gmail.com>

### What changes were proposed in this pull request?

Use a while-loop instead of recursion.

### Why are the changes needed?

It is 3% ~ 10% faster.

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

Existing test suites

Closes apache#29095 from zhengruifeng/tree_pred_opt.

Authored-by: zhengruifeng <ruifengz@foxmail.com>

Signed-off-by: Sean Owen <srowen@gmail.com>
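The branch name suggests this change concerns tree prediction. As a rough illustration of the recursion-to-loop rewrite (not the actual Spark ML code), the sketch below walks a binary decision tree both ways; the `Node` class and both function names are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass
class Node:
    """Hypothetical decision-tree node; a node without children is a leaf."""
    prediction: float
    feature: int = -1
    threshold: float = 0.0
    left: Optional["Node"] = None
    right: Optional["Node"] = None

    @property
    def is_leaf(self) -> bool:
        return self.left is None or self.right is None


def predict_recursive(node: Node, features: Sequence[float]) -> float:
    # One function call per tree level.
    if node.is_leaf:
        return node.prediction
    child = node.left if features[node.feature] <= node.threshold else node.right
    return predict_recursive(child, features)


def predict_iterative(node: Node, features: Sequence[float]) -> float:
    # Same traversal, but the descent happens inside a single while-loop.
    while not node.is_leaf:
        node = node.left if features[node.feature] <= node.threshold else node.right
    return node.prediction


tree = Node(0.0, feature=0, threshold=0.5, left=Node(1.0), right=Node(2.0))
print(predict_recursive(tree, [0.2]), predict_iterative(tree, [0.9]))
```

Both functions return the same prediction for any input; the loop version simply avoids the per-level call overhead.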

### What changes were proposed in this pull request? This PR aims to update the docker/spark-test and clean up unused stuff. ### Why are the changes needed? Since Spark 3.0.0, Java 11 is supported. We had better use the latest Java and OS. ### Does this PR introduce _any_ user-facing change? No. ### How was this patch tested? Manually do the following as described in https://github.com/apache/spark/blob/master/external/docker/spark-test/README.md . ``` docker run -v $SPARK_HOME:/opt/spark spark-test-master docker run -v $SPARK_HOME:/opt/spark spark-test-worker spark://<master_ip>:7077 ``` Closes apache#29150 from williamhyun/docker. Authored-by: William Hyun <williamhyun3@gmail.com> Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

… executors

### What changes were proposed in this pull request?

This PR adds functionality to consider the tasks running on decommissioning executors for speculation, based on a config.

In spark-on-cloud, we sometimes already know that an executor won't be alive for more than a fixed amount of time. For example, with AWS Spot nodes, once we get the notification, we know that a node will be gone in 120 seconds. So if the tasks running on the decommissioning executors may run beyond currentTime+120 seconds, they are candidates for speculation.

### Why are the changes needed?

Currently, when an executor is decommissioned, we stop scheduling new tasks on it, but the already running tasks keep running on it. Depending on the cloud, we might know beforehand that an executor won't be alive for more than a preconfigured time. Different cloud providers give different timeouts before they take away the nodes. For example, in the case of AWS Spot nodes, an executor won't be alive for more than 120 seconds. We can utilize this information in cloud environments and make better decisions about speculating the already running tasks on decommissioning executors.

### Does this PR introduce _any_ user-facing change?

Yes. This PR adds a new config `spark.executor.decommission.killInterval`, which users can explicitly set based on the cloud environment where they are running.

### How was this patch tested?

Added UT.

Closes apache#28619 from prakharjain09/SPARK-21040-speculate-decommission-exec-tasks.

Authored-by: Prakhar Jain <prakharjain09@gmail.com>

Signed-off-by: Holden Karau <hkarau@apple.com>
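The speculation rule described in this commit boils down to a single comparison. The sketch below is an assumption-laden illustration rather than the scheduler's real logic: the function name, its parameters, and the idea of passing in an expected task duration are hypothetical, and only the 120-second kill interval comes from the text above.

```python
def should_speculate(task_start_s: float,
                     expected_duration_s: float,
                     decommission_time_s: float,
                     kill_interval_s: float) -> bool:
    """Return True if the task is expected to outlive its decommissioning executor.

    All arguments are in seconds on the same clock. expected_duration_s is an
    assumed estimate (for example, derived from already finished tasks).
    """
    expected_finish_s = task_start_s + expected_duration_s
    executor_gone_at_s = decommission_time_s + kill_interval_s
    return expected_finish_s > executor_gone_at_s


# Executor got its decommission notice at t=150s and will be gone 120s later.
long_task = should_speculate(task_start_s=100, expected_duration_s=300,
                             decommission_time_s=150, kill_interval_s=120)
short_task = should_speculate(task_start_s=100, expected_duration_s=60,
                              decommission_time_s=150, kill_interval_s=120)
print(long_task, short_task)
```

In this example the long task would still be running when the node disappears, so it is a speculation candidate, while the short task is expected to finish in time.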

viirya pushed a commit that referenced this pull request on Dec 23, 2020

…mand

### What changes were proposed in this pull request?

This PR proposes to sort table properties in the DESCRIBE TABLE command. This is consistent with the DSv2 command as well:
https://github.com/apache/spark/blob/e3058ba17cb4512537953eb4ded884e24ee93ba2/sql/core/src/main/scala/org/apache/spark/sql/execution/datasources/v2/DescribeTableExec.scala#L63

This PR also fixes the test case in the Scala 2.13 build, where the table properties have a different order in the map.

### Why are the changes needed?

To keep the output deterministic and pretty, and to fix the tests in the Scala 2.13 build.
See https://amplab.cs.berkeley.edu/jenkins/job/spark-master-test-maven-hadoop-3.2-scala-2.13/49/testReport/junit/org.apache.spark.sql/SQLQueryTestSuite/describe_sql/

```
describe.sql
Expected "...spark_catalog, view.[query.out.col.2=c, view.referredTempFunctionsNames=[], view.catalogAndNamespace.part.1=default]]", but got "...spark_catalog, view.[catalogAndNamespace.part.1=default, view.query.out.col.2=c, view.referredTempFunctionsNames=[]]]" Result did not match for query #29
DESC FORMATTED v
```

### Does this PR introduce _any_ user-facing change?

Yes, it will change the text output from `DESCRIBE [EXTENDED|FORMATTED] table_name`.
Now the table properties are sorted by key.

### How was this patch tested?

Related unittests were fixed accordingly.

Closes apache#30799 from HyukjinKwon/SPARK-33803.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>
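Why sorting fixes the flaky comparison can be shown with plain dictionaries. This is a sketch only: `format_table_properties` is a hypothetical helper, not Spark's implementation, and the property names are borrowed from the failing test output above.

```python
from typing import Dict


def format_table_properties(props: Dict[str, str]) -> str:
    """Render properties as "[k1=v1, k2=v2]" with keys in sorted order."""
    pairs = sorted(props.items(), key=lambda kv: kv[0])
    return "[" + ", ".join(f"{key}={value}" for key, value in pairs) + "]"


# The same properties inserted in two different orders, as two map
# implementations might iterate them.
order_a = {
    "view.query.out.col.2": "c",
    "view.referredTempFunctionsNames": "[]",
    "view.catalogAndNamespace.part.1": "default",
}
order_b = {
    "view.catalogAndNamespace.part.1": "default",
    "view.query.out.col.2": "c",
    "view.referredTempFunctionsNames": "[]",
}

rendered_a = format_table_properties(order_a)
rendered_b = format_table_properties(order_b)
print(rendered_a)
```

Because the keys are sorted before rendering, both inputs produce the same text regardless of the underlying iteration order.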

viirya pushed a commit that referenced this pull request on Dec 24, 2020

…mand

### What changes were proposed in this pull request?

This PR proposes to sort table properties in the DESCRIBE TABLE command. This is consistent with the DSv2 command as well:
https://github.com/apache/spark/blob/e3058ba17cb4512537953eb4ded884e24ee93ba2/sql/core/src/main/scala/org/apache/spark/sql/execution/datasources/v2/DescribeTableExec.scala#L63

This PR also fixes the test case in the Scala 2.13 build, where the table properties have a different order in the map.

### Why are the changes needed?

To keep the output deterministic and pretty, and to fix the tests in the Scala 2.13 build.
See https://amplab.cs.berkeley.edu/jenkins/job/spark-master-test-maven-hadoop-3.2-scala-2.13/49/testReport/junit/org.apache.spark.sql/SQLQueryTestSuite/describe_sql/

```
describe.sql
Expected "...spark_catalog, view.[query.out.col.2=c, view.referredTempFunctionsNames=[], view.catalogAndNamespace.part.1=default]]", but got "...spark_catalog, view.[catalogAndNamespace.part.1=default, view.query.out.col.2=c, view.referredTempFunctionsNames=[]]]" Result did not match for query #29
DESC FORMATTED v
```

### Does this PR introduce _any_ user-facing change?

Yes, it will change the text output from `DESCRIBE [EXTENDED|FORMATTED] table_name`.
Now the table properties are sorted by key.

### How was this patch tested?

Related unittests were fixed accordingly.

Closes apache#30799 from HyukjinKwon/SPARK-33803.

Authored-by: HyukjinKwon <gurwls223@apache.org>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

(cherry picked from commit 7845865)

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

viirya pushed a commit that referenced this pull request on Mar 14, 2022

…aceable

### What changes were proposed in this pull request?

This PR uses a manual recursion to replace `RuntimeReplaceable` expressions instead of `transformAllExpressionsWithPruning`. The problem of `transformAllExpressionsWithPruning` is it will automatically make the replacement expression inherit the function alias name from the parent node, which is quite misleading. For example, `select date_part('month', c) from t`, the optimized plan in EXPLAIN before this PR is

```

Project [date_part(cast(c#18 as date)) AS date_part(month, c)#19]

+- Relation default.t[c#18] parquet

```

Now it's

```

Project [month(cast(c#9 as date)) AS date_part(month, c)#10]

+- Relation default.t[c#9] parquet

```

### Why are the changes needed?

fix misleading EXPLAIN result

### Does this PR introduce _any_ user-facing change?

no

### How was this patch tested?

new test

Closes apache#35821 from cloud-fan/follow2.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Dongjoon Hyun <dongjoon@apache.org>

viirya pushed a commit that referenced this pull request on Feb 28, 2024

…n properly

### What changes were proposed in this pull request?

Make `ResolveRelations` handle plan id properly

### Why are the changes needed?

bug fix for Spark Connect, it won't affect classic Spark SQL

before this PR:

```

from pyspark.sql import functions as sf

spark.range(10).withColumn("value_1", sf.lit(1)).write.saveAsTable("test_table_1")

spark.range(10).withColumnRenamed("id", "index").withColumn("value_2", sf.lit(2)).write.saveAsTable("test_table_2")

df1 = spark.read.table("test_table_1")

df2 = spark.read.table("test_table_2")

df3 = spark.read.table("test_table_1")

join1 = df1.join(df2, on=df1.id==df2.index).select(df2.index, df2.value_2)

join2 = df3.join(join1, how="left", on=join1.index==df3.id)

join2.schema

```

fails with

```

AnalysisException: [CANNOT_RESOLVE_DATAFRAME_COLUMN] Cannot resolve dataframe column "id". It's probably because of illegal references like `df1.select(df2.col("a"))`. SQLSTATE: 42704

```

That is because the existing plan caching in `ResolveRelations` doesn't work with Spark Connect

```

=== Applying Rule org.apache.spark.sql.catalyst.analysis.Analyzer$ResolveRelations ===

'[#12]Join LeftOuter, '`==`('index, 'id) '[#12]Join LeftOuter, '`==`('index, 'id)

!:- '[#9]UnresolvedRelation [test_table_1], [], false :- '[#9]SubqueryAlias spark_catalog.default.test_table_1

!+- '[#11]Project ['index, 'value_2] : +- 'UnresolvedCatalogRelation `spark_catalog`.`default`.`test_table_1`, [], false

! +- '[#10]Join Inner, '`==`('id, 'index) +- '[#11]Project ['index, 'value_2]

! :- '[#7]UnresolvedRelation [test_table_1], [], false +- '[#10]Join Inner, '`==`('id, 'index)

! +- '[#8]UnresolvedRelation [test_table_2], [], false :- '[#9]SubqueryAlias spark_catalog.default.test_table_1

! : +- 'UnresolvedCatalogRelation `spark_catalog`.`default`.`test_table_1`, [], false

! +- '[#8]SubqueryAlias spark_catalog.default.test_table_2

! +- 'UnresolvedCatalogRelation `spark_catalog`.`default`.`test_table_2`, [], false

Can not resolve 'id with plan 7

```

`[#7]UnresolvedRelation [test_table_1], [], false` was wrongly resolved to the cached one

```

:- '[#9]SubqueryAlias spark_catalog.default.test_table_1

+- 'UnresolvedCatalogRelation `spark_catalog`.`default`.`test_table_1`, [], false

```

### Does this PR introduce _any_ user-facing change?

yes, bug fix

### How was this patch tested?

added ut

### Was this patch authored or co-authored using generative AI tooling?

ci

Closes apache#45214 from zhengruifeng/connect_fix_read_join.

Authored-by: Ruifeng Zheng <ruifengz@apache.org>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

viirya pushed a commit that referenced this pull request on Jul 18, 2024

…plan properly

### What changes were proposed in this pull request?

Make `ResolveRelations` handle plan id properly

cherry-pick bugfix apache#45214 to 3.5

### Why are the changes needed?

bug fix for Spark Connect, it won't affect classic Spark SQL

before this PR:

```
from pyspark.sql import functions as sf

spark.range(10).withColumn("value_1", sf.lit(1)).write.saveAsTable("test_table_1")
spark.range(10).withColumnRenamed("id", "index").withColumn("value_2", sf.lit(2)).write.saveAsTable("test_table_2")

df1 = spark.read.table("test_table_1")
df2 = spark.read.table("test_table_2")
df3 = spark.read.table("test_table_1")

join1 = df1.join(df2, on=df1.id==df2.index).select(df2.index, df2.value_2)
join2 = df3.join(join1, how="left", on=join1.index==df3.id)

join2.schema
```

fails with

```
AnalysisException: [CANNOT_RESOLVE_DATAFRAME_COLUMN] Cannot resolve dataframe column "id". It's probably because of illegal references like `df1.select(df2.col("a"))`. SQLSTATE: 42704
```

That is because the existing plan caching in `ResolveRelations` doesn't work with Spark Connect

```
=== Applying Rule org.apache.spark.sql.catalyst.analysis.Analyzer$ResolveRelations ===
'[#12]Join LeftOuter, '`==`('index, 'id) '[#12]Join LeftOuter, '`==`('index, 'id)
!:- '[#9]UnresolvedRelation [test_table_1], [], false :- '[#9]SubqueryAlias spark_catalog.default.test_table_1
!+- '[#11]Project ['index, 'value_2] : +- 'UnresolvedCatalogRelation `spark_catalog`.`default`.`test_table_1`, [], false
! +- '[#10]Join Inner, '`==`('id, 'index) +- '[#11]Project ['index, 'value_2]
! :- '[#7]UnresolvedRelation [test_table_1], [], false +- '[#10]Join Inner, '`==`('id, 'index)
! +- '[#8]UnresolvedRelation [test_table_2], [], false :- '[#9]SubqueryAlias spark_catalog.default.test_table_1
! : +- 'UnresolvedCatalogRelation `spark_catalog`.`default`.`test_table_1`, [], false
! +- '[#8]SubqueryAlias spark_catalog.default.test_table_2
! +- 'UnresolvedCatalogRelation `spark_catalog`.`default`.`test_table_2`, [], false

Can not resolve 'id with plan 7
```

`[#7]UnresolvedRelation [test_table_1], [], false` was wrongly resolved to the cached one

```
:- '[#9]SubqueryAlias spark_catalog.default.test_table_1
+- 'UnresolvedCatalogRelation `spark_catalog`.`default`.`test_table_1`, [], false
```

### Does this PR introduce _any_ user-facing change?

yes, bug fix

### How was this patch tested?

added ut

### Was this patch authored or co-authored using generative AI tooling?

ci

Closes apache#46291 from zhengruifeng/connect_fix_read_join_35.

Authored-by: Ruifeng Zheng <ruifengz@apache.org>

Signed-off-by: Ruifeng Zheng <ruifengz@apache.org>

viirya pushed a commit that referenced this pull request on Aug 24, 2024

…plan properly

### What changes were proposed in this pull request?

Make `ResolveRelations` handle plan id properly

cherry-pick bugfix apache#45214 to 3.4

### Why are the changes needed?

bug fix for Spark Connect, it won't affect classic Spark SQL

before this PR:

```
from pyspark.sql import functions as sf

spark.range(10).withColumn("value_1", sf.lit(1)).write.saveAsTable("test_table_1")
spark.range(10).withColumnRenamed("id", "index").withColumn("value_2", sf.lit(2)).write.saveAsTable("test_table_2")

df1 = spark.read.table("test_table_1")
df2 = spark.read.table("test_table_2")
df3 = spark.read.table("test_table_1")

join1 = df1.join(df2, on=df1.id==df2.index).select(df2.index, df2.value_2)
join2 = df3.join(join1, how="left", on=join1.index==df3.id)

join2.schema
```

fails with

```
AnalysisException: [CANNOT_RESOLVE_DATAFRAME_COLUMN] Cannot resolve dataframe column "id". It's probably because of illegal references like `df1.select(df2.col("a"))`. SQLSTATE: 42704
```

That is because the existing plan caching in `ResolveRelations` doesn't work with Spark Connect

```
=== Applying Rule org.apache.spark.sql.catalyst.analysis.Analyzer$ResolveRelations ===
'[#12]Join LeftOuter, '`==`('index, 'id) '[#12]Join LeftOuter, '`==`('index, 'id)
!:- '[#9]UnresolvedRelation [test_table_1], [], false :- '[#9]SubqueryAlias spark_catalog.default.test_table_1
!+- '[#11]Project ['index, 'value_2] : +- 'UnresolvedCatalogRelation `spark_catalog`.`default`.`test_table_1`, [], false
! +- '[#10]Join Inner, '`==`('id, 'index) +- '[#11]Project ['index, 'value_2]
! :- '[#7]UnresolvedRelation [test_table_1], [], false +- '[#10]Join Inner, '`==`('id, 'index)
! +- '[#8]UnresolvedRelation [test_table_2], [], false :- '[#9]SubqueryAlias spark_catalog.default.test_table_1
! : +- 'UnresolvedCatalogRelation `spark_catalog`.`default`.`test_table_1`, [], false
! +- '[#8]SubqueryAlias spark_catalog.default.test_table_2
! +- 'UnresolvedCatalogRelation `spark_catalog`.`default`.`test_table_2`, [], false

Can not resolve 'id with plan 7
```

`[#7]UnresolvedRelation [test_table_1], [], false` was wrongly resolved to the cached one

```
:- '[#9]SubqueryAlias spark_catalog.default.test_table_1
+- 'UnresolvedCatalogRelation `spark_catalog`.`default`.`test_table_1`, [], false
```

### Does this PR introduce _any_ user-facing change?

yes, bug fix

### How was this patch tested?

added ut

### Was this patch authored or co-authored using generative AI tooling?

ci

Closes apache#46290 from zhengruifeng/connect_fix_read_join_34.

Authored-by: Ruifeng Zheng <ruifengz@apache.org>

Signed-off-by: Ruifeng Zheng <ruifengz@apache.org>
