router filter: implement hedge_on_per_try_timeout.#6228

router filter: implement hedge_on_per_try_timeout.#6228snowp merged 81 commits intoenvoyproxy:masterfrom

Conversation

Implements the hedge_on_per_try_timeout option in HedgePolicy config which instructs the router filter to not cancel requests that hit the per try timeout before sending a retry. This means the router must be able to manage multiple simultaneous upstream requests and handle deciding which response "wins" and canceling any other in flight requests. Finishes envoyproxy#5841. Signed-off-by: Michael Puncel <mpuncel@squareup.com>

563bc05 to

4ab0641

Compare

| RetryStatus RetryStateImpl::shouldHedgeRetryPerTryTimeout(DoRetryCallback callback) { | ||

| // A hedged retry on per try timeout is always retried if there are retries | ||

| // left. NOTE: this is different than non-hedged per try timeouts which are only retried | ||

| // if RETRY_ON_5XX or RETRY_ON_GATEWAY_ERROR |

There was a problem hiding this comment.

This is one place I wasn't sure if this is the right thing to do. There was some discussion on #5841 I'm not caught up on yet

| // buffer limit we give up on retries and buffering. We must buffer using addDecodedData() | ||

| // so that all buffered data is available by the time we do request complete processing and | ||

| // potentially shadow. | ||

| callbacks_->addDecodedData(data, true); |

There was a problem hiding this comment.

I'm assuming we only want to call this once rather than per upstream request

source/common/router/router.cc

Outdated

|

|

||

| // Possible that we got an immediate reset. | ||

| if (upstream_request_) { | ||

| bool any_upstreams = false; |

There was a problem hiding this comment.

This logic is a bit funky and I'm not sure what the original intention of the guard against upstream reset is for shadowing, in particular the "riskier" part. Could use some context

source/common/router/router.cc

Outdated

| // If we have not yet sent anything downstream, send a response with an appropriate status code. | ||

| // Otherwise just reset the ongoing response. | ||

| if (downstream_response_started_) { | ||

| if (upstream_request_ != nullptr && upstream_request_->grpc_rq_success_deferred_) { |

There was a problem hiding this comment.

had to decompose onUpstreamAbort a bit more because some of it should run per-upstream and some is once. Only affects global timeout where we might be canceling multiple upstream requests at once

source/common/router/router.cc

Outdated

| void Filter::onUpstreamReset(Http::StreamResetReason reset_reason) { | ||

| ASSERT(upstream_request_); | ||

| void Filter::onUpstreamReset(Http::StreamResetReason reset_reason, | ||

| UpstreamRequest* upstream_request) { |

There was a problem hiding this comment.

Let me know if it's weird to be using raw pointers here, alternatively could make UpstreamRequest a shared pointer (it's a unique pointer)

There was a problem hiding this comment.

You can probably pass a ref instead since it won't ever be null

source/common/router/router.cc

Outdated

| const RetryStatus retry_status = | ||

| // Check if this upstream request was already retried, for instance after | ||

| // hitting a per try timeout. Don't retry it if we already have. | ||

| if (retry_state_ && !upstream_request->retried_) { |

There was a problem hiding this comment.

realized I can use another unit test that makes sure we don't retry the same upstream request twice (once for per try timeout and then again for a 5xx for example).

|

I'd like some integration tests for this as well, but will probably wait until Matt is done with the one he's writing so I can use it as a guide. |

Signed-off-by: Michael Puncel <mpuncel@squareup.com>

|

working through the build failures and will add more tests today |

Signed-off-by: Michael Puncel <mpuncel@squareup.com>

* master: token bucket: several fixes (envoyproxy#6235) config: move logging of full response to trace logging (envoyproxy#6226) mysql_filter: add a warning about compatibility (envoyproxy#6234) upstream: add transport socket failure reason to stream info and log (envoyproxy#6018) IoHandle readv and writev (envoyproxy#6037) Signed-off-by: Michael Puncel <mpuncel@squareup.com>

Signed-off-by: Michael Puncel <mpuncel@squareup.com>

Signed-off-by: Michael Puncel <mpuncel@squareup.com>

This required exposing wouldRetryFromHeaders in the retry state impl API so that the router can know if a response is "good" or not without actually scheduling a retry. If the response is "bad", it checks if there are any pending upstream requests that have not seen headers yet. If there are not, then it returns the response downstream. Signed-off-by: Michael Puncel <mpuncel@squareup.com>

Signed-off-by: Michael Puncel <mpuncel@squareup.com>

Signed-off-by: Michael Puncel <mpuncel@squareup.com>

Signed-off-by: Michael Puncel <mpuncel@squareup.com>

Signed-off-by: Michael Puncel <mpuncel@squareup.com>

…emoved Signed-off-by: Michael Puncel <mpuncel@squareup.com>

Signed-off-by: Michael Puncel <mpuncel@squareup.com>

|

This is no longer WIP and ready for comment |

Resets didn't have the same handling that upstream headers had. In particular, a reset would have always returned early even if other requests were in flight, and we might retry the same request twice if there was first a per try timeout and then a reset. Signed-off-by: Michael Puncel <mpuncel@squareup.com>

Signed-off-by: Michael Puncel <mpuncel@squareup.com>

Signed-off-by: Michael Puncel <mpuncel@squareup.com>

8fa6ad9 to

67b7fa9

Compare

mattklein123

left a comment

mattklein123

left a comment

There was a problem hiding this comment.

I'm not going to lie this change is petrifying, but at a high level it looks great. I have a few small remaining comments. @snowp any further comments?

/wait

source/common/router/router.cc

Outdated

|

|

||

| FilterUtility::HedgingParams FilterUtility::finalHedgingParams(const RouteEntry& route, | ||

| Http::HeaderMap& request_headers) { | ||

| HedgingParams hedgingParams; |

| Http::FilterTrailersStatus Filter::decodeTrailers(Http::HeaderMap& trailers) { | ||

| ENVOY_STREAM_LOG(debug, "router decoding trailers:\n{}", *callbacks_, trailers); | ||

| downstream_trailers_ = &trailers; | ||

| ASSERT(upstream_requests_.size() == 1); |

There was a problem hiding this comment.

Doesn't the same ASSERT/explanation apply as you wrote in decodeData()? Can we be consistent?

| static_cast<Http::Code>(response_code)); | ||

| upstream_host->stats().rq_error_.inc(); | ||

| return; | ||

| } else if (retry_status == RetryStatus::NoOverflow) { |

There was a problem hiding this comment.

This logic seems largely duplicated in onSoftPerTryTimeout. Is there any sharing we can do here?

There was a problem hiding this comment.

it's enough different that' it'd be difficult. We call a different retry state function, reset the stream on headers but not soft per try timeout, charge http code on headers and not soft per try timeout, and also have the could_not_retry logic which tells us whether we're done (e.g. this was the last in-flight attempt and we should return the "bad" response downstream) vs need to wait for other attempts to potentially get headers back.

I'll take a look into deduplicating the retry handing onSoftPerTryTimeout with maybeRetryReset though, those look similar enough.

There was a problem hiding this comment.

after looking at it, it's probably not worth it because you end up with a nested lambda situation (one calls shouldRetryReset, one calls shouldRetryHedgedPerTryTimeout and both need to provide the doRetry() lambda)

source/common/router/router.cc

Outdated

| } | ||

|

|

||

| callbacks_->encodeData(data, end_stream); | ||

| if (final_upstream_request_ == &upstream_request) { |

There was a problem hiding this comment.

We need this block because if this isn't the final request, that upstream stream might have just been reset, right? Same below for trailers? Can you add a comment about this? Presumably we don't need any deferred delete behavior here to handle this since we are only looking at pointer values?

There was a problem hiding this comment.

Looking at this again, I don't think we need this block because when we set final_upstream_request_ we reset all other upstreams which also removes the callbacks. We might not need final_upstream_request_ at all actually for that reason

source/common/router/router.cc

Outdated

| upstream_requests_.front()->encodeHeaders(!callbacks_->decodingBuffer() && !downstream_trailers_); | ||

| // It's possible we got immediately reset. | ||

| if (upstream_requests_.size() == 1) { | ||

| if (upstream_requests_.front()) { |

There was a problem hiding this comment.

nit: !upstream_requests_.empty() ?

snowp

left a comment

snowp

left a comment

There was a problem hiding this comment.

Looks pretty good, nothing obviously wrong to me.

source/common/router/router.cc

Outdated

| } | ||

| resetStream(); | ||

|

|

||

| // Set response flag to UT for now, but it might be overwritten if a |

There was a problem hiding this comment.

setResponseFlag ORs together each passed value, subsequent calls won't overwrite previous calls.

source/common/router/router.cc

Outdated

| // There are two states we should get this callback in: 1) the watermark was | ||

| // hit due to writes from a different filter instance over a shared | ||

| // downstream connection, or 2) the watermark was hit due to THIS filter | ||

| // instance due to writing back the "winning" upstream request. In either |

There was a problem hiding this comment.

nit: due to is repeated twice

source/common/router/router.cc

Outdated

| } | ||

|

|

||

| uint32_t Filter::numRequestsAwaitingHeaders() { | ||

| uint32_t ret = 0; |

There was a problem hiding this comment.

you can use std::count_if here

| buffer_len = encoder_filters_[1]->callbacks_->encodingBuffer()->length(); | ||

| EXPECT_CALL(callbacks, onBelowWriteBufferLowWatermark()); | ||

| EXPECT_CALL(callbacks2, onBelowWriteBufferLowWatermark()); | ||

| EXPECT_CALL(callbacks2, onBelowWriteBufferLowWatermark()).Times(0); |

There was a problem hiding this comment.

Can you just remove this line if you don't expect it to be called?

There was a problem hiding this comment.

I think I need that to make sure it's not called?

There was a problem hiding this comment.

As long as the mock is not a NiceMock it will fail the test with "uninteresting function called on ..." if you haven't added an expectation for it

There is possibly some value in being visually explicit in this assertion but it's not necessary afaik

Signed-off-by: Michael Puncel <mpuncel@squareup.com>

…t on calling encodeData on the wrong upstream_request Signed-off-by: Michael Puncel <mpuncel@squareup.com>

Signed-off-by: Michael Puncel <mpuncel@squareup.com>

* master: (65 commits) proto: Add PATCH method to RequestMethod enum (envoyproxy#6737) exe: drop unused deps on zlib compressor code (envoyproxy#7022) coverage: fix some misc coverage (envoyproxy#7033) Enable proto schema for router_check_tool (envoyproxy#6992) stats: rework stat sink flushing to centralize counter latching (envoyproxy#6996) [test] convert lds api test config stubs to v2 (envoyproxy#7021) router: scoped rds (2c): implement scoped rds API (envoyproxy#6932) build: Add option for size-optimized binary (envoyproxy#6960) test: adding an integration test framework for file-based LDS (envoyproxy#6933) doc: update obsolete ref to api/XDS_PROTOCOL.md (envoyproxy#7002) dispatcher: faster runOnAllThreads (envoyproxy#7011) example: add csrf sandbox (envoyproxy#6805) fix syntax of gcov exclusion zone. (envoyproxy#7023) /runtime_modify: add support for query params in body (envoyproxy#6977) stats: Create stats for http codes with the symbol table. (envoyproxy#6733) health check: fix more fallout from inline deletion change (envoyproxy#6988) Max heap fix (envoyproxy#7016) Add support to unregister from lifecycle notifications (envoyproxy#6984) build spdy_core_alt_svc_wire_format (envoyproxy#7010) ext_authz: Make sure initiateCall only called once (envoyproxy#6949) ... Signed-off-by: Michael Puncel <mpuncel@squareup.com>

Signed-off-by: Michael Puncel <mpuncel@squareup.com>

|

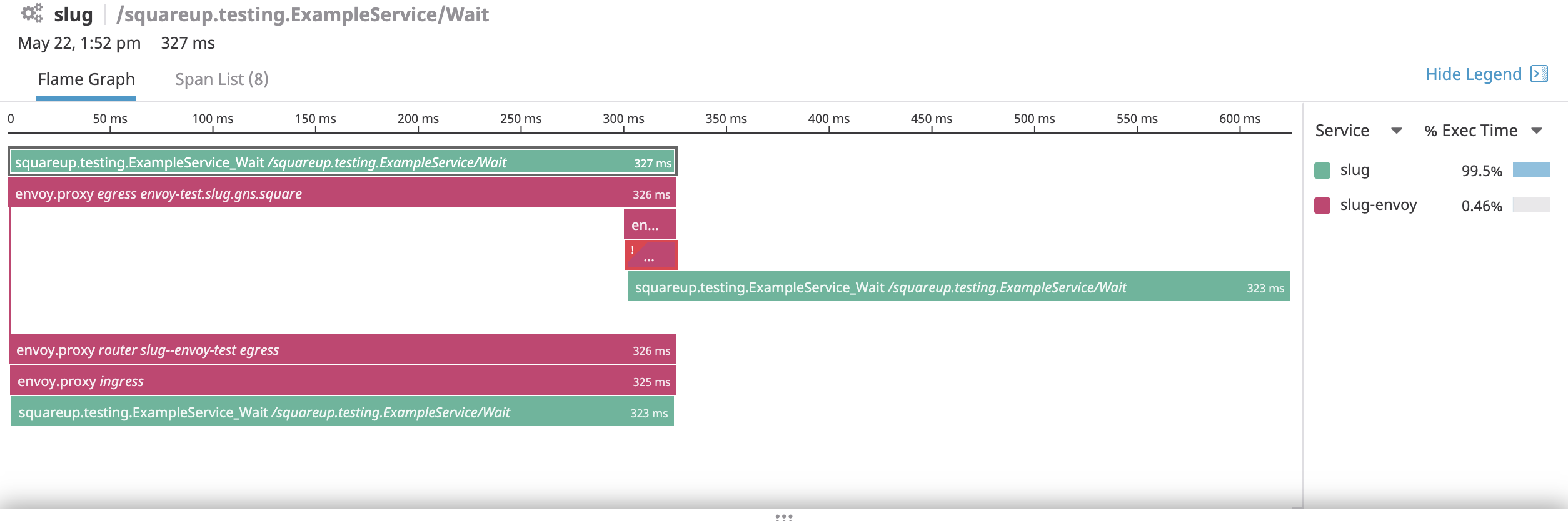

just merged master again and addressed PR feedback. I also got a screenshot of tracing showing hedging working for a real gRPC request at Square. It shows an app calling itself with a 300ms per try timeout and the server takes longer than that. It looks a bit odd because the 2 spans have the same parent but don't start at the same time, and then that one server span is long because cancellation wasn't handled (it just does a |

mattklein123

left a comment

mattklein123

left a comment

There was a problem hiding this comment.

Thanks, this is great work with very thorough test coverage. Looks like you need a clang-tidy fix though?

/wait-any

Additionally moves the handling of in-flight upstream requests out of cleanup() and into the various applicable places of the request lifecycle. Hopefully this makes it clear what the cycle is instead of having a cleanup() function that hands a various number of states. Signed-off-by: Michael Puncel <mpuncel@squareup.com>

* master: test: Add coverage for IsolatedStoreImpl::find* (envoyproxy#7043) server: add ProcessContext (envoyproxy#7018) config: Implement both versions of onConfigUpdate() everywhere (envoyproxy#6879) gzip: add test for various compression strategy and level (envoyproxy#7055) Fix typo in comment for rds.RouteConfiguration.validate_clusters (envoyproxy#7056) mysql_filter: add handling for partial messages (envoyproxy#6885) migrate from v2alpha to v2 (envoyproxy#7044) tests: fix tsan test flake (envoyproxy#7052) upstream: fix HostUtility::healthFlagsToString (envoyproxy#7051) tech debt: eliminate absl::make_unique (envoyproxy#7034) router: add a route name field in route.Route list (envoyproxy#6776) ext_authz: configurable HTTP status code for network errors. (envoyproxy#6669) stats: remove const-cast for symbol-table in edcs_filter_test.cc (envoyproxy#7045) build: bump libevent to 3b1864b. (envoyproxy#7012) stats: improve test-coverage for a few stats-related functions. (envoyproxy#7038) docs: fix csrf filter source origin note (envoyproxy#7041) Fix common typo: grcp -> grpc (envoyproxy#7040) snapshot (envoyproxy#7036) Signed-off-by: Michael Puncel <mpuncel@squareup.com>

Signed-off-by: Michael Puncel <mpuncel@squareup.com>

|

Looks like CI issues. Note also that docs is broken on master right now so watch for a fix for that. /wait |

Signed-off-by: Michael Puncel <mpuncel@squareup.com>

Signed-off-by: Michael Puncel <mpuncel@squareup.com>

mattklein123

left a comment

mattklein123

left a comment

There was a problem hiding this comment.

Very awesome work. Will defer to @snowp for final review and merge. Thank you!

snowp

left a comment

snowp

left a comment

There was a problem hiding this comment.

LGTM! Really exciting to see this land

Implements the hedge_on_per_try_timeout option in HedgePolicy config

which instructs the router filter to not cancel requests that hit the

per try timeout before sending a retry. This means the router must be

able to manage multiple simultaneous upstream requests and handle

deciding which response "wins" and canceling any other in flight

requests.

Finishes #5841.

Description: Implements request hedging (racing) in response to a per try timeout in the router filter.

Risk Level: High

Testing: Unit/Integration tests

Docs Changes: Added a request hedging section to the HTTP routing arch overview in addition to proto-level docs.

Release Notes: updated

Fixes #5841