[HUDI-1624] The state based index should bootstrap from existing base… #2581

Codecov Report

| Coverage Diff | master | #2581 | +/- |
|---|---|---|---|
| Coverage | 51.14% | 9.69% | -41.46% |
| Complexity | 3215 | 48 | -3167 |
| Files | 438 | 53 | -385 |
| Lines | 20041 | 1929 | -18112 |
| Branches | 2064 | 230 | -1834 |
| Hits | 10250 | 187 | -10063 |
| Misses | 8946 | 1729 | -7217 |
| Partials | 845 | 13 | -832 |

@lamber-ken You can also help to review this PR, if you would like to do it.

Yeah. By the way, big thanks to @danny0405 for RFC-24 👍

There was a problem hiding this comment.

Choose a reason for hiding this comment

The reason will be displayed to describe this comment to others. Learn more.

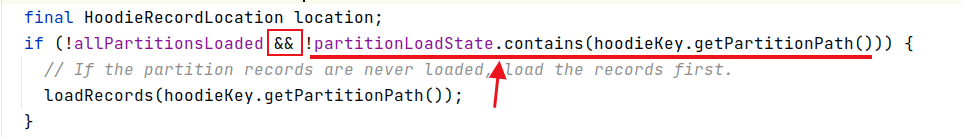

The allPartitionsLoaded member variable seems redundant; can we use only partitionLoadState?

No, the allPartitionsLoaded flag is a shortcut: once it is set, there is no need to query the state, which is not very efficient.

By the way, allPartitionPath is only initialized in the BucketAssignFunction#open method; it seems allPartitionPath also needs to be updated?

We only need to ensure the initial partitions are loaded successfully; new input data will trigger an index update if it brings new data partitions.

0: it would be better to use a static constant instead.

It is okay because only one code snippet uses it.

The 0 is meaningless here; it may not be intuitive for beginners.

maybe put a boolean?

> The 0 is meaningless here

It is meaningless anyway, because Flink does not have Set state.
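The exchange above is about the placeholder value stored next to each partition path: Flink offers a map-typed keyed state but no set-typed one, so a set is emulated with a map whose values are ignored. A minimal plain-Java sketch of that idea follows, with `java.util.Map` standing in for the Flink state; the class name `PartitionLoadSet` and the constant `PRESENT` are hypothetical and do not come from the PR.

```java
import java.util.HashMap;
import java.util.Map;

/** Hypothetical stand-in for a "set" kept in a map-typed state. */
class PartitionLoadSet {
    // Named placeholder: the value is never read, only the presence of the key matters.
    private static final Integer PRESENT = 0;

    // java.util.Map is an assumption standing in for a map-typed Flink state.
    private final Map<String, Integer> state = new HashMap<>();

    /** Records that the given partition has been loaded into the index. */
    void markLoaded(String partitionPath) {
        state.put(partitionPath, PRESENT);
    }

    /** Returns true if the partition was marked as loaded before. */
    boolean isLoaded(String partitionPath) {
        return state.containsKey(partitionPath);
    }

    /** Number of distinct partitions marked as loaded. */
    int size() {
        return state.size();
    }
}
```

Whether the placeholder is a named `0` or a boolean, the reader sees from the constant name that the value carries no information, which is the readability concern raised in the thread.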

The checkPartitionsLoaded() method seems redundant, as with allPartitionsLoaded.

IIUC, this seems necessary because we don't update partitionLoadState when we see a new partition in the upcoming records, so we need to check after each commit. Otherwise, we would need to update partitionLoadState together with indexState.

garyli1019 left a comment

Overall LGTM. Left some minor comments.

Can we use this.hadoopConf? getHadoopConf() seems to be called twice.

Shall we use this in getLatestBaseFilesForAllPartitions to avoid duplicate code?

I think we could.

It's good that getLatestBaseFilesForPartition was extracted from getLatestBaseFilesForAllPartitions.

Current codebase:

    public static List<HoodieBaseFile> getLatestBaseFilesForPartition(
        final String partition,
        final HoodieTable hoodieTable) {
      Option<HoodieInstant> latestCommitTime = hoodieTable.getMetaClient().getCommitsTimeline()
          .filterCompletedInstants().lastInstant();
      if (latestCommitTime.isPresent()) {
        return hoodieTable.getBaseFileOnlyView()
            .getLatestBaseFilesBeforeOrOn(partition, latestCommitTime.get().getTimestamp())
            .collect(toList());
      }
      return Collections.emptyList();
    }

Maybe the following implementation is more efficient:

    public static List<HoodieBaseFile> getLatestBaseFilesForPartition(
        final String partition,
        final HoodieTable hoodieTable) {
      return hoodieTable.getFileSystemView()
          .getAllFileGroups(partition)
          .map(HoodieFileGroup::getLatestDataFile)
          .filter(Option::isPresent)
          .map(Option::get)
          .collect(toList());
    }

refer to SparkHoodieBackedTableMetadataWriter#prepRecords

Agreed, there is no need to decide and compare the instant time here, but I would not make that change in this PR because it is unrelated.

You can propose it in a separate JIRA issue.

fs is never used; we can remove it.

Already removed

hi @danny0405 is it necessary to revert

I will do it in another patch, thanks.

Force-pushed from ba652e8 to 66804a6.

@danny0405 could you please check the CI?

This should not be caused by this PR; I will re-trigger the tests.

we can use getRuntimeContext().getMaxNumberOfParallelSubtasks()

… files

The index should bootstrap from existing base files if the files exist. In the design, we load all the keys for one partition when a key is not found in the index during `processElement`; if the partition has many records, the processing may block and trigger back pressure. Once all the records are loaded, we only need to check the state each time a record is tagged.

yanghua left a comment

LGTM

Thanks @danny0405 for the base index patch; maybe there are some points to think about later 👍

Thanks for the new ideas if you have some, and contributions are welcome ~

    final int maxParallelism = getRuntimeContext().getMaxNumberOfParallelSubtasks();
    final int taskID = getRuntimeContext().getIndexOfThisSubtask();
    // reference: org.apache.flink.streaming.api.datastream.KeyedStream
    this.initialPartitionsToLoad = allPartitionPaths.stream()

    if (context.isRestored()) {
      checkPartitionsLoaded();
    }

When restored from a checkpoint, initialPartitionsToLoad has not been initialized yet.
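To illustrate the ordering problem reported here, the following is a rough plain-Java sketch, not the PR's code. It assumes that the filter truncated after `allPartitionPaths.stream()` assigns partitions to subtasks in the same way keyed streams map keys to key groups and key groups to subtasks (`keyGroup * parallelism / maxParallelism`). The class `SubtaskPartitions`, its methods, and the simple `floorMod` hash (Flink applies a murmur hash instead) are all hypothetical. Computing the set lazily means a restore-time check cannot observe an uninitialized field, whichever lifecycle hook runs first.

```java
import java.util.List;
import java.util.Set;
import java.util.stream.Collectors;

/** Hypothetical model of a subtask that owns a subset of the table partitions. */
class SubtaskPartitions {
    private final List<String> allPartitionPaths;
    private final int maxParallelism;
    private final int parallelism;
    private final int taskId;
    private Set<String> initialPartitionsToLoad; // computed on first use

    SubtaskPartitions(List<String> allPartitionPaths, int maxParallelism,
                      int parallelism, int taskId) {
        this.allPartitionPaths = allPartitionPaths;
        this.maxParallelism = maxParallelism;
        this.parallelism = parallelism;
        this.taskId = taskId;
    }

    /** Simplified stand-in hash; an assumption, not Flink's actual key-group hashing. */
    static int keyGroupOf(String key, int maxParallelism) {
        return Math.floorMod(key.hashCode(), maxParallelism);
    }

    /** Maps a key group in [0, maxParallelism) to a subtask index in [0, parallelism). */
    static int subtaskOf(int keyGroup, int maxParallelism, int parallelism) {
        return keyGroup * parallelism / maxParallelism;
    }

    /** Safe to call from a restore hook or from open(), in either order. */
    Set<String> initialPartitionsToLoad() {
        if (initialPartitionsToLoad == null) {
            initialPartitionsToLoad = allPartitionPaths.stream()
                .filter(p -> subtaskOf(keyGroupOf(p, maxParallelism),
                                       maxParallelism, parallelism) == taskId)
                .collect(Collectors.toSet());
        }
        return initialPartitionsToLoad;
    }

    /** True once every partition owned by this subtask has been loaded. */
    boolean allLoaded(Set<String> loadedPartitions) {
        return loadedPartitions.containsAll(initialPartitionsToLoad());
    }
}
```

Each partition path lands on exactly one subtask, so the union of the per-subtask sets covers the whole table without overlap.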

Yes, you are welcome to file a fix and add test cases.

…OSS master Summary: [HUDI-1509]: Reverting LinkedHashSet changes to combine fields from oldSchema and newSchema in favor of using only new schema for record rewriting (apache#2424) [MINOR] Bumping snapshot version to 0.7.0 (apache#2435) [HUDI-1533] Make SerializableSchema work for large schemas and add ability to sortBy numeric values (apache#2453) [HUDI-1529] Add block size to the FileStatus objects returned from metadata table to avoid too many file splits (apache#2451) [HUDI-1532] Fixed suboptimal implementation of a magic sequence search (apache#2440) [HUDI-1535] Fix 0.7.0 snapshot (apache#2456) [MINOR] Fixing setting defaults for index config (apache#2457) [HUDI-1540] Fixing commons codec shading in spark bundle (apache#2460) [HUDI 1308] Harden RFC-15 Implementation based on production testing (apache#2441) [MINOR] Remove redundant judgments (apache#2466) [MINOR] Fix dataSource cannot use hoodie.datasource.hive_sync.auto_create_database (apache#2444) [MINOR] Disabling problematic tests temporarily to stabilize CI (apache#2468) [MINOR] Make a separate travis CI job for hudi-utilities (apache#2469) [HUDI-1512] Fix spark 2 unit tests failure with Spark 3 (apache#2412) [HUDI-1511] InstantGenerateOperator support multiple parallelism (apache#2434) [HUDI-1332] Introduce FlinkHoodieBloomIndex to hudi-flink-client (apache#2375) [HUDI] Add bloom index for hudi-flink-client [MINOR] Remove InstantGeneratorOperator parallelism limit in HoodieFlinkStreamer and update docs (apache#2471) [MINOR] Improve code readability,remove the continue keyword (apache#2459) [HOTFIX] Revert upgrade flink verison to 1.12.0 (apache#2473) [HUDI-1453] Fix NPE using HoodieFlinkStreamer to etl data from kafka to hudi (apache#2474) [MINOR] Use skipTests flag for skip.hudi-spark2.unit.tests property (apache#2477) [HUDI-1476] Introduce unit test infra for java client (apache#2478) [MINOR] Update doap with 0.7.0 release (apache#2491) [MINOR]Fix NPE when using HoodieFlinkStreamer with multi 
parallelism (apache#2492) [HUDI-1234] Insert new records to data files without merging for "Insert" operation. (apache#2111) [MINOR] Add Jira URL and Mailing List (apache#2404) [HUDI-1522] Add a new pipeline for Flink writer (apache#2430) [HUDI-1522] Add a new pipeline for Flink writer [HUDI-623] Remove UpgradePayloadFromUberToApache (apache#2455) [HUDI-1555] Remove isEmpty to improve clustering execution performance (apache#2502) [HUDI-1266] Add unit test for validating replacecommit rollback (apache#2418) [MINOR] Quickstart.generateUpdates method add check (apache#2505) [HUDI-1519] Improve minKey/maxKey computation in HoodieHFileWriter (apache#2427) [HUDI-1550] Honor ordering field for MOR Spark datasource reader (apache#2497) [MINOR] Fix method comment typo (apache#2518) [MINOR] Rename FileSystemViewHandler to RequestHandler and corrected the class comment (apache#2458) [HUDI-1335] Introduce FlinkHoodieSimpleIndex to hudi-flink-client (apache#2271) [HUDI-1523] Call mkdir(partition) only if not exists (apache#2501) [HUDI-1538] Try to init class trying different signatures instead of checking its name (apache#2476) [HUDI-1538] Try to init class trying different signatures instead of checking its name. 
[HUDI-1547] CI intermittent failure: TestJsonStringToHoodieRecordMapF… (apache#2521) [MINOR] Fixing the default value for source ordering field for payload config (apache#2516) [HUDI-1420] HoodieTableMetaClient.getMarkerFolderPath works incorrectly on windows client with hdfs server for wrong file seperator (apache#2526) [HUDI-1571] Adding commit_show_records_info to display record sizes for commit (apache#2514) [HUDI-1589] Fix Rollback Metadata AVRO backwards incompatiblity (apache#2543) [MINOR] Fix wrong logic for checking state condition (apache#2524) [HUDI-1557] Make Flink write pipeline write task scalable (apache#2506) [HUDI-1545] Add test cases for INSERT_OVERWRITE Operation (apache#2483) [HUDI-1603] fix DefaultHoodieRecordPayload serialization failure (apache#2556) [MINOR] Fix the wrong comment for HoodieJavaWriteClientExample (apache#2559) [HUDI-1526] Translate the api partitionBy in spark datasource to hoodie.datasource.write.partitionpath.field (apache#2431) [HUDI-1612] Fix write test flakiness in StreamWriteITCase (apache#2567) [HUDI-1612] Fix write test flakiness in StreamWriteITCase [MINOR] Default to empty list for unset datadog tags property (apache#2574) [MINOR] Add clustering to feature list (apache#2568) [HUDI-1598] Write as minor batches during one checkpoint interval for the new writer (apache#2553) [HUDI-1109] Support Spark Structured Streaming read from Hudi table (apache#2485) [HUDI-1621] Gets the parallelism from context when init StreamWriteOperatorCoordinator (apache#2579) [HUDI-1381] Schedule compaction based on time elapsed (apache#2260) [HUDI-1582] Throw an exception when syncHoodieTable() fails, with RuntimeException (apache#2536) [HUDI-1539] Fix bug in HoodieCombineRealtimeRecordReader with reading empty iterators (apache#2583) [HUDI-1315] Adding builder for HoodieTableMetaClient initialization (apache#2534) [HUDI-1486] Remove inline inflight rollback in hoodie writer (apache#2359) [HUDI-1586] [Common Core] [Flink Integration] Reduce 
the coupling of hadoop. (apache#2540) [HUDI-1624] The state based index should bootstrap from existing base files (apache#2581) [HUDI-1477] Support copyOnWriteTable in java client (apache#2382) [MINOR] Ensure directory exists before listing all marker files. (apache#2594) [MINOR] hive sync checks for table after creating db if auto create is true (apache#2591) [HUDI-1620] Add azure pipelines configs (apache#2582) [HUDI-1347] Fix Hbase index to make rollback synchronous (via config) (apache#2188) [HUDI-1637] Avoid to rename for bucket update when there is only one flush action during a checkpoint (apache#2599) [HUDI-1638] Some improvements to BucketAssignFunction (apache#2600) [HUDI-1367] Make deltaStreamer transition from dfsSouce to kafkasouce (apache#2227) [HUDI-1269] Make whether the failure of connect hive affects hudi ingest process configurable (apache#2443) [HUDI-1611] Added a configuration to allow specific directories to be filtered out during Metadata Table bootstrap. (apache#2565) [Hudi-1583]: Fix bug that Hudi will skip remaining log files if there is logFile with zero size in logFileList when merge on read. (apache#2584) [HUDI-1632] Supports merge on read write mode for Flink writer (apache#2593) [HUDI-1540] Fixing commons codec dependency in bundle jars (apache#2562) [HUDI-1644] Do not delete older rollback instants as part of rollback. Archival can take care of removing old instants cleanly (apache#2610) [HUDI-1634] Re-bootstrap metadata table when un-synced instants have been archived. (apache#2595) [HUDI-1584] Modify maker file path, which should start with the target base path. (apache#2539) [MINOR] Fix default value for hoodie.deltastreamer.source.kafka.auto.reset.offsets (apache#2617) [HUDI-1553] Configuration and metrics for the TimelineService. 
(apache#2495) [HUDI-1587] Add latency and freshness support (apache#2541) [HUDI-1647] Supports snapshot read for Flink (apache#2613) [HUDI-1646] Provide mechanism to read uncommitted data through InputFormat (apache#2611) [HUDI-1655] Support custom date format and fix unsupported exception in DatePartitionPathSelector (apache#2621) [HUDI-1636] Support Builder Pattern To Build Table Properties For HoodieTableConfig (apache#2596) [HUDI-1660] Excluding compaction and clustering instants from inflight rollback (apache#2631) [HUDI-1661] Exclude clustering commits from getExtraMetadataFromLatest API (apache#2632) [MINOR] Fix import in StreamerUtil.java (apache#2638) [HUDI-1618] Fixing NPE with Parquet src in multi table delta streamer (apache#2577) [HUDI-1662] Fix hive date type conversion for mor table (apache#2634) [HUDI-1673] Replace scala.Tule2 to Pair in FlinkHoodieBloomIndex (apache#2642) [MINOR] HoodieClientTestHarness close resources in AfterAll phase (apache#2646) [HUDI-1635] Improvements to Hudi Test Suite (apache#2628) [HUDI-1651] Fix archival of requested replacecommit (apache#2622) [HUDI-1663] Streaming read for Flink MOR table (apache#2640) [HUDI-1678] Row level delete for Flink sink (apache#2659) [HUDI-1664] Avro schema inference for Flink SQL table (apache#2658) [HUDI-1681] Support object storage for Flink writer (apache#2662) [HUDI-1685] keep updating current date for every batch (apache#2671) [HUDI-1496] Fixing input stream detection of GCS FileSystem (apache#2500) [HUDI-1684] Tweak hudi-flink-bundle module pom and reorganize the pacakges for hudi-flink module (apache#2669) [HUDI-1692] Bounded source for stream writer (apache#2674) [HUDI-1552] Improve performance of key lookups from base file in Metadata Table. (apache#2494) [HUDI-1552] Improve performance of key lookups from base file in Metadata Table. 
[HUDI-1695] Fixed the error messaging (apache#2679) [HUDI 1615] Fixing null schema in bulk_insert row writer path (apache#2653) [HUDI-845] Added locking capability to allow multiple writers (apache#2374) [HUDI-1701] Implement HoodieTableSource.explainSource for all kinds of pushing down (apache#2690) [HUDI-1704] Use PRIMARY KEY syntax to define record keys for Flink Hudi table (apache#2694) [HUDI-1688]hudi write should uncache rdd, when the write operation is finnished (apache#2673) [MINOR] Remove unused var in AbstractHoodieWriteClient (apache#2693) [HUDI-1653] Add support for composite keys in NonpartitionedKeyGenerator (apache#2627) [HUDI-1705] Flush as per data bucket for mini-batch write (apache#2695) [1568] Fixing spark3 bundles (apache#2625) [HUDI-1650] Custom avro kafka deserializer. (apache#2619) [HUDI-1667]: Fix a null value related bug for spark vectorized reader. (apache#2636) [HUDI-1709] Improving config names and adding hive metastore uri config (apache#2699) [MINOR][DOCUMENT] Update README doc for integ test (apache#2703) [HUDI-1710] Read optimized query type for Flink batch reader (apache#2702) [HUDI-1712] Rename & standardize config to match other configs (apache#2708) [hotfix] Log the error message for creating table source first (apache#2711) [HUDI-1495] Bump Flink version to 1.12.2 (apache#2718) [HUDI-1728] Fix MethodNotFound for HiveMetastore Locks (apache#2731) [HUDI-1478] Introduce HoodieBloomIndex to hudi-java-client (apache#2608) [HUDI-1729] Asynchronous Hive sync and commits cleaning for Flink writer (apache#2732) [HOTFIX] close spark session in functional test suite and disable spark3 test for spark2 (apache#2727) [HOTFIX] Disable ITs for Spark3 and scala2.12 (apache#2733) [HOTFIX] fix deploy staging jars script [MINOR] Add Missing Apache License to test files (apache#2736) [UBER] Fixed creation of HoodieMetadataClient which now uses a Builder pattern instead of a constructor. 
Reviewers: balajee, O955 Project Hoodie Project Reviewer: Add blocking reviewers!, PHID-PROJ-pxfpotkfgkanblb3detq! JIRA Issues: HUDI-593 Differential Revision: https://code.uberinternal.com/D5867129

… files

What is the purpose of the pull request

The index should bootstrap from existing base files if there are any. In the design, we load all the keys for one partition if we find that a key does not exist in the index during `processElement`; if there are many records for this partition, the processing may block and trigger back pressure. When all the records are loaded, we only need to check the state each time a record is tagged.
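The design described above can be sketched in plain Java as follows. This is only a model under stated assumptions, not the PR's implementation: the class `BootstrapIndexSketch`, the `partitionLoader` function (standing in for reading record keys out of a partition's base files), and the use of `java.util` collections instead of Flink state are all hypothetical.

```java
import java.util.HashMap;
import java.util.HashSet;
import java.util.Map;
import java.util.Optional;
import java.util.Set;
import java.util.function.Function;

/** Hypothetical model of an index that bootstraps lazily, one partition at a time. */
class BootstrapIndexSketch {
    private final Map<String, String> indexState = new HashMap<>(); // record key -> file id
    private final Set<String> loadedPartitions = new HashSet<>();
    private final Set<String> initialPartitions;
    private final Function<String, Map<String, String>> partitionLoader;
    private boolean allPartitionsLoaded;
    private int loadCount;

    BootstrapIndexSketch(Set<String> initialPartitions,
                         Function<String, Map<String, String>> partitionLoader) {
        this.initialPartitions = initialPartitions;
        this.partitionLoader = partitionLoader;
        this.allPartitionsLoaded = initialPartitions.isEmpty();
    }

    /** Looks up a record key, loading the whole partition on the first miss. */
    Optional<String> lookup(String partition, String recordKey) {
        if (!allPartitionsLoaded                      // fast path once bootstrap is done
                && !indexState.containsKey(recordKey) // miss in the index
                && loadedPartitions.add(partition)) { // partition not loaded yet
            indexState.putAll(partitionLoader.apply(partition)); // potentially slow step
            loadCount++;
            allPartitionsLoaded = loadedPartitions.containsAll(initialPartitions);
        }
        return Optional.ofNullable(indexState.get(recordKey));
    }

    boolean isAllPartitionsLoaded() {
        return allPartitionsLoaded;
    }

    int loadCount() {
        return loadCount;
    }
}
```

The `putAll` call is where a large partition would stall processing, which matches the back-pressure caveat in the description; after the last initial partition is loaded, every lookup reduces to a single state check.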

Brief change log

Verify this pull request

Added UTs.

Committer checklist

Has a corresponding JIRA in PR title & commit

Commit message is descriptive of the change

CI is green

Necessary doc changes done or have another open PR

For large changes, please consider breaking it into sub-tasks under an umbrella JIRA.