Persistent tower#10718

Conversation

Codecov Report

@@ Coverage Diff @@

## master #10718 +/- ##

=========================================

+ Coverage 82.1% 82.2% +0.1%

=========================================

Files 341 341

Lines 80245 81022 +777

=========================================

+ Hits 65896 66633 +737

- Misses 14349 14389 +40 |

2a50708 to

e53a9cb

Compare

9969102 to

49c9e77

Compare

|

@carllin EDIT: I've also managed to simplify the adjust logic a bit: dd25003 df55bfb |

Yeah, I think this is safe too. I lightly read #11727. |

|

|

||

| #[test] | ||

| #[serial] | ||

| #[ignore] |

There was a problem hiding this comment.

Towards addressing this review comment: #10718 (comment)

@carllin Well, I found it's very hard to make a validator violate optimistic confirmation if and only if tower is removed.

The hard part is that marking a slot as dead makes a validator to violate the optimistic conf. even if tower is persited. And eventually, the cluster makes new roots.

The initial situation is like this:

A: validator A (observer)

B: validator B (violator, will be restarted)

A & B:

0 -> 1 -> 2 -> ... -> 50 -> 51 (last vote for A and B; thus opt. confirmed)

Then mark 51 as dead only on B and B's new view after restart:

[...] = not in bank_forks

B:

0 -> 1 -> 2 -> ... -> 50 [-> 51 (stray last vote for B)]

Even if we keep (optimistically-confirmed) slot 51 in towrer, B's bank_forks doesn't have the ancestors info for slot 51 due to forcibly marking 51 as dead. So, this falls into this clause: let last_vote_ancestors = ... { &empty_ancestors };. Then, !last_vote_ancestors.contains(lockout_interval_start) always evaluates to true. So we can't correctly exclude A's stake using A's lockout_interval, which is actually ancestor of slot 51. Finally, B then starts to vote, creating a new fork like this:

B:

0 -> 1 -> 2 -> ... -> 49 -> 50 [-> 51 (stray last vote for B)]

|

+-> 56 -> 57

Ultimately, A starts to vote on the new bad fork, resulting in violated optimistic confirmation for the slot 51.

The forced dead slot 51 is the problem. But this shouldn't occur in practice. Usually, we should have ancestors for any voted slots in bank_forks. The only exception is newer snapshot. Snapshot is by-definition a new trustworthy root, so we can safely ignore unavailable ancestors for any stray last vote. Thus, this isn't bug per se.

But I couldn't come up with a good test scenario/strategy. Any suggestions?

There was a problem hiding this comment.

@ryoqun, ah I see! Does that mean we can remove this: https://github.com/solana-labs/solana/pull/10718/files#diff-a6e7be832768ca536ac5b7450a42ee09R1439-R1444 as well now?

There was a problem hiding this comment.

@ryoqun Hmm I couldn't come up with a simple example, but here's an interesting alternative idea:

You have validator A + B with 31% and 36% of the stake:

0 -> 1 -> 2 -> 3 (A + B vote, optimistically confirmed)

Turn off A + B, and truncate the ledger after slot 3 (simulate votes not landing in next slot). Start validator C with 33% of the stake with same ledger, but only up to slot 2. Have C generate some blocks like:

0 -> 1-> 2 -> 4

Then restart A which had 31% of the stake. With the tower, from A's perspective it sees:

0 -> 1 -> 2 -> 3 (voted)

|

-> 4 -> 5 (C's vote for 4)

The fork choice rule weights look like:

0 -> 1 -> 2 (ABC) -> 3

|

-> 4 (C) -> 5

Without the tower, A would choose to vote on the fork with 4->5. This is true even if A generates a new fork starting at slot 3 because C has more stake than A so A will eventually pick the fork C is on. Furthermore B's vote on 3 is not observable because there are no descendants of slot 3, so that fork will not be chose over C's fork

With the persisted tower however, A should not be able to generate a switching proof.

There was a problem hiding this comment.

oh this looks interesting case. Because this isn't critical part of this pr, I'll address this as a follow-up pr.

First let's land this 1700-lines pr to free me of rebasing... ;)

|

@ryoqun I think we should wait to check this in until after the mainnet upgrade so that it can bake on Tds for a while |

@carllin Whoa. This pr finally got LGTM-ed? Yay!!! So, let's target v1.4? So, merging is ok? and backporting (to v1.3) is not ok?. I'm getting tired of re-re-re-re-...-rebases, maybe another one due to this #12340. ;) |

|

@ryoqun yeah lets target 1.4! |

Sorry! Want me to wait until you merge this PR? |

@mvines if it's not too painful and/or time-pressured for you to rebase, please wait. I'll merge this pr in 30-min or so after final last-minute checking. |

|

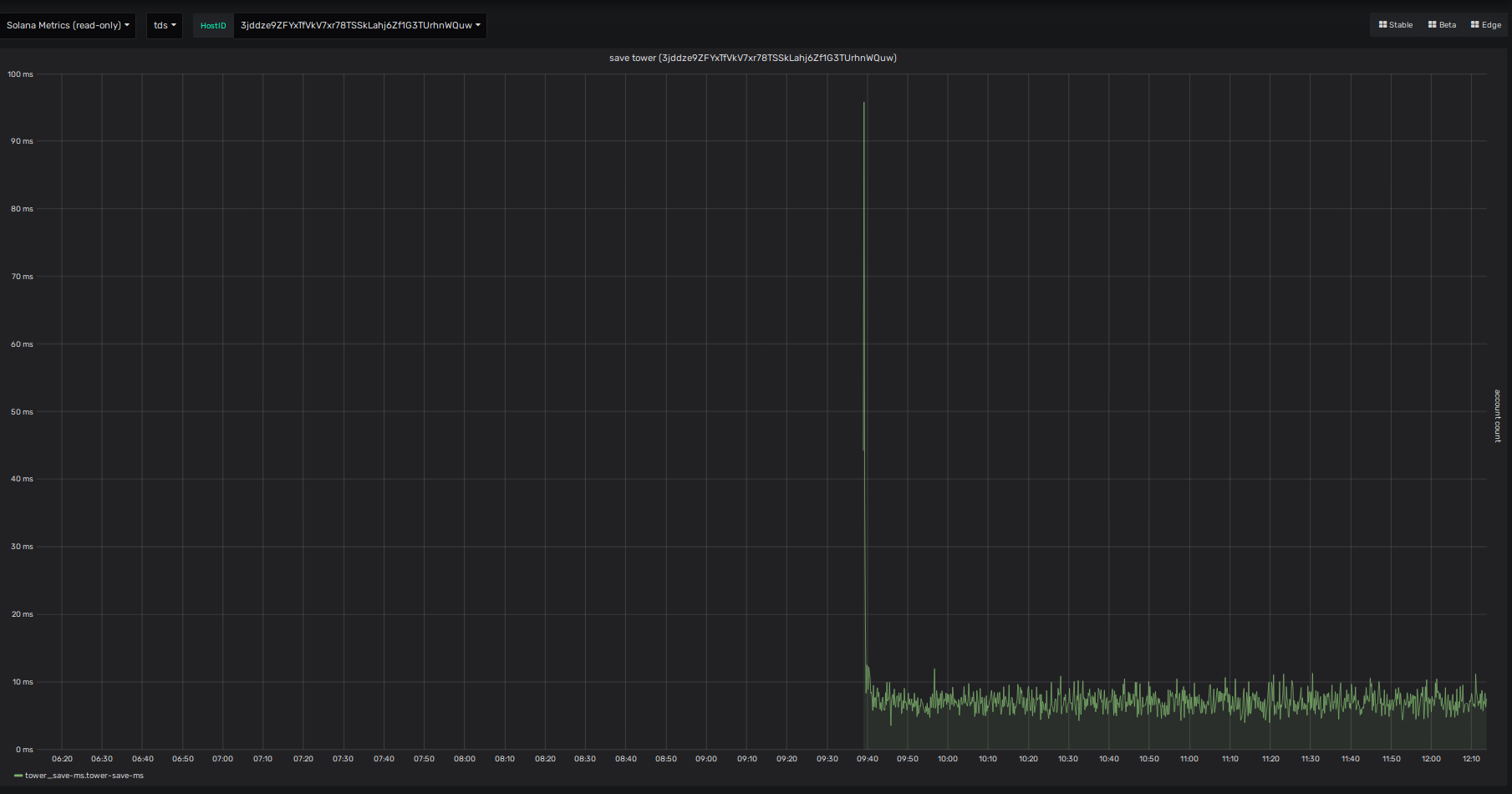

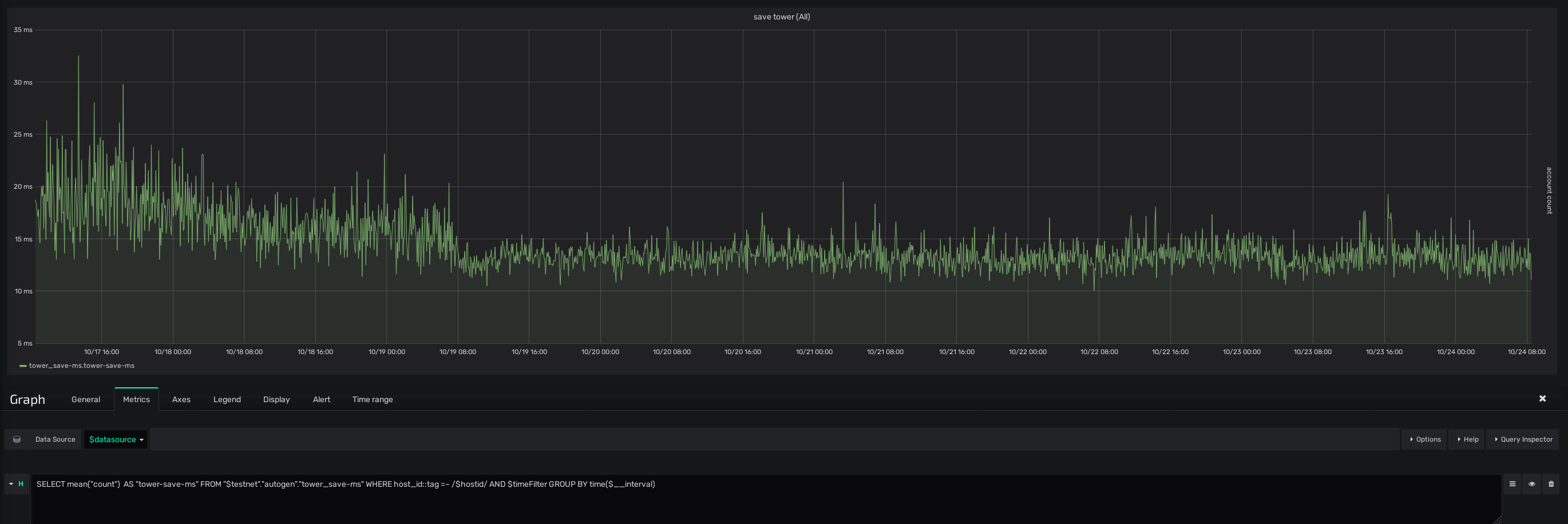

hmm. this isn't super fun; but it looks like even un-fsynced tower save takes around 7ms (medium): And this directly delays that amount for any level of confirmations. CC: @carllin |

|

come to think of it, I remembered we sign the saved tower. |

|

Weekly update on the perf and error monitoring! it seems that mean of tower save time is still high. I'd like this to be well below 1ms.. After the first upgrade with tower. Also, it seems that there is no tower_error/tower_warn/panic metric indicating some bug in persistent tower (checked with chronograf). (Well, #12671 isn't landed, though). Already 200+ validators experienced the upgrade: |

* Save/restore Tower * Avoid unwrap() * Rebase cleanups * Forcibly pass test * Correct reconcilation of votes after validator resume * d b g * Add more tests * fsync and fix test * Add test * Fix fmt * Debug * Fix tests... * save * Clarify error message and code cleaning around it * Move most of code out of tower save hot codepath * Proper comment for the lack of fsync on tower * Clean up * Clean up * Simpler type alias * Manage tower-restored ancestor slots without banks * Add comment * Extract long code blocks... * Add comment * Simplify returned tuple... * Tweak too aggresive log * Fix typo... * Add test * Update comment * Improve test to require non-empty stray restored slots * Measure tower save and dump all tower contents * Log adjust and add threshold related assertions * cleanup adjust * Properly lower stray restored slots priority... * Rust fmt * Fix test.... * Clarify comments a bit and add TowerError::TooNew * Further clean-up arround TowerError * Truly create ancestors by excluding last vote slot * Add comment for stray_restored_slots * Add comment for stray_restored_slots * Use BTreeSet * Consider root_slot into post-replay adjustment * Tweak logging * Add test for stray_restored_ancestors * Reorder some code * Better names for unit tests * Add frozen_abi to SavedTower * Fold long lines * Tweak stray ancestors and too old slot history * Re-adjust error conditon of too old slot history * Test normal ancestors is checked before stray ones * Fix conflict, update tests, adjust behavior a bit * Fix test * Address review comments * Last touch! * Immediately after creating cleaning pr * Revert stray slots * Revert comment... * Report error as metrics * Revert not to panic! and ignore unfixable test... * Normalize lockouts.root_slot more strictly * Add comments for panic! and more assertions * Proper initialize root without vote account * Clarify code and comments based on review feedback * Fix rebase * Further simplify based on assured tower root * Reorder code for more readability Co-authored-by: Michael Vines <mvines@gmail.com>

* Save/restore Tower * Avoid unwrap() * Rebase cleanups * Forcibly pass test * Correct reconcilation of votes after validator resume * d b g * Add more tests * fsync and fix test * Add test * Fix fmt * Debug * Fix tests... * save * Clarify error message and code cleaning around it * Move most of code out of tower save hot codepath * Proper comment for the lack of fsync on tower * Clean up * Clean up * Simpler type alias * Manage tower-restored ancestor slots without banks * Add comment * Extract long code blocks... * Add comment * Simplify returned tuple... * Tweak too aggresive log * Fix typo... * Add test * Update comment * Improve test to require non-empty stray restored slots * Measure tower save and dump all tower contents * Log adjust and add threshold related assertions * cleanup adjust * Properly lower stray restored slots priority... * Rust fmt * Fix test.... * Clarify comments a bit and add TowerError::TooNew * Further clean-up arround TowerError * Truly create ancestors by excluding last vote slot * Add comment for stray_restored_slots * Add comment for stray_restored_slots * Use BTreeSet * Consider root_slot into post-replay adjustment * Tweak logging * Add test for stray_restored_ancestors * Reorder some code * Better names for unit tests * Add frozen_abi to SavedTower * Fold long lines * Tweak stray ancestors and too old slot history * Re-adjust error conditon of too old slot history * Test normal ancestors is checked before stray ones * Fix conflict, update tests, adjust behavior a bit * Fix test * Address review comments * Last touch! * Immediately after creating cleaning pr * Revert stray slots * Revert comment... * Report error as metrics * Revert not to panic! and ignore unfixable test... * Normalize lockouts.root_slot more strictly * Add comments for panic! and more assertions * Proper initialize root without vote account * Clarify code and comments based on review feedback * Fix rebase * Further simplify based on assured tower root * Reorder code for more readability Co-authored-by: Michael Vines <mvines@gmail.com>

Problem

tower isn't persisted.

Summary of Changes

Persist it

Todo

Fixes #6936