-

Notifications

You must be signed in to change notification settings - Fork 29.1k

[SPARK-28907][CORE] Review invalid usage of new Configuration() #25616

New issue

Have a question about this project? Sign up for a free GitHub account to open an issue and contact its maintainers and the community.

By clicking “Sign up for GitHub”, you agree to our terms of service and privacy statement. We’ll occasionally send you account related emails.

Already on GitHub? Sign in to your account

Changes from all commits

File filter

Filter by extension

Conversations

Jump to

Diff view

Diff view

There are no files selected for viewing

| Original file line number | Diff line number | Diff line change | ||||||||

|---|---|---|---|---|---|---|---|---|---|---|

|

|

@@ -45,6 +45,7 @@ class HadoopFileWholeTextReader(file: PartitionedFile, conf: Configuration) | |||||||||

| val attemptId = new TaskAttemptID(new TaskID(new JobID(), TaskType.MAP, 0), 0) | ||||||||||

| val hadoopAttemptContext = new TaskAttemptContextImpl(conf, attemptId) | ||||||||||

| val reader = new WholeTextFileRecordReader(fileSplit, hadoopAttemptContext, 0) | ||||||||||

| reader.setConf(hadoopAttemptContext.getConfiguration) | ||||||||||

|

Contributor

Author

There was a problem hiding this comment. Choose a reason for hiding this commentThe reason will be displayed to describe this comment to others. Learn more.

However, I am wondering for I am wondering if we should remove

Member

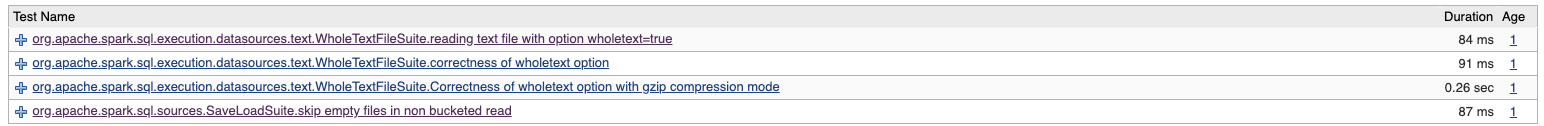

There was a problem hiding this comment. Choose a reason for hiding this commentThe reason will be displayed to describe this comment to others. Learn more. Is there an existing test that fails without this change, as you mention? should it be reenabled?

Contributor

Author

There was a problem hiding this comment. Choose a reason for hiding this commentThe reason will be displayed to describe this comment to others. Learn more.

However, the failure is introduced by my change to spark/core/src/main/scala/org/apache/spark/input/WholeTextFileRecordReader.scala Lines 70 to 73 in 149de72

We use

Member

There was a problem hiding this comment. Choose a reason for hiding this commentThe reason will be displayed to describe this comment to others. Learn more. I see, they're not failing in master but can fail if run in an env where Hadoop config files are present?

Contributor

Author

There was a problem hiding this comment. Choose a reason for hiding this commentThe reason will be displayed to describe this comment to others. Learn more.

Eh, yes, they are not failing in master. The code(master) even normally won't fail in an env where Hadoop configs are present. They could fail or get unexpected result unless the Hadoop configs are incorrectly configured in executor env(such as yarn-cluster), even user supplies correct configs (passed to |

||||||||||

| reader.initialize(fileSplit, hadoopAttemptContext) | ||||||||||

| new RecordReaderIterator(reader) | ||||||||||

| } | ||||||||||

|

|

||||||||||

There was a problem hiding this comment.

Choose a reason for hiding this comment

The reason will be displayed to describe this comment to others. Learn more.

This is needed because

initNextRecordReadercould be called in the constructor, whichgetConfwould be null.We have to override

setConftoo to set conf for the first reader.