[SPARK-28907][CORE] Review invalid usage of new Configuration() #25616

advancedxy wants to merge 2 commits into apache:master


| if (r) { | ||

| this.curReader.asInstanceOf[HConfigurable].setConf(getConf) | ||

| if (getConf != null) { | ||

| this.curReader.asInstanceOf[HConfigurable].setConf(getConf) |


This is needed because initNextRecordReader can be called from the constructor, at which point getConf still returns null.

We also have to override setConf so that the conf is set on the first reader.
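
A minimal sketch of the pattern described above, using hypothetical stand-in types (Conf, Configured, InnerReader, CombineReader) rather than the real Hadoop and Spark classes, so that it runs without Hadoop on the classpath. It only illustrates why both the null guard and the setConf override are needed when the first reader is created inside the constructor; it is not the code from this PR.

```scala
object ConfPropagationSketch {
  // Stand-in for Hadoop's Configuration (assumption, for illustration only).
  final class Conf(val name: String)

  // Stand-in for a Configurable base class: conf is null until setConf is called.
  class Configured {
    private var conf: Conf = null
    def setConf(c: Conf): Unit = { conf = c }
    def getConf: Conf = conf
  }

  // Hypothetical per-file reader that wants to receive the conf.
  class InnerReader extends Configured

  // Hypothetical combining reader that creates its first inner reader eagerly.
  class CombineReader(numFiles: Int) extends Configured {
    private var idx = 0
    var curReader: InnerReader = null

    // Runs during construction, before any caller had a chance to call setConf.
    initNextRecordReader()

    def initNextRecordReader(): Boolean = {
      if (idx >= numFiles) {
        curReader = null
        false
      } else {
        curReader = new InnerReader
        // Guard: during construction getConf is still null, so skip propagation.
        if (getConf != null) curReader.setConf(getConf)
        idx += 1
        true
      }
    }

    // Override so the reader created in the constructor also receives the conf.
    override def setConf(c: Conf): Unit = {
      super.setConf(c)
      if (curReader != null) curReader.setConf(c)
    }
  }
}
```

Readers created after setConf get the conf through the guard in initNextRecordReader, while the very first reader gets it through the overridden setConf.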


cc @gatorsmile.

Ah, would you please add me to the whitelist?


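(Drive-by comment; not a serious review): Is it worth adding a Scalastyle regex check to ban the zero-argument Configuration constructor? See the existing Scalastyle XML configurations for examples of how this was done for other similar API (mis)uses. This would prevent re-occurrence of this problem in new code.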


ok to test


Test build #109928 has finished for PR 25616 at commit


advancedxy left a comment

> Is it worth adding a Scalastyle regex check to ban the zero-argument Configuration constructor? See the existing Scalastyle XML configurations for examples of how this was done for other similar API (mis)uses. This would prevent re-occurrence of this problem in new code.

@joshrosen-stripe This is a good point. Two questions remain open.

1. new Configuration() is mostly used in Suite/Test files. Can we skip these files in the Scalastyle XMLs?
2. new Configuration() is valid and should be called in some places. How can we whitelist these calls, for example by allowing them in certain classes?
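
For illustration, such a check might look roughly like the fragment below, which follows the shape of a Scalastyle RegexChecker entry. The customId hadoopconfiguration, the regex, and the message are assumptions made for this sketch and are not part of this PR; the regex is deliberately naive and would also match occurrences inside comments.

```xml
<!-- Hypothetical check: customId, regex and message are illustrative assumptions. -->
<check customId="hadoopconfiguration" level="error" class="org.scalastyle.file.RegexChecker" enabled="true">
  <parameters><parameter name="regex">new Configuration\(\)</parameter></parameters>
  <customMessage>Avoid the zero-argument Hadoop Configuration constructor, which loads default resources.
  Pass an existing Configuration instead, or wrap an intended call with
  // scalastyle:off hadoopconfiguration
  new Configuration()
  // scalastyle:on hadoopconfiguration</customMessage>
</check>
```

Call sites where the zero-argument constructor is intended could then be wrapped in the scalastyle:off / scalastyle:on comments shown in the message, which is one possible answer to the whitelisting question. Whether test sources can be excluded wholesale depends on how the build wires up Scalastyle and is left open here.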

| val attemptId = new TaskAttemptID(new TaskID(new JobID(), TaskType.MAP, 0), 0) | ||

| val hadoopAttemptContext = new TaskAttemptContextImpl(conf, attemptId) | ||

| val reader = new WholeTextFileRecordReader(fileSplit, hadoopAttemptContext, 0) | ||

| reader.setConf(hadoopAttemptContext.getConfiguration) |


WholeTextFileRecordReader is Configurable, so setConf should be called after creation.

This is why the tests were failing before this change.

However, for org.apache.spark.input.WholeTextFileRecordReader and org.apache.spark.input.ConfigurableCombineFileRecordReader, we can already retrieve the config from org.apache.hadoop.mapreduce.TaskAttemptContext, so there is no need to make these classes Configurable.

I am wondering whether we should remove the Configurable trait from the related classes all at once. What do you think, @gatorsmile?


Is there an existing test that fails without this change, as you mention? Should it be re-enabled?


I see, they're not failing in master but can fail if run in an env where Hadoop config files are present?


> I see, they're not failing in master but can fail if run in an env where Hadoop config files are present?

Eh, yes, they are not failing in master. The code in master normally won't fail even in an env where Hadoop configs are present. It could fail or produce unexpected results only if the Hadoop configs are incorrectly configured in the executor env (such as yarn-cluster), even when the user supplies correct configs (passed to TaskAttemptContext).


Test build #109937 has finished for PR 25616 at commit


Test build #4851 has finished for PR 25616 at commit


Merged to master

What changes were proposed in this pull request?

Replaces some incorrect usages of new Configuration(), as it will load default configs defined in Hadoop.

Why are the changes needed?

An unexpected config could be accessed instead of the expected one; see SPARK-28203 for an example.
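
A small sketch of the difference, assuming hadoop-common is on the classpath. The object name, the lookup helper, and the key used in the usage note are hypothetical; only the Configuration constructors and get/set calls come from Hadoop.

```scala
import org.apache.hadoop.conf.Configuration

object ConfigurationSketch {
  // Returns (value seen by a fresh Configuration, value seen by a propagated one).
  def lookup(key: String, value: String): (Option[String], Option[String]) = {
    // Stands in for the conf the user actually supplied to the job.
    // Passing false skips loading the default resources.
    val jobConf = new Configuration(false)
    jobConf.set(key, value)

    // A fresh Configuration loads the default resources found on the classpath
    // (core-default.xml, core-site.xml) and knows nothing about jobConf.
    val fresh = new Configuration()

    // Copying the supplied conf keeps the settings the user provided.
    val propagated = new Configuration(jobConf)

    (Option(fresh.get(key)), Option(propagated.get(key)))
  }
}
```

Calling ConfigurationSketch.lookup with a key that is not defined in any default resource should give an empty first element and the supplied value as the second, which is the kind of mismatch the description refers to.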

Does this PR introduce any user-facing change?

No.

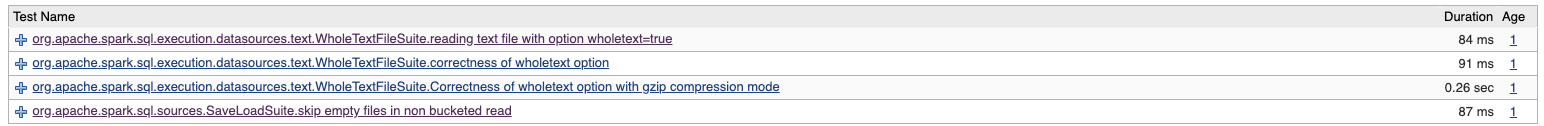

How was this patch tested?

Existing tests.