Ask if overwrite existing ClearML experiment #9865

mikel-brostrom wants to merge 18 commits into ultralytics:master

Signed-off-by: Mike <yolov5.deepsort.pytorch@gmail.com>

for more information, see https://pre-commit.ci

|

@mikel-brostrom nice idea. But I'm not sure we should have a function that stalls forever waiting for input. Instead, we should have this function time out after a fixed interval if the user doesn't respond and go ahead with the default setting. What do you think? |

|

See the Timeout class in utils/general: # YOLOv5 Timeout class. Usage: @Timeout(seconds) decorator or 'with Timeout(seconds):' context manager |
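
As a rough illustration of the pattern discussed here (time out on the prompt and fall back to the default), the following is a minimal sketch, not the YOLOv5 Timeout class itself. The names PromptTimeout, answer_or_default and slow_user are hypothetical, and the sketch assumes a Unix-like system and the main thread, since it relies on SIGALRM.

```python
import contextlib
import signal
import time


class PromptTimeout(contextlib.ContextDecorator):
    """Hypothetical stand-in for a timeout helper (Unix only, main thread only)."""

    def __init__(self, seconds):
        self.seconds = seconds

    def _on_alarm(self, signum, frame):
        # Runs when the timer fires; the exception interrupts the blocked call.
        raise TimeoutError(f"no response within {self.seconds}s")

    def __enter__(self):
        # Install our handler and arm a one-shot real-time timer.
        self._previous = signal.signal(signal.SIGALRM, self._on_alarm)
        signal.setitimer(signal.ITIMER_REAL, self.seconds)
        return self

    def __exit__(self, exc_type, exc_value, traceback):
        # Always disarm the timer and restore the earlier handler.
        signal.setitimer(signal.ITIMER_REAL, 0)
        signal.signal(signal.SIGALRM, self._previous)
        # Swallow only the timeout, so the caller can carry on with its default.
        return exc_type is not None and issubclass(exc_type, TimeoutError)


def answer_or_default(ask, default, seconds):
    """Return ask() if it finishes within `seconds`, otherwise `default`."""
    answer = default
    with PromptTimeout(seconds):
        answer = ask()
    return answer


def slow_user():
    """Simulates a user who takes too long to answer."""
    time.sleep(2)
    return "y"


if __name__ == "__main__":
    # The simulated user needs 2 s but only 0.1 s is allowed, so the default wins.
    print(answer_or_default(slow_user, default="n", seconds=0.1))
```

In a real prompt, `ask` would wrap `input(...)`. Swallowing the TimeoutError inside the context manager keeps the calling code free of try/except, at the cost of the caller having to pre-set its default before entering the block.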

|

Let's add @timeout(10) on |

|

Maybe make it 20 sec? 10 sec will make users like me anxious 😆 |

|

Ok, let's do 20 xD |

|

Busting in here to add my 2 cents: ClearML will not by default overwrite an experiment (even one with the same name) UNLESS it has no artifacts/model files etc. This was originally designed to not clutter the interface with tasks that have no value to the user. If you don't like this behaviour, the easier way to stop this from happening is to add If so, we could add it like this by default or allow the user to pass a new command line argument to set this? |

|

The problem I see is that sometimes you may want to interrupt a training run with partial results, as they may be enough for your particular experiment, especially for those of us who are low on GPUs. This means that no artifacts/models get uploaded, which leads to this issue. I think |

|

@thepycoder @mikel-brostrom yeah I'd also prefer |

|

The way I see it is that by default the experiments should not be overwritten but I should be able to overwrite them if I want to |

|

I agree, there are 2 parts to this though:

EDIT: Actually, overriding a task by its ID is in fact possible! I don't know if it's the best way to go, but it is available should you think it a better option @AyushExel |

|

@thepycoder |

|

I'll get right to it :) |

|

@mikel-brostrom @AyushExel I've made a PR that sets the default behaviour to not override and lets you opt in to overriding, either the newest task or a specific one by ID. Thanks to ClearML already supporting this, it was straightforward to add :) Thanks all! |

|

@thepycoder @mikel-brostrom hi guys, sorry for the delay here. Is this PR good to go? I see a related PR in #10363. Do these work together, or should I do one or the other? |

|

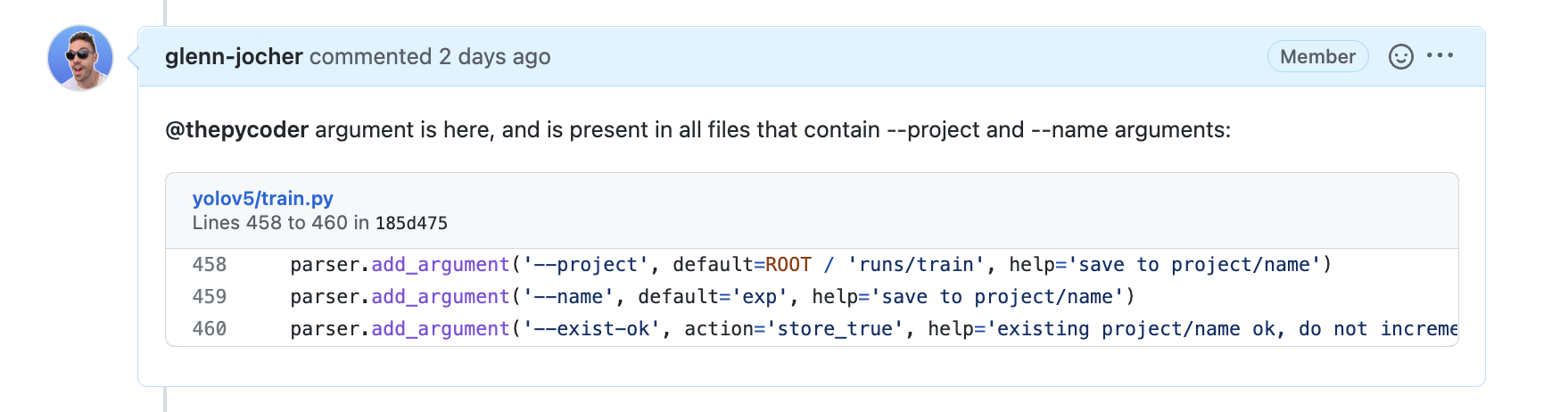

@mikel-brostrom @thepycoder I'd like to close this out along with #10363. Are these two PRs related, should we do one or the other? Can you guys please let me know the status of these two? Thanks! Also as I mentioned in #10363 we have an existing --exist-ok argument that is meant to allow for re-use of existing projects: |

|

Correct me if I am wrong @thepycoder, but your PR solves my issue stated here in #9865 as well, right? Just with the internal functionality in ClearML instead of my workaround? In that case #10363 is the one to merge. I have not tried it out, however. |

|

@mikel-brostrom is right, #10363 is the one to use here (sorry for stealing your spotlight @mikel-brostrom, thanks so much for reporting!). I've just updated it though, according to @glenn-jocher's comments on it. |

np @thepycoder |

|

closing this down |

|

@mikel-brostrom good news 😃! Your original issue may now be fixed ✅ in PR #10363 by @thepycoder. To receive this update:

Thank you for spotting this issue and informing us of the problem. Please let us know if this update resolves the issue for you, and feel free to inform us of any other issues you discover or feature requests that come to mind. Happy trainings with YOLOv5 🚀! |

Overwriting experiment data without asking is very painful and a huge waste of time. This can be solved by a simple y/n question.

If a task with the chosen name already exists in ClearML, ask if you want to overwrite it.
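
The y/n question described above could look roughly like the sketch below. It is an illustration only, not the code in this PR: may_use_task_name is a hypothetical helper, the set of existing task names is assumed to have been fetched from ClearML beforehand, and the ask parameter exists so the prompt can be swapped out in tests. An empty or unrecognized reply is treated as "no", so nothing is overwritten by accident.

```python
def may_use_task_name(task_name, existing_task_names, ask=input):
    """Return True if it is fine to log under `task_name`.

    Hypothetical helper: `existing_task_names` is assumed to be a collection
    of task names already present in the ClearML project.
    """
    if task_name not in existing_task_names:
        return True  # nothing to overwrite, no need to ask

    reply = ask(f"Task '{task_name}' already exists. Overwrite? [y/N] ")
    # Only an explicit yes allows the overwrite; everything else means no.
    return reply.strip().lower() in {"y", "yes"}


if __name__ == "__main__":
    # Scripted replies stand in for a real user at the terminal.
    print(may_use_task_name("exp", {"exp", "baseline"}, ask=lambda prompt: "y"))
    print(may_use_task_name("exp", {"exp", "baseline"}, ask=lambda prompt: ""))
```

Defaulting to "no" matches the preference voiced in the conversation that experiments should not be overwritten unless the user explicitly asks for it.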

🛠️ PR Summary

Made with ❤️ by Ultralytics Actions

🌟 Summary

Enhanced ClearML integration with a timeout feature for avoiding experiment overwrite in YOLOv5.

📊 Key Changes

Timeout decorator to prevent hanging during user input.

🎯 Purpose & Impact