Use only PositionsAppender buffers in PagePartitioner#15839

Use only PositionsAppender buffers in PagePartitioner#15839sopel39 merged 1 commit intotrinodb:masterfrom

Conversation

b70f88a to

8529a7a

Compare

bfe095f to

2af66fd

Compare

core/trino-main/src/main/java/io/trino/operator/output/PagePartitionerPool.java

Outdated

Show resolved

Hide resolved

There was a problem hiding this comment.

Why not "appendRle" here?

There was a problem hiding this comment.

appendRle is less efficient in most cases in terms of CPU.

I did consider it because it has the upside of producing RLE block if all input blocks are RLE with the same value so network traffic would decrease but decided that overhead for every row is not worth it.

I did not run benchmarks for this though, I will do it because if the overhead is not that big producing RLE has its value.

There was a problem hiding this comment.

I ran JMH to compare append vs appendRle and the former is significantly slower (up to 3X)

| channelCount | enableCompression | nullRate | partitionCount | positionCount | type | append | appendRle | appendRle % |

| ------------ | ----------------- | -------- | -------------- | ------------- | --------------------- | ------- | --------- | ----------- |

| 1 | FALSE | 0 | 1000 | 10 | RLE_BIGINT | 118.514 | 133.23 | 12.4170984 |

| 1 | FALSE | 0 | 1000 | 10 | ROW_RLE_BIGINT_BIGINT | 77.396 | 81.651 | 5.49770014 |

| 1 | FALSE | 0 | 1000 | 100 | RLE_BIGINT | 152.432 | 226.563 | 48.63217697 |

| 1 | FALSE | 0 | 1000 | 100 | ROW_RLE_BIGINT_BIGINT | 112.26 | 129.523 | 15.37769464 |

| 1 | FALSE | 0 | 1000 | 500 | RLE_BIGINT | 223.786 | 563.822 | 151.9469493 |

| 1 | FALSE | 0 | 1000 | 500 | ROW_RLE_BIGINT_BIGINT | 210.256 | 363.192 | 72.73799559 |

| 1 | FALSE | 0 | 1000 | 1000 | RLE_BIGINT | 312.515 | 995.08 | 218.4103163 |

| 1 | FALSE | 0 | 1000 | 1000 | ROW_RLE_BIGINT_BIGINT | 351.513 | 684.464 | 94.71939871 |

| 1 | FALSE | 0 | 1000 | 1999 | RLE_BIGINT | 492.314 | 1876.512 | 281.1616164 |

| 1 | FALSE | 0 | 1000 | 1999 | ROW_RLE_BIGINT_BIGINT | 683.095 | 1299.389 | 90.22083312 |

| 1 | FALSE | 0.2 | 1000 | 10 | RLE_BIGINT | 118.375 | 145.382 | 22.81478353 |

| 1 | FALSE | 0.2 | 1000 | 10 | ROW_RLE_BIGINT_BIGINT | 88.252 | 92.292 | 4.577799937 |

| 1 | FALSE | 0.2 | 1000 | 100 | RLE_BIGINT | 152.097 | 223.736 | 47.10086326 |

| 1 | FALSE | 0.2 | 1000 | 100 | ROW_RLE_BIGINT_BIGINT | 98.02 | 119.2 | 21.60783514 |

| 1 | FALSE | 0.2 | 1000 | 500 | RLE_BIGINT | 223.654 | 566.095 | 153.1119497 |

| 1 | FALSE | 0.2 | 1000 | 500 | ROW_RLE_BIGINT_BIGINT | 184.665 | 305.838 | 65.61774023 |

| 1 | FALSE | 0.2 | 1000 | 1000 | RLE_BIGINT | 313.747 | 1021.202 | 225.4858214 |

| 1 | FALSE | 0.2 | 1000 | 1000 | ROW_RLE_BIGINT_BIGINT | 293.456 | 538.015 | 83.33753612 |

| 1 | FALSE | 0.2 | 1000 | 1999 | RLE_BIGINT | 490.783 | 1916.683 | 290.5357358 |

| 1 | FALSE | 0.2 | 1000 | 1999 | ROW_RLE_BIGINT_BIGINT | 548.206 | 1061.718 | 93.67135712 |

2af66fd to

e1cc7e0

Compare

|

jmh results that compare append is way faster |

e1cc7e0 to

dc262e3

Compare

|

rebased on the master due to "Error: Configuration property 'task-retry-attempts-overall' was not used" issue |

There was a problem hiding this comment.

seems like it might not be beneficial (e.g. switch-to-flat for 1000 rows to just append single row). This only makes sense if the you know you will call this method a lot of times from now on.

There was a problem hiding this comment.

we could handle rle here at some CPU cost but we don't handle it in the blockBuilder case anyway

There was a problem hiding this comment.

Yes, but appenders are not block builders nor they should behave like ones. Appenders are optimized to process big batches of positions (including special block types like RLEs and dictionaries). Here we quickly fallback to block-builder behaviour just because we've seen single row append.

There was a problem hiding this comment.

I don't think we need a new method TBH. It seems you could just optimize either existing append or appendRle for single row use.

Also comment here should probably highly discourage usage of single row append as appenders won't be efficient in that case.

There was a problem hiding this comment.

It feels like the cost of allocating single position arrays for every row might be significant. @lukasz-stec Do you know what most of the overhead comes from?

There was a problem hiding this comment.

Well, I could add an if (positionCount == 1) to appendRle to optimize it and then most of overhead would be that if. Not only during execution but also during compilation, which could impact batch append path.

IMO separate method is a lot cleaner and easier to optimize and since PositionsAppender is not a generic API to be used anywhere, at least not at the moment ,we shouldn't worry about adding a method to it.

There was a problem hiding this comment.

it and then most of overhead would be that if

I don't think that would be an overhead. It won't be for batched append. And for row-by-row appends this if would evaluate to same value, so it's largely irrelevant.

There was a problem hiding this comment.

it seems we could just modify partitionPage as:

...

long buildersSizeInBytes = getSizeInBytes();

// don't exceed average maxPageSize when appender and builders are combined together

if (buildersSizeInBytes > maxMemory.toBytes()) {

flushPositionsAppenders(true);

flushPageBuilders(true);

}

updateMemoryUsage();

}

Seems like this change would be much smaller and doesn't rise questions about potential regressions (especially for FTE where there will be a lot of small partitions potentially).

There was a problem hiding this comment.

actually, the if there raises questions about potential regressions.

It can easily produce small pages. For example, if 50% of positions are handled by PostiionsAppenders and the other 50% by BlockBuilders this will cause the average page size to be 50% of the expected page size given enough partitions.

If only 5% of positions go to either one then this will produce a lot of extremely small pages because it will always flush almost empty buffers

There was a problem hiding this comment.

It can easily produce small pages. For example, if 50% of positions are handled by PostiionsAppenders and the other 50% by BlockBuilders this will cause the average page size to be 50% of the expected page size given enough partitions.

50% is still big enough too, right?

If only 5% of positions go to either one then this will produce a lot of extremely small pages because it will always flush almost empty buffers

If you have 5% vs 95%, then you have one small page and one large page. It is fine, see Javadoc for io.trino.operator.project.MergePages

|

I would prefer change like #15839 (comment) since appenders are optimized for batch use and I'm not sure we want to change that (e.g. they are nested and we don't consider cost of recursive calls because they are not important for batch calls). |

dc262e3 to

df1b3f7

Compare

|

I dropped not (strongly) related commits and rebased on the master |

|

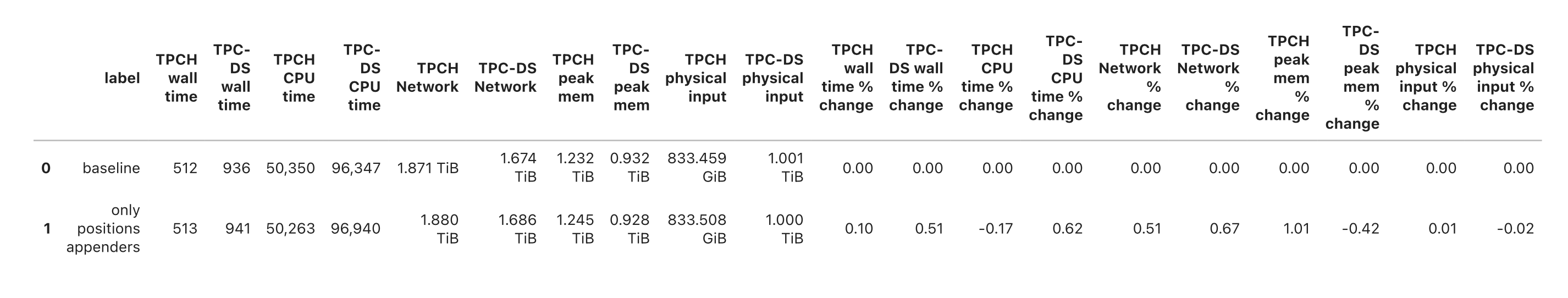

tpch/tpcds benchmark results. Overall no difference. |

There was a problem hiding this comment.

could you add a condition to check that Rle block after single-row append does no longer produce rle?

There was a problem hiding this comment.

done in testRleAppendedWithSinglePositionDoesNotProduceRle

df1b3f7 to

109bda7

Compare

PagePartitioner used both PositionsAppender and BlockBuilder based buffers to support case with small pages relative to partition count where partitioning row by row is more efficient. This has the downside of possibly doubling the amount of memory used to buffer the outgoing pages before they are flushed to the OutputBuffer. This commit switches to only PositionsAppender buffers without much performance impact by adding efficient single position append in PositionsAppender implementations.

d4b74f1 to

f9ff0eb

Compare

Description

The row-based approach in

PagePartitioneris faster than the columnar one when the number of positions in a page is smaller than the partition count.For this reason, we currently maintain two buffers in PagePartitioner, one for a columnar approach and one for row-based.

This has the downside of possibly doubling the amount of memory we use to buffer the outgoing pages before there are flushed to the

OutputBuffer.This change switches to only

PositionsAppenderbuffers without much performance impact by adding efficient single position append inPositionsAppenderimplementations.Full JMH benchmark results

The average difference is around 0 but there are a few cases with some degradation, usually 5-10% but also some with higher, mostly for the extreme 10 positions per page case.

In general, I think this looks good.

Additional context and related issues

Release notes

( X) This is not user-visible or docs only and no release notes are required.

( ) Release notes are required, please propose a release note for me.

( ) Release notes are required, with the following suggested text: