helm/metrics: add a new gauge metric to monitor helm releases #579

zonggen wants to merge 4 commits into openshift:master


    - create
    - update
    - delete
    - apiGroups: [""]


This is required to run helm list --all-namespaces with Helm SDK.

Error without it: metric helm_chart_release_health_status unhandled: list: failed to list: secrets is forbidden: User "system:serviceaccount:openshift-console-operator:console-operator" cannot list resource "secrets" in API group "" at the cluster scope

Adds a `helm_chart_release_health_status` gauge metric to monitor the status of the current helm releases. Closes: https://issues.redhat.com/browse/HELM-224 Signed-off-by: Allen Bai <abai@redhat.com>

e3c498b to d00be4c

dperaza4dustbit left a comment

All those vendor files came from the helm sdk reference?

    }

    for _, release := range releases {
        if status := release.Info.Status.String(); status == "deployed" {


So only `deployed` is healthy; what about the other states? I would say the value is 0 if the status is unknown or failed, and 1 for all other states.

    for _, release := range releases {
        if status := release.Info.Status.String(); status == "deployed" {
            klog.V(4).Infof("metric helm_chart_release_health_status 1: %s %s %s", release.Name, release.Chart.Metadata.Name, release.Chart.Metadata.Version)
            helmChartReleaseHealthStatus.WithLabelValues(release.Name, release.Chart.Metadata.Name, release.Chart.Metadata.Version).Set(1)


The only difference between this and the line below is the 0 or 1. I would set `healthStatus := 1`, then change it to 0 if the status is unknown or failed. Then make a single call to klog and to `helmChartReleaseHealthStatus`, passing `healthStatus`.
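For reference, the refactor suggested in this comment could look roughly like the sketch below. It is not the code from the PR: the `healthStatus` helper is a hypothetical name, and the sample statuses in `main` are illustrative values standing in for `release.Info.Status.String()`. It uses only the standard library so the mapping can be checked in isolation.

```go
package main

import "fmt"

// healthStatus (hypothetical helper) maps a Helm release status string to
// the gauge value proposed in the review: 0 for "unknown" or "failed",
// 1 for every other status.
func healthStatus(status string) float64 {
	switch status {
	case "unknown", "failed":
		return 0
	default:
		return 1
	}
}

func main() {
	// Illustrative statuses; in the PR these would come from
	// release.Info.Status.String() for each listed release.
	for _, s := range []string{"deployed", "failed", "unknown", "pending-upgrade"} {
		// With the value computed once, a single klog call and a single
		// Set(value) call on the gauge would replace the duplicated branches.
		fmt.Printf("%s -> %v\n", s, healthStatus(s))
	}
}
```

Computing the value first keeps the logging and the gauge update in one place, so adding another unhealthy state later only touches the `switch`.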

The vendor files came from after I did

a8aa8ab to b06fdea

Signed-off-by: Allen Bai <abai@redhat.com>

b06fdea to bfd9094

@zonggen I see that the e2e Should be fixed in the PR so it's passing.

a928d49 to c6bfe9a

This is to mitigate the case when no helm release is present in the cluster and we want the metrics to get initialized. Reference: https://prometheus.io/docs/practices/instrumentation/#avoid-missing-metrics Signed-off-by: Allen Bai <abai@redhat.com>
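The idea in this commit message can be sketched as follows. This is an assumption-laden illustration, not the PR's implementation: `gaugeVec`, `labels`, `release`, and `record` are hypothetical stdlib-only stand-ins for a labelled Prometheus gauge and the Helm release type, and clearing earlier samples before each recording is an assumption as well. When no releases exist, a single zero-valued sample with empty label values is written so the metric is still exported.

```go
package main

import "fmt"

// labels stands in for the (releaseName, chartName, chartVersion) label tuple.
type labels struct{ release, chart, version string }

// gaugeVec is a hypothetical stdlib-only stand-in for a labelled gauge.
type gaugeVec map[labels]float64

// release is a hypothetical, simplified view of a Helm release.
type release struct{ name, chart, version, status string }

// record clears previous samples and writes one sample per release.
// With no releases it writes a single zero sample with empty labels,
// so the metric exists even on a cluster without Helm releases.
func record(g gaugeVec, releases []release) {
	for k := range g {
		delete(g, k)
	}
	if len(releases) == 0 {
		g[labels{}] = 0
		return
	}
	for _, r := range releases {
		v := 1.0
		if r.status == "unknown" || r.status == "failed" {
			v = 0
		}
		g[labels{r.name, r.chart, r.version}] = v
	}
}

func main() {
	g := gaugeVec{}
	record(g, nil)
	fmt.Println(len(g)) // one placeholder sample
	record(g, []release{{"my-release", "my-chart", "1.0.0", "deployed"}})
	fmt.Println(g[labels{"my-release", "my-chart", "1.0.0"}])
}
```

Clearing the map on each call keeps the placeholder sample from lingering once real releases appear.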

c6bfe9a to 9500219

/retest

@jhadvig Could you take a second look? The test case failed because the Reference: https://prometheus.io/docs/practices/instrumentation/#avoid-missing-metrics

    - apiGroups:
      - ""
      resources:
      - secrets


Why does the operator need to be able to fetch any secret from the cluster? This looks like a potential security issue. If we need it, we should rather create a Role for a specific namespace. On the other hand, I don't see any usage in the added code where we fetch a secret for the metrics.


The helm list --all-namespaces action from metrics.go requires this particular permission. If I remove this it would produce:

metrics.go:40] metric helm_chart_release_health_status unhandled: list: failed to list: secrets is forbidden: User "system:serviceaccount:openshift-console-operator:console-operator" cannot list resource "secrets" in API group "" at the cluster scope


This is pretty concerning because console-operator is effectively running as root on the cluster with this change. Is there any way to add the metrics without needing this permission?


Yeah this is a hard no. We won't escalate the console operator to have read on Secrets cluster-wide.


Understood, we are looking for alternative options to mitigate this. It won't be a full solution if we have to use the user's identity on the console side, but that is what we will pivot towards. For future reference, @spadgett and @jwforres: if we had a way to filter allow rules by resource type, for example "type": "helm.sh/release.v1", would that work to reduce the access escalation? I am trying to figure out whether to propose a change to Kubernetes RBAC upstream for this.

    func (co *consoleOperator) SyncConsoleConfig(ctx context.Context, consoleConfig *configv1.Console, consoleURL string) (*configv1.Console, error) {
        oldURL := consoleConfig.Status.ConsoleURL
        metrics.HandleConsoleURL(oldURL, consoleURL)
        helmmetrics.HandleHelmChartReleaseHealthStatus()


Why do we call `helmmetrics.HandleHelmChartReleaseHealthStatus()` inside the `SyncConsoleConfig` method? It's not using any of its arguments.

I would suggest either putting it directly into the main `sync_v400` method or creating a new controller.


Agreed, will move it to the main sync_v400. The original intent was to piggyback on the console_url metric. Also willing to add a new controller but that controller will only consist of this one line helmmetrics.HandleHelmChartReleaseHealthStatus().

    @@ -0,0 +1,90 @@
    package metrics


Let's put this into pkg/helm/metrics/helm_metrics.go so we can reuse functions like `recoverMetricPanic()` instead of duplicating them.


Moved to pkg/console/metrics/helm_metrics.go

    // Reference: https://github.com/helm/helm/issues/7430#issuecomment-620489002
    func getActionConfig() (*action.Configuration, error) {
        actionConfig := new(action.Configuration)
        var kubeConfig *genericclioptions.ConfigFlags


I don't think kubeconfig is the right name.

    var kubeConfig *genericclioptions.ConfigFlags
    var configFlags *genericclioptions.ConfigFlags


Also, why do we need to initialize `configFlags` separately, since you are setting it on line 72? Can't we just set it directly?

Signed-off-by: Allen Bai <abai@redhat.com>

Thanks for reviewing! Waiting for the tests before another look.

@jhadvig PR is ready for another look but the

/hold

44d2d57 to 696a192


Closing in favor of #601. For the record, we are shifting away from calling cluster-wide

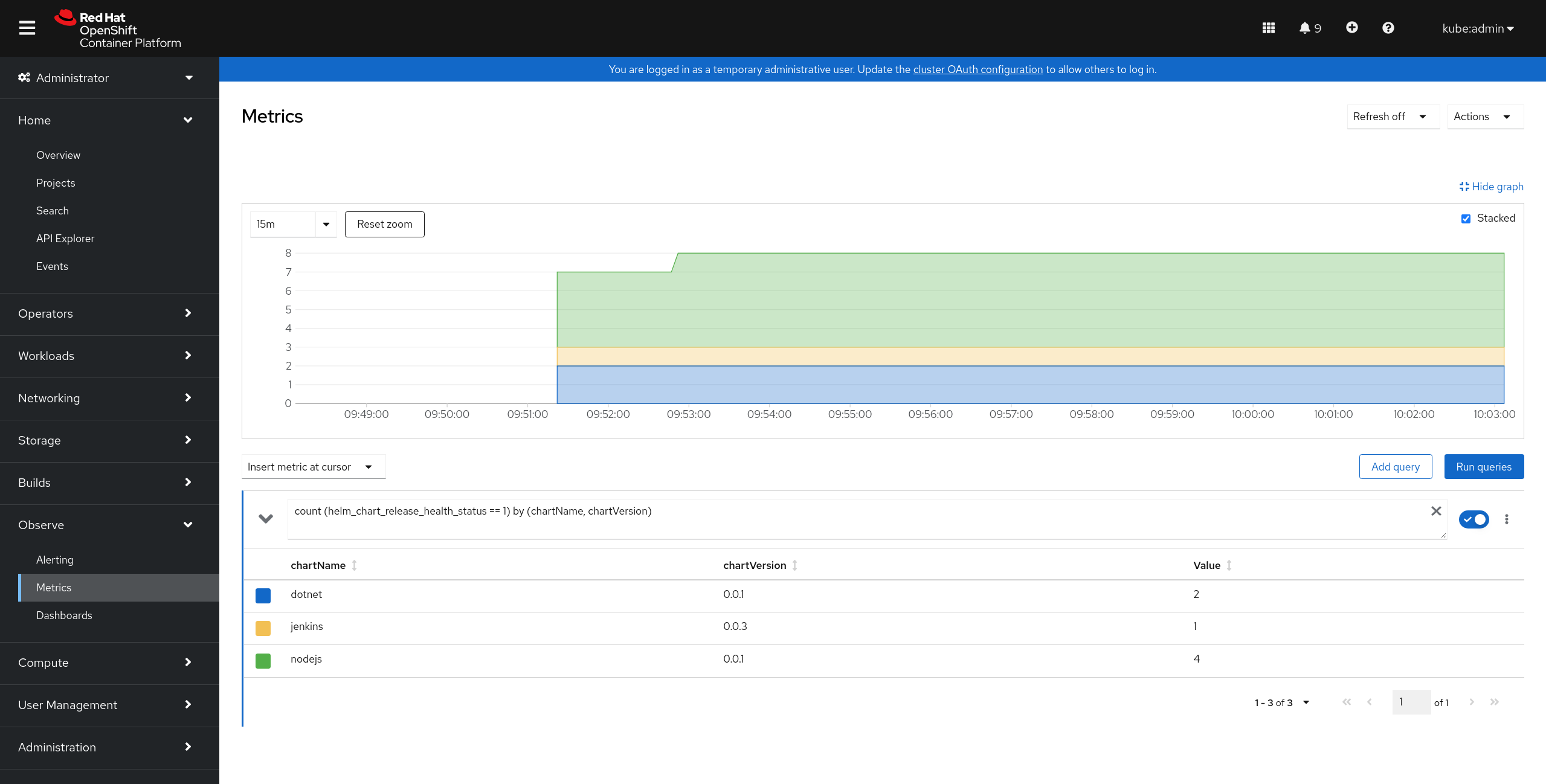

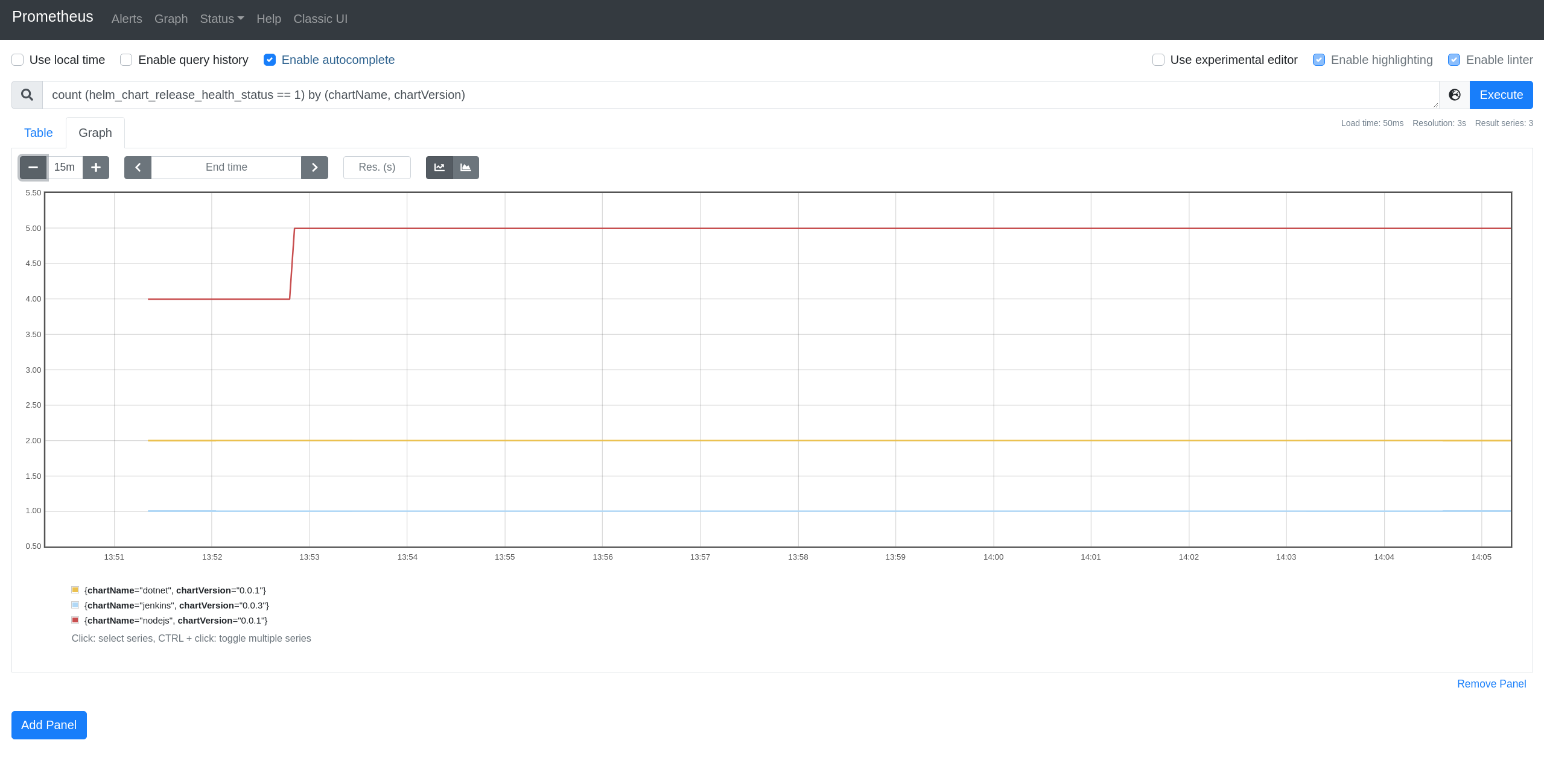

Adds a

helm_chart_release_health_statusgauge metric to monitor thestatus of the current helm releases.

To query the total number of healthy releases:

count (helm_chart_release_health_status == 1) by (chartName, chartVersion, releaseName).To query the total number of unhealthy releases:

count (helm_chart_release_health_status == 0) by (chartName, chartVersion, releaseName).From console UI:

From Prometheus UI:

Closes: https://issues.redhat.com/browse/HELM-224

Signed-off-by: Allen Bai abai@redhat.com