CONSOLE-2833: Integrate multicluster POC operator changes #575

TheRealJon wants to merge 1 commit into openshift:master-multi-cluster-feature from


Force-pushed from 21389eb to b42a98e.

/retest

Force-pushed from 493f7a6 to f750fd3.

jhadvig left a comment:

@TheRealJon thanks for the PR.

For the first review round, I would advise reverting all the example and manifest changes that only add extra tabs, so the actual changes are easier to read.

jhadvig left a comment:

Thanks for moving this logic into a separate controller. A couple of comments regarding the code.

Force-pushed from b02d6ad to bebe654.

/retest

/hold

/retest

Force-pushed from 7a3d007 to 80206a8.

/retest

/hold cancel

Failing tests seem unrelated to this PR:
- Agnostic upgrade (multiple)
- aws-operator
- aws-single-node

/retest

aws-operator failed tests:

/retest

Testing this PR

Prereqs

Testing

Most of the test conditions require grepping the console operator logs for specific output. Here's an example command that can be used when searching for expected log output:

Step 1: Spin up or use an existing spoke cluster

Provision the spoke cluster before the hub cluster so you don't waste up-time on your cluster-bot cluster (see the next step). You will need kubeadmin access to an existing (non-ACM-managed) cluster before step 2. You can get one by using cluster-bot, openshift-install, or an existing cluster.

Step 2: Spin up the hub cluster

Use cluster-bot to spin up a second cluster (the hub cluster) with #575. Once the cluster is up,

Step 3: Increase the console-operator log level on the hub cluster

Patch the console operator to increase the log level:

Test Conditions

Step 4: Install ACM on the hub cluster

Multicluster console requires some unreleased ACM API changes, so we need to install ACM using a 2.4.0 image.

Test Conditions

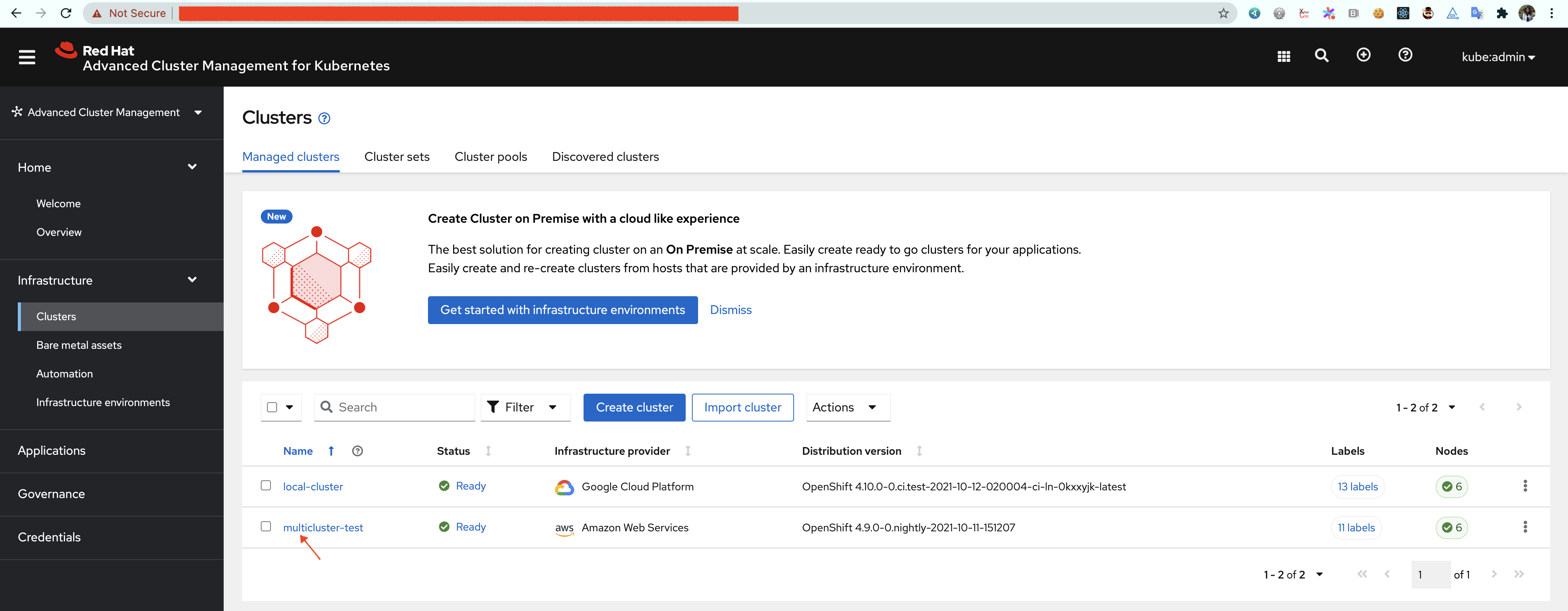

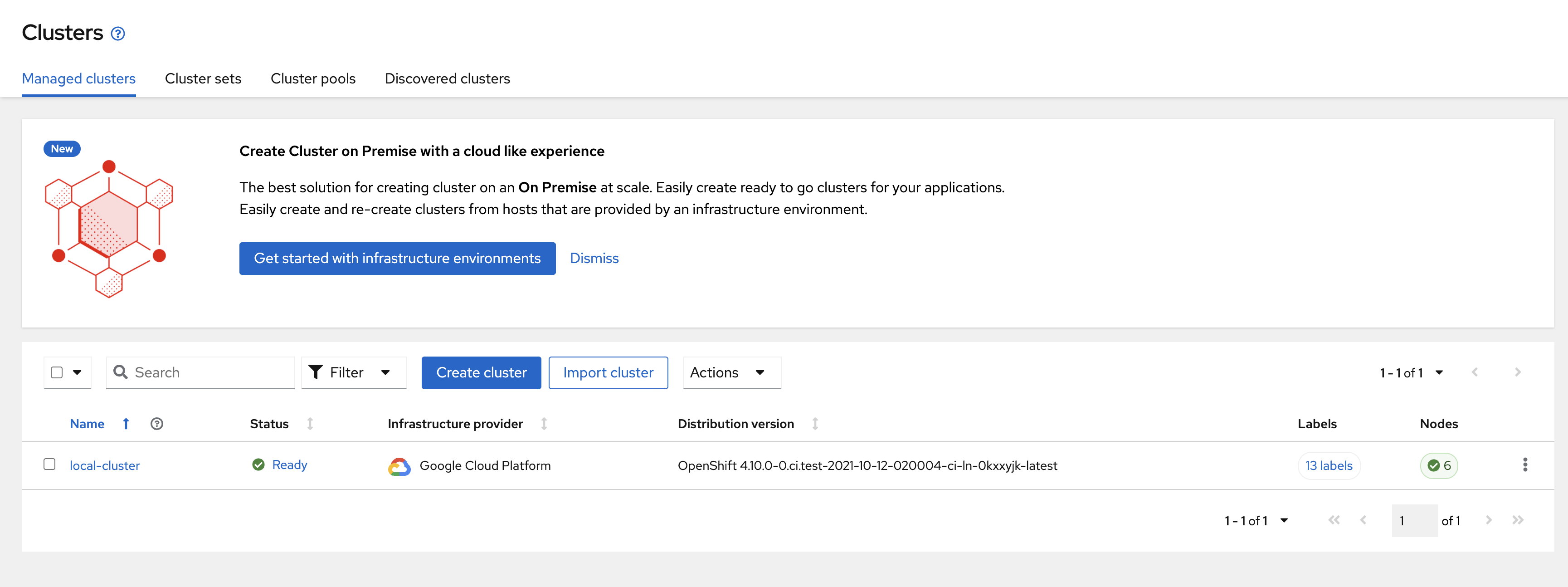

Step 5: Import the spoke cluster

Once ACM is up and running on the hub cluster, use either the ACM console or the CLI to import the spoke cluster from step 1. Name the imported cluster "multicluster-test".

Console instructions

Test Conditions

It may take a while for the cluster import to complete and for changes to propagate through the console operator, so you may have to run some of these commands multiple times to see the expected output.

Validation

As the import occurs, the console operator should validate the state of ManagedCluster resources and will log validation errors until the import is complete. When the ManagedCluster resource is first created, it will not have any

During the import process,

The console operator checks for the existence of the managed-clusters ConfigMap and logs a line when it is not found.
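A minimal Go sketch of the kind of check the validation step exercises, assuming the operator holds off on a ManagedCluster until `spec.managedClusterClientConfigs` contains an entry with both a URL and a CA bundle. The types are simplified local stand-ins for the open-cluster-management API types, and `validateManagedCluster` is a hypothetical helper, not the code in this PR.

```go
package main

import "fmt"

// ClientConfig is a simplified stand-in for one entry of the
// ManagedCluster spec.managedClusterClientConfigs list (url + caBundle).
type ClientConfig struct {
	URL      string
	CABundle []byte
}

// ManagedCluster is a hypothetical, trimmed-down view of the ACM resource.
type ManagedCluster struct {
	Name          string
	ClientConfigs []ClientConfig
}

// validateManagedCluster returns an error describing why the cluster cannot
// be reconciled yet, or nil when the first client config has both a URL and
// a CA bundle. Checking only the first entry is an assumption of this sketch.
func validateManagedCluster(mc ManagedCluster) error {
	if len(mc.ClientConfigs) == 0 {
		return fmt.Errorf("managed cluster %q: no client configs defined", mc.Name)
	}
	cfg := mc.ClientConfigs[0]
	if cfg.URL == "" {
		return fmt.Errorf("managed cluster %q: client config has no URL", mc.Name)
	}
	if len(cfg.CABundle) == 0 {
		return fmt.Errorf("managed cluster %q: client config has no CA bundle", mc.Name)
	}
	return nil
}

func main() {
	// Freshly created resource: the import has not populated client configs yet.
	pending := ManagedCluster{Name: "multicluster-test"}
	fmt.Println(validateManagedCluster(pending))

	// After import: URL and CA bundle are present, so validation passes.
	ready := ManagedCluster{
		Name: "multicluster-test",
		ClientConfigs: []ClientConfig{{
			URL:      "https://api.multicluster-test.example.com:6443",
			CABundle: []byte("-----BEGIN CERTIFICATE-----\n..."),
		}},
	}
	fmt.Println(validateManagedCluster(ready))
}
```

Returning a descriptive error rather than a boolean mirrors the test flow above, where each validation failure is expected to show up as a log line until the import finishes.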

Reconciliation

Once a valid client config is defined on the ManagedCluster resource, the console operator will begin reconciling the state on the cluster. Once everything is reconciled, two ConfigMaps should have been created in the

Expected YAML (omitting actual CA data):

To be thorough, you can compare the CA bundle data in this ConfigMap to the data found in the ManagedCluster resource:

Expected YAML:

Expected YAML (only showing the properties we are looking for):

- Still need feature gate and OAuth work for multicluster to be fully implemented
- Add managed cluster controller that watches ACM ManagedCluster resources and builds a config file that can be consumed by the console backend
- Update console deployment sync with new volumes and volume mounts for managed cluster config and CA bundles
- Add managed cluster and API server CA bundle ConfigMaps to subresources
- Update informer event filter utils
- Add github.com/open-cluster-management/api dependency

Force-pushed from 80206a8 to 864b40d.

[APPROVALNOTIFIER] This PR is NOT APPROVED. This pull request has been approved by: TheRealJon.

@TheRealJon: The following tests failed, say

/remove-label qe-approved

Hi @yapei! Thanks for looking at this! I will take a look at the things you pointed out. For the first issue, with the managed cluster showing an Available status of "Unknown": was this accompanied by unexpected behavior in the console operator, or was it just an unexpected ACM status? For the second issue, I think I have an idea of what's causing it, and it will need to be fixed, so thanks for catching this edge case!

@TheRealJon It is ACM that returns the 'Unknown' status (the CLI shows 'Unknown' as well), so I don't think it's a console operator issue. Sorry for the confusion.

Closing in favor of #602, which includes these changes.

We still need the work from @florkbr for the other part of this, which is to create the OAuthClients on spoke clusters and build the managed cluster OAuth configs.