Conversation

| if (!getTransform(data_->header.frame_id, data_->header.stamp, curr_time, tf_transform)) { | ||

| return; | ||

| if (!tf_transform_received) { | ||

| tf_transform_received = getTransform(data_->header.frame_id, data_->header.stamp, curr_time, tf_transform); |

There was a problem hiding this comment.

It might be good to keep the return here, if it was not received after trying

| /// @brief Latest data obtained from pointcloud | ||

| sensor_msgs::msg::PointCloud2::ConstSharedPtr data_; | ||

|

|

||

| // Transform from the data source frame to the robot base frame |

There was a problem hiding this comment.

you could add a comment here why this is cached and which assumption is made

|

Could you open a PR to upstream in parallel? I generally like this approach so that we get early feedback from the maintainers -> better than merging, opening a PR upstream, making changes, merging upstream and then getting these changes back into our fork. If they take too long too answer we won't wait of course |

tonynajjar

left a comment

tonynajjar

left a comment

There was a problem hiding this comment.

@HovorunB very nice find! Could you run a small experiment to confirm your hypothesis:

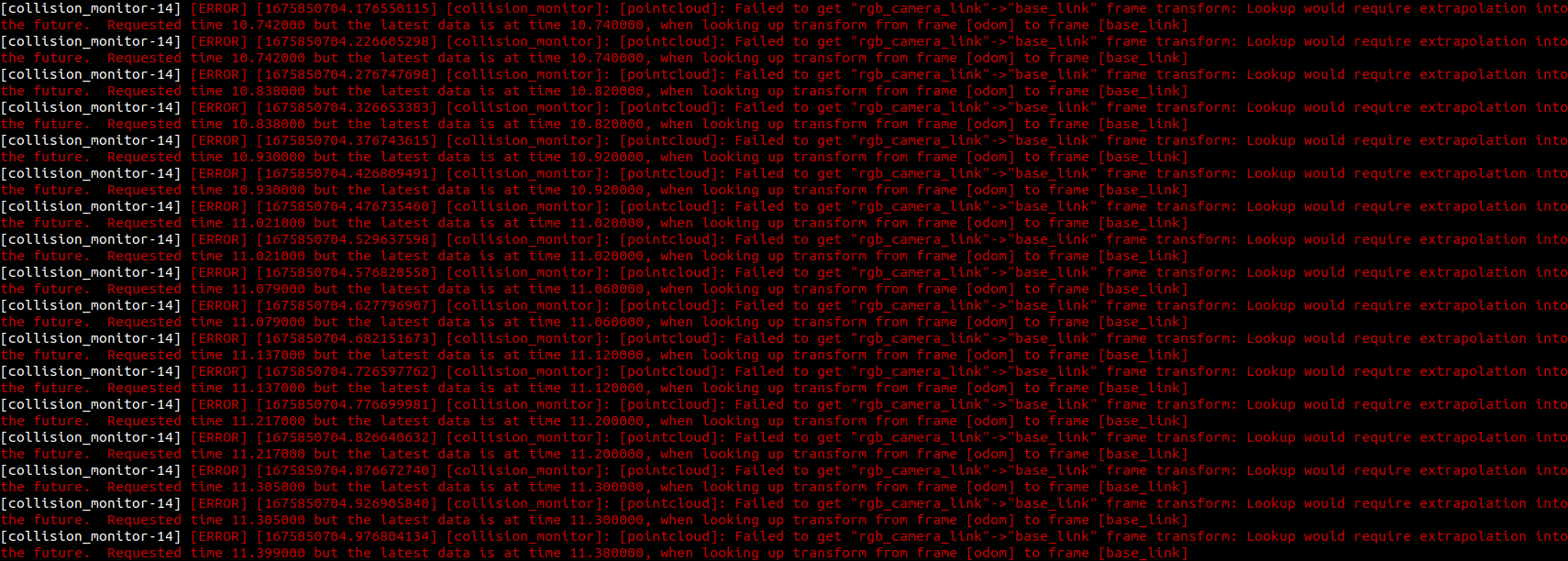

Set the transform_tolerance parameter to 0, I think this is where the delay comes from. You will likely get extrapolation error messages, but this would be useful to confirm and to see by how much.

As discussed, If we want to merge this urgently and it works, feel free but let's make a follow up ticket to get this fix upstream (and potentially change the fix for us)

@tonynajjar With our publishing frequency of 20hz the maximum we can allow is |

|

That's an interesting log, it says it fails to get camera_rgb_link -> base_link and then that it's because it fails to get odom -> base_link. It's likely because they use the Advanced API of lookup_transform. I'm not sure yet but I'm starting to think that this might not be a suitable solution, there must be a reason why we need odom -> base_link and by just saving the transform we skip that reason -> maybe you can figure out that reason? Now, knowing that odom->base_link is actually the issue, publishing odom at a faster rate could also fix the issue. Another interesting thing: I first thought this but looks like it's: "If I request a TF at a specific time but it's not available then wait I'm not 100% sure of what I'm saying yet, just sharing my thoughts. Would be cool if you can do this investigation and confirm or deny my hypothesis. |

Okay I think this is confirmed here It could be interesting to implement an argument to act like I first described 🤔 |

|

so, in our case, it computes rgb_link to map, then travels in map, then computes map to base_link. So, it traverses odom for which we don't have a transform yet. This all sounds way to complex given that in nearly all cases the TF between base_link and camera will be static anyway. |

|

Changed to "simple API of lookup transform", the frequency looks great. it is able to keep up and I get no extrapolation errors even when running it at 100hz |

|

nice, I think this is now a good candidate to merge and at the same time to ask for feedback from nav2 team |

Sure that will work as with the simple API you don't get odom->base_link, but there is probably a reason why they use the advanced API, e.g. to solve the same problem described here |

Ah sorry I just read @jplapp's answer here, ignore my previous comment until I check |

Alright sounds good to me, I still have to wrap my head around this advanced API.... But if the answer is: to account for a dynamic camera_link->base_link, then we have to think: 1- if we keep these changes |

|

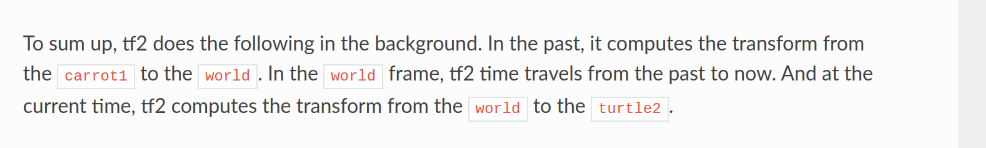

I think this new change does support a dynamic camera_link->base_link - it gets the transform between base_link and camera_link at the time of capture of the pointcloud and then projects the pointcloud into the baselink frame at the current time. (This is the simple example from the docs “What was the pose of carrot1 5 seconds ago, relative to turtle2 5 seconds ago?”). It seems like what we need here, if there would somehow be a moving camera on the robot. Honestly I don't understand what the advanced API in this case even tries to answer, but maybe we will know soon 😄 |

I think this is the problem they tried to solve what the advanced API, because we want to publish the pointcloud into the base_link frame at the time of capture and not at the current time. So I feel like it also affects our case with the static sensor. |

|

that makes sense! |

|

Follow up ticket: https://lvserv01.logivations.com/browse/AMRNAV-4141 |

Basic Info

Description of contribution in a few bullet points

The cmd_vel and velocity_smoother/cmd_vel topics have the frequency of 20hz. The collison_monitor/cmd_vel has 10hz in gazebo and ~16hz basement AMR. This is related to the collison_monitor waiting for the transform base_link->rgb_camera_link every time it receives input cmd_vel.

This was tested on basement AMR, collision monitor is able to keep up with 20hz frequency. The CPU usage by the collision monitor is around 25% while driving, and the logic still works