clean up azure temporary managed os disk #10713

Conversation

Codecov Report

|

|

cc @nywilken -- looks like you were working on these bits recently :) |

nywilken

left a comment

nywilken

left a comment

There was a problem hiding this comment.

@alexeldeib apologies for the delay here, and thank you for taking on this fix. I did touch the file last and have had some trouble figuring out the right path. So happy to see new eyes on the issue.

I gave your changes a test and they work as expected. I found it a little confusing at first to see us having to handle the deletion of the OS in the StepDeleteAdditionalDisk. But your comments in the description provided some insight.

While testing I ran into an issue with one of my templates and ultimately found that I made a mistake in #9559. I left a comment on the PR. After my findings I made a slight change to the original code to see if it would solve the problem as well and I think it does I would like to get your thoughts on it. I think we can improve things further in your PR to avoid potential bugs with the call to StepDeployTemplate#Cleanup.

Here is a gist with my changes https://gist.github.com/nywilken/de296af15f55fe3c8c2267735120321b

Look forward to reading your feedback.

|

|

||

| if isManagedDisk && !isExistingResourceGroup { | ||

| s.say(fmt.Sprintf(" -> Additional Disk : skipping, managed disk was used...")) | ||

| if isManagedDisk { |

There was a problem hiding this comment.

I think part of this confusion around where we can delete this disk is that I moved the call to NewStepDeleteAddtionalDisk into the StepDeployTemplate#Cleanup but in reality it should be its own thing.

While testing this today with a Windows OS build, non-managed, I was getting failures due to NewStepDeleteAddtionalDisk being called twice. After further investigation I found that for Windows builds NewStepDeployTemplate happens twice: one for the key vault deployment, and one for the virtual machine deployment. This issue only occurs for VHD builds.

Your solution for deleting the OS disk works, but still ends in a failed build because of the double call. I know what I'm pointing out is a different issue than the one you are fixing, but I believe they are related and if we move NewStepDeleteAddtionalDisk out of StepDeployTemplate#Cleanup then we can use your logic for deleting the OS disk or even the original code where we capture the os type and uri to be used for deletion in the cleanup function.

I moved NewStepDeleteAddtionalDisk into the Deployment cleanup step because it was previously being called after the Publish to SIG step, and if that failed the deletion would not happen. So my thought was to ensure the cleanup always happens where they are created - in StepDeployTemplate.

Here's a gist of the template I am using.

There was a problem hiding this comment.

Ah, nice! That takes care of the ordering issue between the cleanup steps very cleanly. I'll incorporate your patch and give it a try on my end 👍

Co-authored-by: Wilken Rivera <dev@wilkenrivera.com>

Co-authored-by: Wilken Rivera <dev@wilkenrivera.com>

|

Hi @alexeldeib, did the changes work as expected for you? I see you applied the path I sent your way. I posted a link to the binaries with the potential fix on the reporting issues for additional testing. If the patch is working as expected I think we can clean up the tests and see about adding an acceptance test that validates that all resources have been deleted. Let me know what you think. |

|

Sorry for the delay! Yep, things are working well for me now. I still need to update the tests and then I think we will be good to go? |

|

I added a few unit tests, is something like that what you had in mind? I hit some null refs in the test setup as well, so tweaked the step a bit further.

are there some other e2e/integration tests you were thinking about? or more sophisticated mocking/resource tracking here? I saw https://github.com/hashicorp/packer/tree/master/test but those looked fairly minimal (albeit missing an azure version). What are you thinking 🙂 ? From the report in the other issue, we still should wait a bit anyway to validate this does what's expected. It seems to for me, and I tested both linux/win and temp/pre-existing resource group before the last commit. |

|

there was a bit of a gotcha. we skip cleaning up the template resources if packer created the resource group, because we'll delete the resource group. however using unmanaged disks creates a copy not in the temporary RG, but in the storage account for the images. this makes it difficult to run as a separate step, but we could unconditionally always clean up the individual resources in packer? It shouldn't actually be much worse than what Azure does internally for RG delete -- fire off deletes with retries on each of the individual resources, basically ignoring any dependencies. Then when we actually do the RG delete in the last step, it should be nearly immediate. This should have the added benefit of reducing the number of times the RG delete times out, which I see surprisingly often. |

That sounds like a big win |

nywilken

left a comment

nywilken

left a comment

There was a problem hiding this comment.

@alexeldeib awesome work. Thank you for going so deep and for the extra info on the temp resource group. I left a small suggestion and a question. But this is otherwise good and it appears to have resolved the open issues.

Re:testing I was thinking of adding an acceptance test like

with a Check that we could use to validate that all of the resources for a deployment, including the OS disk, have been deleted. But I dont know how feasible that is since some of the items are deleted async. I also don't have insight on the effort to track all the items created and store them so that we can compare in the check.

| step.delete = step.deleteDeploymentResources | ||

| step.disk = step.getImageDetails | ||

| step.deleteDisk = step.deleteImage | ||

| step.deleteDeployment = step.deleteDeploymentObject |

There was a problem hiding this comment.

Nice add. The name provides more details for sure.

Co-authored-by: Wilken Rivera <dev@wilkenrivera.com>

|

@nywilken what do you think of the approach in this commit for acceptance checks? It's not pretty, and pulls out some of the private internals of the builder, but it seems to work okay. I wanted to see if this makes sense before adding anything else (or maybe just leave it here for this PR). I didn't see a good way to track resources deployed in the template and verify their deletion at the end, but we generally have enough information between the test case itself, and the builder internals to handle manually validating that XYZ resources were deleted. The main ones I think worth checking would be removing the VHD from the storage account, since it's not in the same resource group, and doing proper cleanup when using a pre-existing RG. I added the former, but not the latter. |

|

(also happy to rebase and clean up the history before merging, just wanted to give you a chance to review the diffs) |

| } | ||

|

|

||

| var wg sync.WaitGroup | ||

| wg.Add(len(resources)) |

There was a problem hiding this comment.

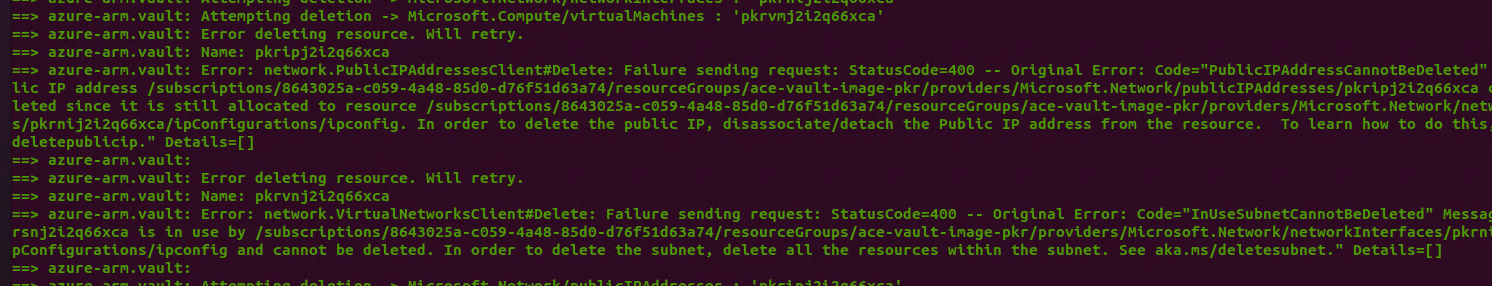

soo funny story. without the synchronization, Azure was returning the deployed resources in an order that happened to Just Work(TM) for deletion, incidentally ordering dependencies nicely.

Adding this basically replicates what Azure does internally, but it does make you more likely to see some transient deletion failures during cleanup...I hope that's an acceptable compromise? The alternative would be sorting by resource type to make a semi-DAG of dependencies, which feels like a bit bigger change

There was a problem hiding this comment.

(Incidentally, that means this logic was always racy and potentially broken, but somehow the likelihood of triggering it in a broken way was fairly low when doing serial deletion)

nywilken

left a comment

nywilken

left a comment

There was a problem hiding this comment.

One extra quote, but otherwise good to go. If you can fix and rebase I will merge immediately.

--- PASS: TestBuilderAcc_Blob_Linux (340.37s)

--- PASS: TestBuilderAcc_Blob_Windows (476.38s)

PASS

ok github.com/hashicorp/packer/builder/azure/arm 476.398s

Thanks again for the great work here.

|

|

||

| "storage_account": "{{user ` + "`storage_account`" + `}}", | ||

| "resource_group_name": "packer-acceptance-test", | ||

| "resource_group_name": "packer-acceptance-test"", |

There was a problem hiding this comment.

One extra quote

| "resource_group_name": "packer-acceptance-test"", | |

| "resource_group_name": "packer-acceptance-test", |

|

sorry for all the last minute changes -- I think I'm actually done 😄 that bit with the deletion ordering threw me for a loop. |

|

Results with the latest changes 👍 Thanks again for such a great and detailed PR. |

|

I'm going to lock this issue because it has been closed for 30 days ⏳. This helps our maintainers find and focus on the active issues. If you have found a problem that seems similar to this, please open a new issue and complete the issue template so we can capture all the details necessary to investigate further. |

related to #9559

Closes #10268

Closes #10686

Closes #10416

Closes #9615

testing was slightly awkward. I tried re-introducing the code from #9559, but it seems because the VM cleanup doesn't happen until the DeployTemplate clean up step, we need to do it ~around the same time as deleting disks (after the VM has been deleted). I also tried running it as a separate step after Additional Disk cleanup, but that seemed to move error handling that should belong in the core builder?

this is necessary any time you create a VM with managed disk without creating it separately. It won't be deployed as part of the template, but WILL be created as a dependency of the VM, so you have to delete it after creating the VM.

we don't need this when the disk is in a temporary RG, since we delete the whole RG.

this is probably also necessary for VHD builds, actually? but i'm less sure about that. I'll test one out when I have a chance.