Split slow ecosystems across multiple CI jobs#5568

Conversation

The python tests are too damn slow. This is something that we _should_ fix at the core, by making them faster, but until we do that, let's not waste precious time waiting on those tests to pass, let's throw more computers at the problem. This is a relatively crude approach, but it should result in stable reruns. There's probably a way to make this cleaner by moving it into a rake task or something, so we can reuse it for more ecosystems. I'll leave that as an exercise for someone else that feels strongly about this.

There's probably a better way to exclude ruby scripts, or maybe move it to a rake task/different directory

0e93dc3 to

dcc484c

Compare

|

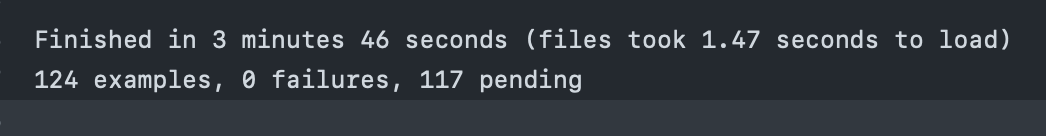

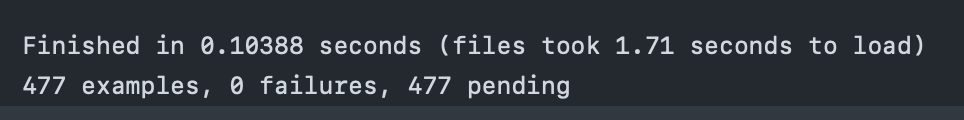

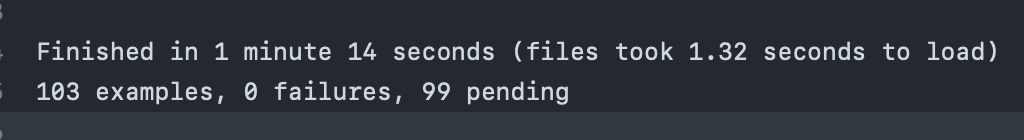

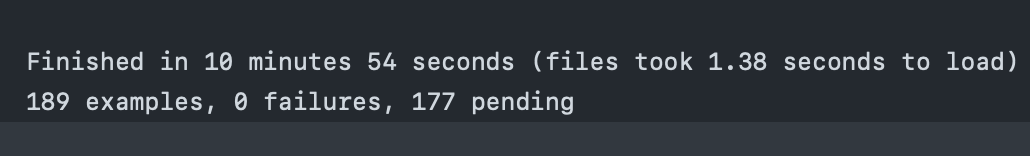

Based on just glancing at the test speeds in the actions outputs of a few recent builds, I would recommend we do this for a few more suites:

At that point it might be worth looking if we can somehow reuse the docker images across all those suites instead of building from scratch for each one, but previous experimentation has shown that building for every job was slightly faster because copying the image from storage was relatively slow. It's a lot of wasted compute though, and might have gotten faster?

|

|

Nice work, @jurre! Adding some links for posterity:

|

|

I'm seeing some pretty big variation between what's being run. I wonder if we could sort the tests being run into buckets before shuffling (though I understand that introduces some brittleness), so that we could make sure they're somewhat evenly distributed? Suite 0Tests run: 7 Suite 1Tests run: 0 Suite 2Tests run: 4 Suite 3Tests run: 12 Suite 4Tests run: 0 |

|

@landongrindheim I can't think of a way to do it easily, we'd have to keep track of every files average runtime somewhere or manually tag the files which is annoying and very easy to forget when adding new files. In some runs I've seen the distribution be better, but it is indeed unpredictable with this approach. The But with all those approaches you always keep running into the fact that some files are just much slower than others, and you could keep track of every test individually and try to match them up as best as possible etc, but it seems very involved. There is a service that offers something like this: https://knapsackpro.com/, but I'm not sure if we can get it approved or if it's even worth it. If you have an idea on how to do it, I'd suggest we put it on a backlog somewhere to pick up relatively soon but move forward with this approach for now. Let's not make perfect the enemy of better here |

|

The |

|

Something else I'm thinking, related to @landongrindheim's comment, is: do we need the shuffling at all? We cannot yet balance this properly, but maybe we can at least make test times stable by always running the same files on each runner? |

landongrindheim

left a comment

landongrindheim

left a comment

There was a problem hiding this comment.

Nice work on this @jurre!

I think the conversation that's coming out of this change is definitely worth having, but I'd be disappointed if we hesitated on shipping this improvement because we hoped we could do better.

Let's keep talking about further improvements while benefiting from this one ![]()

Yeah, and with Another thing I hope to look at soon is figuring out how much time in those tests is spent installing a new version of python, listing those versions out somewhere and preinstalling them in the CI image. That way we can cache them in between many runs. There must be more things like that where we can improve the actual runtime of the tests themselves, which seems like that would be the ultimate fix, but it's much more time consuming. |

Absolutely, my suggestions are definitely not blockers for shipping this, it's an awesome improvement already! |

Yeah we can try it and see if it happens to be a good distribution :D |

|

It's going to change over time anyways, but I think it's better to have something stable (even if there's an unlucky machine that doesn't run anything like the example copied above) than something unstable. |

|

Just for future reference, apparently parallel_tests also supports this exact use case: https://github.com/grosser/parallel_tests/wiki/Distributed-Parallel-Tests-on-CI-systems. By default it distributes tests by file size. |

Ah that's an interesting option, we should try it, I think it should work out of the box |

1e00893 to

081b854

Compare

deivid-rodriguez

left a comment

deivid-rodriguez

left a comment

There was a problem hiding this comment.

I noticed that required jobs are pending because all required job names are now changed. I left an idea that I'm not sure it works, but would leave us with more readable job names, and without needing to change all required jobs in UI configuration manually.

@jurre just an FYI, when I was experimenting with arm image builds, I did just that, build image only once and transfer via github artifacts. The image is pretty beefy, it takes roughly 6 minutes to upload the image tar to gh artifacts and something like 30 seconds to download it in the next job. It is also possible to simply publish dev docker images in to the registry, it will definitely be faster since it will allow reusing existing layers but will create a fair amount of dev tags bloat. Some kind of cleanup setup will be required. |

d94d9b6 to

8eb45b6

Compare

8eb45b6 to

f13ba46

Compare

|

It seems a bit slower using Now that it's this easy, should I split up a couple other ecosystems as well? |

048562f to

09e997e

Compare

| - { path: python, name: python_slow, ci_node_total: 5, ci_node_index: 3 } | ||

| - { path: python, name: python_slow, ci_node_total: 5, ci_node_index: 4 } | ||

| - { path: pub, name: pub, ci_node_total: 2, ci_node_index: 0 } | ||

| - { path: pub, name: pub, ci_node_total: 2, ci_node_index: 1 } | ||

| - { path: terraform, name: terraform, ci_node_total: 1, ci_node_index: 0 } |

There was a problem hiding this comment.

Please forgive my YAML syntax ignorance 😇

Is it possible to use space inside these curly braces to align these arguments? Ex:

- { path: pub, name: pub, ci_node_total: 2, ci_node_index: 1 }

- { path: terraform, name: terraform, ci_node_total: 1, ci_node_index: 0 }It'd make it easier for me to read.

There was a problem hiding this comment.

The spaces should be perfectly fine IIRC. However, in VS Code, I tend to just right-click in YAML files and select "format document" and it auto-spaces things... whatever it spits out might be a sensible default?

Also, I filed:

As I've been bit before by unexpected yaml issues.

| # NOTE: Don't use `if` branches without `else` part, since the code in some of | ||

| # then seems to not abort the script regardless of `set -e` | ||

|

|

||

| # Should we only run these on one of the CI_NODE_INDEX's? |

There was a problem hiding this comment.

Is this a future TODO that should be merged in or does it need addressing/removing before merge?

There was a problem hiding this comment.

I was treating it as a future TODO. Should be easy to tackle, but I don't really have more spare cycles to burn on this

CI specs run using a single core even when Examples:

|

The python tests are too damn slow. This is something that we should

fix at the core, by making them faster, but until we do that, let's not

waste precious time waiting on those tests to pass, let's throw more

computers at the problem.

This splits the tests up across multiple CI jobs using the

parallel_testgem.You'll notice the builds are still all fairly slow in this PR, but that's because the docker build step isn't being cached here, because we've passed some extra arguments to that step. It will be once this PR is merged.

You'll also see a few required checks now no longer pass, because their names have been changed. I'll update those once this PR is accepted.

There's a few other suites that take more than 5 minutes as well, so I've opted to also split those up.