
DQN solution results peak at ~35 reward #30

Comments

|

I've been seeing the same and I haven't quite figured out what's wrong yet (debugging turnaround time is quite long). I recently implemented A3C and also saw results peak earlier than they should. The solutions share some of the code so right now my best guess is that it could be something related to the image preprocessing code or the way consecutive states are stacked before being fed to the CNN. Perhaps I'm doing something wrong there and throwing away information. If you have time to look into that of course it'd be super appreciated. |

|

I saw the same on A3C after 1.9 million steps, and attributed it to the fact that I only used 4 learners (2 are hyper-threads, so only 2 real cores). In the paper, the training is orders of magnitude slower due to less stochasticity from fewer agents. The variance remained huge too, and it plateaus, maybe too quickly, at a score of about 38. I started reviewing DQN first (though I got a high-level view of how A3C is implemented too and will come back to it later), and have asked some dumb questions so far, as I was seeing the same level of performance. One thing that would help diagnose performance is building the graph so we can inspect it in TensorBoard. Other than that, it could be many things. The most impactful is exactly how the backprop is behaving, and (for me) whether the Gather operation and the way the optimization is requested result in exactly what is wanted. Probably they do, but that's where I am looking now. |

|

Thanks for helping out.

If the backprop calculation was wrong I think the model wouldn't learn at all. It seems unlikely that it starts learning but bottoms out with an incorrect gradient. Unlikely, but not impossible. It's definitely worth looking into, but it'd be harder to debug than looking at the preprocessing. A simple thing to try would be to multiply with a mask matrix (a one-hot vector) instead of using the gather operation. Adding the graph to TensorBoard should be simple: it can be passed to the saver that is created in the code. |
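To make the mask idea concrete, here is a minimal NumPy sketch (not the TensorFlow code from dqn.py) comparing gather-style indexing with a one-hot mask. The array values and the names `q_values` and `actions` are made up for illustration.

```python
import numpy as np

# Hypothetical batch: Q-value predictions for 3 states and 4 actions.
q_values = np.array([[1.0, 2.0, 3.0, 4.0],
                     [0.5, 0.1, 0.9, 0.3],
                     [7.0, 6.0, 5.0, 4.0]])
actions = np.array([2, 0, 3])  # index of the action taken in each state

# Gather-style selection: index into the flattened predictions.
num_actions = q_values.shape[1]
flat_indices = np.arange(len(actions)) * num_actions + actions
q_gather = q_values.reshape(-1)[flat_indices]

# One-hot mask selection: zero out the non-taken actions, then sum each row.
mask = np.eye(num_actions)[actions]
q_masked = np.sum(q_values * mask, axis=1)

print(q_gather)
print(q_masked)
```

Both approaches pick out the same per-row value, so the forward pass is identical. Swapping one for the other is mainly a way to rule out an indexing bug.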

|

Thanks for the responses. So I manually performed some environment steps and displayed their preprocessed forms. An example is below. It looks OK to me. The paper also mentions taking the max of the current and previous frames for each pixel location in order to handle flickering in some games. It seems to be unnecessary here since I didn't observe the ball disappearing between one step and the next. I'm happy to check out the stacking code next. Looking at TensorFlow's Convolution documentation, is it possible that information is being lost by passing the state histories to |
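For reference, the per-pixel max over two consecutive frames mentioned above could look roughly like this in NumPy. The toy frames and the helper name `max_over_frames` are assumptions for illustration, not part of the repo's preprocessing code.

```python
import numpy as np

def max_over_frames(previous_frame, current_frame):
    """Element-wise maximum of two consecutive frames of the same shape."""
    return np.maximum(previous_frame, current_frame)

# Toy 2x3 grayscale frames: the bright "ball" pixel (255) is only
# rendered in the previous frame, as happens with flickering sprites.
prev = np.array([[0, 255, 0], [10, 10, 10]], dtype=np.uint8)
curr = np.array([[0, 0, 0], [10, 10, 10]], dtype=np.uint8)

merged = max_over_frames(prev, curr)
print(merged)
```

The merged frame keeps the ball even though it is missing from the current frame. If the ball never disappears between steps, the max changes nothing.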

I think you are right in that the history is being reduced to one representation after the first convolutional layer, but I think that's how it's supposed to be - You want to combine all the historic frames to create a new representation. If you used I do agree that it seems likely that something is wrong in the CNN though... It could be a missing bias term (there is no bias initializer also in the fully connected layer), wrong initializer, etc.. |

|

I just looked at the paper again:

In my code the final hidden layer does not have a ReLU activation (or bias term). Maybe that's it? |

|

Nice catch. That does seem like it would limit the network's expressiveness. Unless you already have something running, I'll go ahead and fire up an instance and give it a shot with ReLU on the hidden fully connected layer. Can also add biases to both fully connected layers (probably initialized to zero?). |

|

I'm not running it right now so it'd be great if you could try. In my experience the biases never helped much but adding them won't hurt. Zero-initialized should be fine. |
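A rough NumPy sketch of the proposed change: ReLU on the hidden fully connected layer, plus zero-initialized biases on both fully connected layers. The layer sizes and the `dense` helper are toy assumptions, not the real network from dqn.py.

```python
import numpy as np

def dense(x, weights, bias, activation=None):
    """Fully connected layer: x @ W + b, optionally followed by ReLU."""
    out = x @ weights + bias
    if activation == "relu":
        out = np.maximum(out, 0.0)
    return out

rng = np.random.default_rng(0)
batch, flat_dim, hidden_dim, num_actions = 2, 8, 5, 4  # toy sizes only

features = rng.normal(size=(batch, flat_dim))  # stand-in for flattened conv output
w_hidden = rng.normal(scale=0.1, size=(flat_dim, hidden_dim))
b_hidden = np.zeros(hidden_dim)                # zero-initialized bias
w_out = rng.normal(scale=0.1, size=(hidden_dim, num_actions))
b_out = np.zeros(num_actions)                  # zero-initialized bias

hidden = dense(features, w_hidden, b_hidden, activation="relu")  # ReLU on hidden layer
q_values = dense(hidden, w_out, b_out)  # linear output: one Q-value per action
```

The output layer stays linear because Q-values can be negative.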

The first (a) is regarding the Gather operation instead of the one-hot (that you mentioned, Denny); the second (b) is about asking the optimizer to treat variables in relation to multiple different examples (instead of variables we want fixed across an entire mini-batch, which is usually how it will "think": the same rules apply to the entire mini-batch).

a) The Gather operation works very differently from a one-hot. Gather tells the optimizer to completely disregard the impact on the actions not taken, as if they didn't affect the total cost. That results in updates that ignore the effort already spent fine-tuning the other outputs, even though in the next state one of them may be the right action and we want Q to provide a good estimate of those state-action values. With Gather (instead of a one-hot), the optimizer reduces the cost for one action without balancing the need to leave the value estimates for the other actions unchanged. So, regardless of how Gather works, a one-hot is needed so that when the optimizer looks at the derivatives, it takes the other actions' cost into account and tries to find the direction that lowers the current action's cost while affecting the other action values (i.e. the current output values) as little as possible.

b) The other point is regarding the mini-batch. I am not sure how TensorFlow's mini-batch works, but it may not be designed to have variables corresponding to different examples within a single batch. Put another way, I think mini-batching assumes that the set of variables being optimized does not change between examples within a batch. One way to see it is that the optimizer always works across the trainable variables, and the list of variables that minimize takes as an argument is most likely expected to apply to every example in the mini-batch. E.g. you may be optimizing only x1 and not x2, but it may not work if you want to optimize x1 in the first example of the batch and then x2 in the second example. I only suspect this must be so, since in many other contexts the mini-batch is derived from a cost function that is static (not fixing vars across the batch). I hope I am not saying something too stupid; if so, apologies.

Bottom line: reward and gradient clipping (I just noticed TensorFlow makes that easy to implement), ending the episode when a life is lost, using a one-hot instead of gather, and ReLU at the final fully connected layer may, combined, boost learning significantly, both here and in whatever carries over to A3C (which I care about the most). |

I just edited my response, which was wrong. I really don't know how conv usually combines the channels. The simplest case is averaging; in CS231n they say sum, as do many other resources. The history isn't necessarily lost, and will be spread across the filters. |

|

Unfortunately, it seems to have plateaued again. Currently at episode 3866. Here's the code I used: |

|

Is something like this possible? If the action is not taken, set the target value to be equal to the current Q prediction for that action. For the (one) action taken, set the target y value to be our target value q(s',a'). Or does it need a one-hot? If possible, then self.y_pl is filled with the TD target we calculated (using s',a') where the index corresponds to the action taken, and all other y targets (actions not chosen) are filled with just the predicted value from self.predictions (same value in Q vs target y). Then just: |
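A minimal NumPy sketch of that idea, with made-up values. The names `predictions`, `actions` and `td_targets` are illustrative and not taken from dqn.py. It builds a full target vector per state and compares the squared error against the error computed on the taken action only.

```python
import numpy as np

predictions = np.array([[1.0, 2.0, 3.0],
                        [0.5, 0.1, 0.9]])  # Q(s, .) for 2 states, 3 actions
actions = np.array([1, 2])                 # action taken in each state
td_targets = np.array([2.5, 0.4])          # TD target for the taken action

# Full target vector: copy the predictions, then overwrite only the
# entry of the action that was actually taken.
rows = np.arange(len(actions))
y_full = predictions.copy()
y_full[rows, actions] = td_targets

# Squared error over all actions vs. squared error on the taken action only.
loss_full = np.sum((y_full - predictions) ** 2, axis=1)
loss_taken = (td_targets - predictions[rows, actions]) ** 2

print(loss_full)
print(loss_taken)
```

The two losses coincide because the non-taken actions contribute an error of exactly zero. Assuming the copied predictions are treated as constants, the gradient with respect to those outputs is zero as well, so this formulation should behave the same as selecting only the taken action.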

|

Thanks for the comments. Here are a few thoughts:

Good point. Likely to make a difference for some of the games.

This won't make a difference in learning ability, it'll only help you avoid exploding gradients. So as long as you don't get NaN values for your gradients this is not necessary. It's easy to add though.

Really good point and may be the key here. This could make a huge difference. I looked at the paper again and I actually can't find details on this. Can you point me to it?

I don't think this is right. It is correct to only take into account the current action. Remember that the goal of the value function approximator is to predict the total value of a state-action pair,

I'm not sure if I understand this point. Can you explain more? However, I'm pretty confident that the optimization is right. So I think it may be worth looking at the reward structure of the environment and what they do in the original paper... I'll try to refactor the code on the weekend to look at the gradients in Tensorboard. That should give some insight into what's going on. |

|

Denny,

In the paper, under Methods, Training, they mention this:

I've seen in some forums that they take this for granted, and they tried it in at least Breakout and it made a large difference. SKIP THIS - It's just background on how I was confused... Regarding the optimization, thanks for taking the time to explain. I highly appreciate it, and will sit on it and try to become more familiar with the concepts. My comment was inspired by a comment from Karpathy, who implemented REINFORCE.js, and whose page literally mentions under Bell & Whistles, Modeling Q(s,a):

But I see how I misread it. It confuses me a bit that having 10 outputs can be the same as having 10 networks dedicated to each action (not that both don't work, but how the 10 outputs can be as expressive). I am also probably mixing things up, such as when doing classification with softmax: we set labels for all classes, the wrong ones we send closer to 0 and the one that was right towards 1. So it trains the network not only to output something closer to 1 for the right label, but also trains the other labels to be closer to 0. In this case (DQN) I thought similarly, that our "0" for the other actions was whatever value the function estimates (which is not relevant in the current state, but which we don't want to change much because those actions were not taken). Thus I thought the equivalent of "0" was to set them to the value the function estimates, and the "1" was the cost we actually calculate for the action taken. I know I am still pulling my hair, ... :-) |

Hm, but that doesn't mean the end of an episode happens when a life is lost. It could also mean that they use the life counter to end the episode once the count reaches 0. It's a bit ambiguous. It's definitely worth trying out. |

|

I'm having a hard time wrapping my head around the impact of ending an episode at the loss of a life considering that training uses random samples of replay memory rather than just the current episode's experience. Starting a new episode is not intrinsically punishing, is it? A negative reward for losing a life is obviously desirable though. I'd love to be wrong because this would be an easy win if it does make a difference. |

|

On another note, I manually performed a few steps (fire, left, left) while printing out the processed state stack one depth (time step) at a time, and it checks out. The stacking code looks good to me. |

|

@nerdoid I'm not 100% sure either, but here are some thoughts:

I know it's a bit far fetched of an explanation and I don't have a clear notion of how it affects things mathematically, but it's definitely worth trying. |

|

@fferreres I think your main confusion is that you are mixing up policy based methods (like REINFORCE and actor critic) with value-based methods like Q-Learning. In policy-based methods you do need to take all actions into account because the predictions are a probability distribution. However, in the DQN code we are not even using a softmax ;) |

|

Just to clarify the above: By not ending the episode when a life is lost you are basically teaching the agent that upon losing a life the visual state is reset and it can accumulate more reward in the same episode, starting from scratch. In a way you are even encouraging it to get into states that lead to a loss of life, because it learns that these states lead to a visual reset but not the end of the episode, which is good for getting more reward. So I do believe this can make a huge difference. Does that make sense? |

|

Ahhh, yeah that makes a lot of sense. Thanks. I wonder then about the relative impact of doing a full episode restart vs. just reinitializing the state stack and anything else like that. Either way it's worth trying. Although I do still have this nagging feeling that the environment could just give a large negative reward for losing a life. Is that incorrect? |

I think the key is that the targets are set to 0 when a life is lost, i.e. this line here: So when a life is lost

It could. I agree this should also improve things but the learning problem would still be confusing to the estimator. The Q value (no matter if negative or positive) depends on how many lives you have left, but that information is nowhere in the feature representation. |
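To illustrate the point about targets, here is a hedged NumPy sketch of a Q-learning target where the bootstrapped term is dropped for terminal transitions. The function name `td_targets`, the discount of 0.99 and the sample values are assumptions for illustration, not the actual line from dqn.py.

```python
import numpy as np

def td_targets(rewards, next_q_values, dones, discount=0.99):
    """Q-learning targets: r + discount * max_a' Q(s', a'), with the
    bootstrapped term zeroed out when the transition is terminal."""
    max_next_q = next_q_values.max(axis=1)
    not_done = 1.0 - dones.astype(np.float64)
    return rewards + not_done * discount * max_next_q

rewards = np.array([1.0, 0.0])
next_q = np.array([[2.0, 3.0],
                   [5.0, 4.0]])
dones = np.array([False, True])  # second transition: life lost, treated as terminal

targets = td_targets(rewards, next_q, dones)
print(targets)
```

For the second transition the target collapses to the immediate reward, so no future value is credited to the state that led to losing a life.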

|

I added a wrapper: 18528d0#diff-14c9132835285c0345296e54d07e7eb2 I'll try to run this for A3C, would be great if you could try it for Q-Learning. Crossing my fingers that this fixes it ;) |
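For readers who can't open the commit, a minimal sketch of what such a wrapper might look like. This is not the code from the linked commit: `FakeLivesEnv` is a stand-in environment, the `get_lives` callback is a hypothetical hook (with a Gym Atari env it would presumably read the ALE life counter), and the old 4-tuple `step` return is assumed.

```python
class FakeLivesEnv:
    """Stand-in environment (hypothetical): loses one life every 3 steps."""

    def __init__(self, lives=2):
        self.start_lives = lives
        self.lives = lives
        self.steps = 0

    def reset(self):
        self.lives = self.start_lives
        self.steps = 0
        return 0  # dummy observation

    def step(self, action):
        self.steps += 1
        if self.steps % 3 == 0:
            self.lives -= 1
        done = self.lives == 0
        return self.steps, 1.0, done, {}


class LifeLossAsTerminal:
    """Reports done=True whenever the life counter drops.

    The underlying game is not reset here; only the flag seen by the
    agent (and stored in replay memory) changes.
    """

    def __init__(self, env, get_lives):
        self.env = env
        self.get_lives = get_lives  # callable returning the current life count
        self.lives = None

    def reset(self):
        obs = self.env.reset()
        self.lives = self.get_lives(self.env)
        return obs

    def step(self, action):
        obs, reward, done, info = self.env.step(action)
        lives = self.get_lives(self.env)
        life_lost = lives < self.lives
        self.lives = lives
        return obs, reward, done or life_lost, info


env = LifeLossAsTerminal(FakeLivesEnv(lives=2), get_lives=lambda e: e.lives)
env.reset()
done_flags = [env.step(0)[2] for _ in range(6)]
print(done_flags)
```

In this toy run the flag is raised at step 3 (a life is lost) and again at step 6 (the game is really over).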

|

Awesome! Yes, I will give this a go for the Q-Learning in just a bit here. |

|

@dennybritz, yeah, I have things mixed up. Too many things to try to understand too quickly (new to Python, Numpy, TensorFlow, vim, Machine Learning, RL, refresh statistics/prob 101 :-) |

|

Not sure if either of you have encountered this yet, but when a gym monitor is active, attempting to reset the environment when the episode is technically still running results in an error with message: "you cannot call reset() unless the episode is over." Here is the offending line. A few ideas come to mind:

The first option seems less hacky to me, but I might be overlooking something. |

|

Here are a few threads discussing low scores vs. the paper. They can be a source of troubleshooting ideas: https://groups.google.com/forum/m/#!topic/deep-q-learning/JV384mQcylo https://docs.google.com/spreadsheets/d/1nJIvkLOFk_zEfejS-Z-pHn5OGg-wDYJ8GI5Xn2wsKIk/htmlview I re-read all of DQN.py (not the notebook) and, assuming all the np slices, advanced indexing and functions operating over an axis are OK, I still can't find what could be causing the score to flatten at ~35. I think troubleshooting may be time consuming, but it is also a good learning experience. In some of the posts, some mention doing a max among consecutive frames, and some that the stacking is for consecutive frames. I've also seen a Karpathy post explaining policy gradients where he used the difference of frames. This was reported regarding loss of life:

|

|

Thanks for researching this. I made a bunch of changes to the DQN code on a separate branch. It now resets the env when losing a life, writes gradient summaries and the graph to TensorBoard, and has gradient clipping: https://github.com/dennybritz/reinforcement-learning/compare/dqn?expand=1#diff-67242013ab205792d1514be55451053c I'm re-running it now. If this doesn't "fix" it, the most likely source of error, in my opinion, is the frame processing. Looking at the threads:

I can't imagine that each of these makes a huge difference, but who knows. Here's the full paragraph: |

|

Something entirely plausible is that we didn't try "hard enough". Look for example at Breakout on this page: https://github.com/Jabberwockyll/deep_rl_ale/wiki It remains close to 0 even at 4 million steps, then starts climbing but needs millions and millions of steps to make a difference. Also, in many of the learning charts there are long periods of diminishing scores, but they recover later. It sometimes takes a million steps to do that. Our tries are about 2-3 million steps. I may have to check the reward curves from the paper, but maybe there's nothing wrong, if the plateau is actually just the policy taking a "nap". Unfortunately, I am stuck with an Apple Air (slow). Maybe with $10 I could try A3C on a 32-CPU machine... I'm not very familiar with AWS, but a spot instance for a day would do it. |

|

I'd stick with trying DQN instead of A3C for now - there are fewer potential sources of error. If the implementation you linked to works correctly (it seems like it does) it should be easy to figure out what the difference is:

Looking at it again, there is another difference from my code. They take 4 steps in the environment before making an update. I update after each step. That's not shown in the algorithm section in the paper, but it's mentioned in the appendix. So that's another thing to add. Good point about the training time. If it takes 10M steps that's 2.5M updates (assuming you update only after every 4 steps) and that'd be like 2-3 days looking at the current training speed I have on my GPU. |
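The update schedule and the arithmetic above can be sketched as follows. This is only a loop skeleton under assumed names (`count_updates`, `update_every`); acting in the environment and the actual gradient update are left as placeholders.

```python
def count_updates(total_steps, update_every=4):
    """Count how many gradient updates happen when the network is
    trained only once every `update_every` environment steps."""
    updates = 0
    for step in range(1, total_steps + 1):
        # ... act in the environment and store the transition here ...
        if step % update_every == 0:
            updates += 1  # placeholder for one mini-batch gradient update
    return updates

print(count_updates(10_000, update_every=4))  # 2500
print(10_000_000 // 4)                        # 2500000
```

At the scale discussed above, 10M environment steps with one update per 4 steps gives 2.5M updates.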

|

Marking loss of life as termination of the game can bring a big improvement in some games (at least in Space Invaders, as I tested). I think the intuition is that otherwise the Q-value for the same state under different numbers of lives should be different, but the agent cannot tell them apart given only the raw pixel input. In Space Invaders, this can increase the score from ~600 to ~2000 with other variables controlled. |

|

@dennybritz Hi, I think I've hit the same problem as you. I tried Open AI Gym baseline on BreakoutNoFrameskip-v4, and the reward converges to around 15 (in the DQN Nature paper, it's around 400). Did you figure out the problem? If possible, please give me some suggestions about this. |

|

Did you get a chance to read this, https://github.com/openai/baselines ?

Seong-Joon Park, Managing Director


|

|

I've found more success with ACKTR than with Open AI's deepq stuff. Here's a video of it getting 424: breakout <https://www.dropbox.com/s/ax11s81m7rq50xu/breakout.mp4?dl=0>. This wasn't an especially good score, it was just a random run I recorded to show a friend. I've seen it win the round and score over 500. |

|

In case anyone would still like to see a working DQN, I have a pretty good implementation here: https://github.com/hengyuan-hu/rainbow It implements several very powerful extensions like distributional DQN, which has a very smooth learning curve on many Atari games.

|

|

@dennybritz firstly, thank you for creating this easy to follow DeepRL repo. I've been using it as a reference to code up my own DQN implementation for Breakout in PyTorch. Is the current avg_score that the agent is achieving still saturating around 30? In your dqn implementation the Experience Replay buffer has a size=500,000 right, I feel that the size of the Replay is very critical to replicating DeepMind's performance on these games. would like to hear your thoughts on this? Have you tried increasing Replay size to 1,000,000 as suggested in the paper? |

... in certain implementation and certain tasks. Breakout is in fact very stable given a good implementation of either DQN or A3C. And DQN is also quite stable compared to policy-based algorithms like A3C or PPO. |

|

@ppwwyyxx Notice in your implementation: gradproc.GlobalNormClip(10). This may be very important .... |

|

IIRC that line of code has no visible effect on training breakout as the gradients are far from large enough to reach that threshold. I'll remove it. |
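To show why a global-norm clip with a high threshold can be a no-op, here is a small NumPy sketch of the technique. The helper `clip_by_global_norm` and the gradient values are illustrative assumptions, not the tensorpack implementation.

```python
import numpy as np

def clip_by_global_norm(grads, clip_norm):
    """Rescale a list of gradient arrays so that their joint L2 norm
    does not exceed clip_norm. Returns the new list and the old norm."""
    global_norm = np.sqrt(sum(np.sum(g ** 2) for g in grads))
    scale = min(1.0, clip_norm / (global_norm + 1e-12))
    return [g * scale for g in grads], global_norm

small = [np.array([0.3, 0.4]), np.array([1.2])]      # global norm 1.3
large = [np.array([30.0, 40.0]), np.array([120.0])]  # global norm 130

clipped_small, norm_small = clip_by_global_norm(small, clip_norm=10.0)
clipped_large, norm_large = clip_by_global_norm(large, clip_norm=10.0)

print(norm_small, clipped_small)
print(norm_large, clipped_large)
```

Gradients whose global norm is already below the threshold pass through unchanged, so the clip only matters when training produces much larger gradients.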

|

@ppwwyyxx I tried Adam optimizer with epsilon=1e-2 same as your setting, it works. |
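One possible reason the larger epsilon helps: in Adam's update rule, epsilon sits in the denominator, so a large value damps the step when gradients are small. Below is a sketch of the very first Adam step for a single scalar gradient. The learning rate and gradient value are made up, and `adam_first_step` is an illustrative helper rather than any library's API.

```python
import numpy as np

def adam_first_step(grad, lr=1e-4, beta1=0.9, beta2=0.999, eps=1e-8):
    """Size of the first Adam update (t = 1) for one scalar gradient."""
    m = (1 - beta1) * grad        # first-moment estimate
    v = (1 - beta2) * grad ** 2   # second-moment estimate
    m_hat = m / (1 - beta1)       # bias correction at t = 1
    v_hat = v / (1 - beta2)
    return lr * m_hat / (np.sqrt(v_hat) + eps)

tiny_grad = 1e-3
step_default = adam_first_step(tiny_grad, eps=1e-8)
step_large_eps = adam_first_step(tiny_grad, eps=1e-2)

print(step_default)    # close to lr: the step ignores the gradient's scale
print(step_large_eps)  # roughly lr / 11: the step shrinks for small gradients
```

With a tiny epsilon the step is about the full learning rate no matter how small the gradient is. With epsilon at 1e-2, small gradients lead to proportionally smaller steps.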

|

I tested with the gym environment Breakout-v4 and found that the env.ale.lives() will not change instantly after losing a life (i.e. the ball disappears from the screen). Instead, the value will change roughly 8 steps after a life is lost. Could somebody verify what I said? If this is true, then the episode will end (according to whether a life is lost) at a fake terminal state that is 8 steps after the true terminal state. This is probably one reason why DQN using gym Atari environment cannot get high enough scores. |

Larger epsilon is needed here to solve the problem dennybritz#30

|

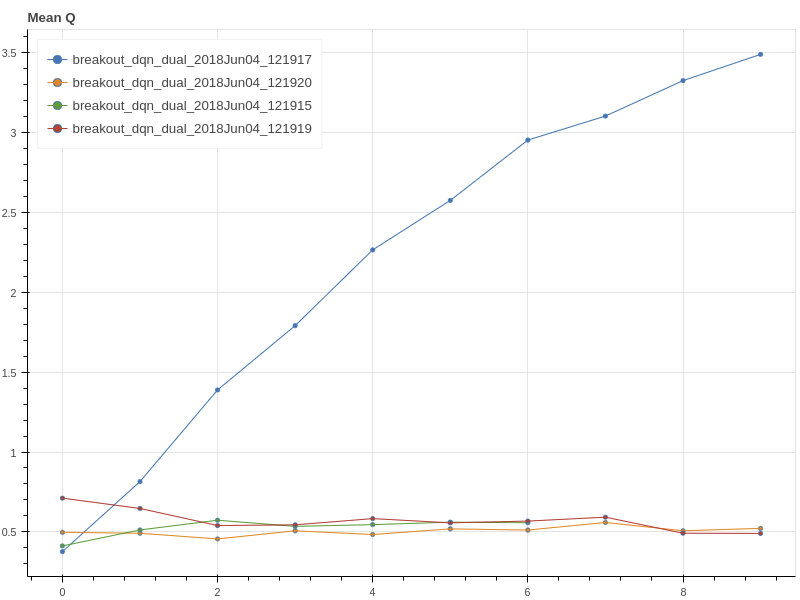

I've done a brief search over RMSProp momentum hyperparameters and I believe it could be the source of the issue. I ran three models for 2000 episodes each. The number of frames varies based on the length of the episodes in each test. For scale, the red model ran for around 997,000 frames while the other two ran for around 700k. The momentum values were as follows: Because red performed best at 0.5, I will run a full training cycle and report back with my findings. It seems strange to use a momentum value of 0.0 while the paper uses 0.95. However, I feel the logic here could be that momentum doesn't work well on a moving target but I haven't seen hard evidence of this being the case. |

|

@fuxianh I ran into the same problem as you when running the DQN code from Open AI Gym baseline on BreakoutNoFrameskip-v4. Have you solved this problem, and could you share your experience? Thank you very much! |

|

Hey guys, I've been working on implementing my own version of DQN recently and I encountered the same problem: the average reward (over the last 100 rounds in my plot) peaked at around 35 for Breakout. I used Adam with a learning rate of 0.00005 (see blue curve in the plot below). Reducing the learning rate to 0.00001 increased the reward to ~50 (see violet curve). I then got the reward to > 140 by passing the terminal flag to the replay memory when a life is lost (without resetting the game), so it does make a huge difference for Breakout as well. Today I went over the entire code again, also outputting some intermediate results. A few hours later at dinner it struck me that I saw 6 Q values this morning while I had the feeling that it should be 4. A quick test confirms that DeepMind used a minimal set of 4 actions: I assume that this subtle difference could have a huge effect, as it alters the task's difficulty. I will immediately try to run my code with the minimal set of 4 actions and post the result as soon as I know :) Fabio |

|

I recently implemented DQN myself and initially encountered the same problem: the reward reached a plateau at around 35 in the Breakout environment. I certainly found DQN to be quite fiddly to get working. There are lots of small details to get right or it just doesn't work well, but after many experiments the agent now reaches an average training reward per episode (averaged over the last 100 episodes) of ~300 and an average evaluation reward per episode (averaged over 10000 frames as suggested by Mnih et al. 2013) of slightly less than 400. I commented my code (in a single Jupyter notebook) as best I could so that it is hopefully as easy as possible to follow along if you are interested. Contrary to previous posts in this thread, I also did not find DQN to be very sensitive to the random seed: My hints:

I hope you can get it to work in your own implementation, good luck!! |

|

Has anyone solved this problem for denny's code? |

|

Is there anything we can do to solve the problem? Should we use the deterministic environment, like 'BreakoutDeterministic-v4', and should we change the optimizer from RMSProp to Adam, since it seems that DeepMind changed to using Adam in their Rainbow paper? |

|

If you want to try to improve Denny's code, I suggest you start with the following:

Keep us updated, if you try these suggestions :) |

|

From what I can see, this behavior might be due to the size of replay memory used. Replay memory plays a very critical role in the performance of DQN (read extended table-3 of the original paper https://daiwk.github.io/assets/dqn.pdf). I also implemented DQN (in Pytorch) with replay memory size of 80,000. After training the model for 12000 episodes (more than 6.5 M steps), it could only reach the evaluation score of around 15. So, my guess is that Denny's code might also be having the same problem. For those who have seen/solved this or similar issues, it would be great if you can shed some light on whether you observed the same behavior. |
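For context on what the replay memory size controls, here is a minimal sketch of a fixed-capacity buffer that silently evicts the oldest transitions. The class name `ReplayMemory` and the tuple layout are assumptions, not the implementation from any of the repos mentioned here.

```python
import random
from collections import deque

class ReplayMemory:
    """Fixed-capacity buffer: once full, the oldest transition is dropped."""

    def __init__(self, capacity):
        self.buffer = deque(maxlen=capacity)

    def push(self, transition):
        self.buffer.append(transition)

    def sample(self, batch_size):
        # Uniform sampling without replacement from the stored transitions.
        return random.sample(list(self.buffer), batch_size)

    def __len__(self):
        return len(self.buffer)

memory = ReplayMemory(capacity=3)
for step in range(5):
    # (state, action, reward, next_state, done) with dummy values
    memory.push((step, 0, 0.0, step + 1, False))

batch = memory.sample(2)
print(len(memory), [t[0] for t in memory.buffer])
```

With a capacity of 3, only the three most recent transitions survive. A small buffer therefore restricts training to recent experience, which is one way the buffer size could plausibly affect the score.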

|

Hello everyone, interesting thread here. I would like some help to clarify my confusion here:

Thanks a lot if anyone can confirm on this! |

|

Hello. I have tried many different things before, including changing the optimizer to what the paper describes and preprocessing the frames as closely as possible to what the paper did, but still got low scores. The key thing I found is to set the "done" flag every time the agent loses a life. This is super important. I have some benchmark results in https://github.com/wetliu/dqn_pytorch. Thank you for the help in this thread. I deeply appreciate it! |

|

Sorry for reviving this thread again, but did anyone figure out what the issue was? I'm attempting the same task (Breakout) and my train reward is stuck at 15-20 at 10M steps. I'm using a replay buffer of 200k, 4 gradient updates per iteration, 5e-4 as the learning rate. Also using the He initializer as @fg91 mentioned in his comment, DDQN and all other optimizations mentioned as well. I'm not sure if I'm doing it wrong or I just need to wait to train it more. |

|

@GauravBhagchandani The key thing I found is to set the "done" flag every time the agent loses a life. This is super important. |

|

@wetliu Thanks for the reply! I have done that as well, hasn't made much of a difference. It's such a pain because the training takes so long for any small change. Do you know how many steps are needed to reach at least 100+ on the train score? |

|

@GauravBhagchandani I have tried a bunch of environments after that update and can successfully train the models with fewer steps than reported in the paper. You can refer to the code here: https://github.com/wetliu/dqn_pytorch. |

|

I got past the 20 train reward barrier. I was using SGD as the optimizer with 0.9 as the momentum. I switched to Adam with an epsilon of 1e-4 and that seemed to do the trick for me. It's currently training but I'm at 6.7M samples so far with train rewards around 30-40 and test up to 300. Currently, the model gets stuck after it tunnels through and breaks a lot of the blocks. It just stops moving. I think that'll get resolved as it trains more. Update: It plateaued at 30-40 for even 20M steps. I'm a bit lost now on what to do to fix this. It's really annoying. @wetliu Is there any chance I could show you my code and get some guidance? |

Hi Denny,

Thanks for this wonderful resource. It's been hugely helpful. Can you say what your results are when training the DQN solution? I've been unable to reproduce the results of the DeepMind paper. I'm using your DQN solution below, although I did try mine first :)

While training, the TensorBoard "episode_reward" graph peaks at an average reward of ~35, and then tapers off. When I run the final checkpoint with a fully greedy policy (no epsilon), I get similar rewards.

The DeepMind paper cites rewards around 400.

I have tried 1) implementing the error clipping from the paper, 2) changing the RMSProp arguments to (I think) reflect those from the paper, 3) changing the "replay_memory_size" and "epsilon_decay_steps" to the paper's 1,000,000, 4) enabling all six agent actions from the Open AI Gym. Still average reward peaks at 35.
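For reference, one common reading of the paper's error clipping is a Huber-style loss: quadratic for small TD errors and linear beyond a threshold, which bounds the gradient magnitude. A minimal NumPy sketch under that assumption (the helper `huber_loss` and the threshold `delta=1.0` are illustrative, not the modification actually made to dqn.py):

```python
import numpy as np

def huber_loss(td_error, delta=1.0):
    """Quadratic for |error| <= delta, linear beyond it, so the
    gradient with respect to the error stays within [-delta, delta]."""
    abs_error = np.abs(td_error)
    quadratic = 0.5 * td_error ** 2
    linear = delta * (abs_error - 0.5 * delta)
    return np.where(abs_error <= delta, quadratic, linear)

errors = np.array([0.5, -3.0])
losses = huber_loss(errors)
print(losses)
```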

Any pointers would be greatly appreciated.

Screenshot is of my still-running training session at episode 4878 with all above modifications to dqn.py:
