[schema] Updating the tables schema #5449

| Original file line number | Diff line number | Diff line change |

|---|---|---|

|

|

@@ -27,14 +27,14 @@ | |

| from flask_babel import gettext as __ | ||

| from flask_babel import lazy_gettext as _ | ||

| from wtforms.ext.sqlalchemy.fields import QuerySelectField | ||

| from wtforms.validators import Regexp | ||

|

|

||

| from superset import appbuilder, db, security_manager | ||

| from superset.connectors.base.views import DatasourceModelView | ||

| from superset.utils import core as utils | ||

| from superset.views.base import ( | ||

| DatasourceFilter, | ||

| DeleteMixin, | ||

| get_datasource_exist_error_msg, | ||

| ListWidgetWithCheckboxes, | ||

| SupersetModelView, | ||

| YamlExportMixin, | ||

|

|

@@ -303,6 +303,11 @@ class TableModelView(DatasourceModelView, DeleteMixin, YamlExportMixin): # noqa | |

| "template_params": _("Template parameters"), | ||

| "modified": _("Modified"), | ||

| } | ||

| validators_columns = { | ||

|

||

| "table_name": [ | ||

| Regexp(r"^[^\.]+$", message=_("Table name cannot contain a schema")) | ||

| ] | ||

| } | ||

|

|

||

| edit_form_extra_fields = { | ||

| "database": QuerySelectField( | ||

|

|

@@ -313,14 +318,10 @@ class TableModelView(DatasourceModelView, DeleteMixin, YamlExportMixin): # noqa | |

| } | ||

|

|

||

| def pre_add(self, table): | ||

| with db.session.no_autoflush: | ||

| table_query = db.session.query(models.SqlaTable).filter( | ||

| models.SqlaTable.table_name == table.table_name, | ||

| models.SqlaTable.schema == table.schema, | ||

| models.SqlaTable.database_id == table.database.id, | ||

| ) | ||

| if db.session.query(table_query.exists()).scalar(): | ||

| raise Exception(get_datasource_exist_error_msg(table.full_name)) | ||

|

|

||

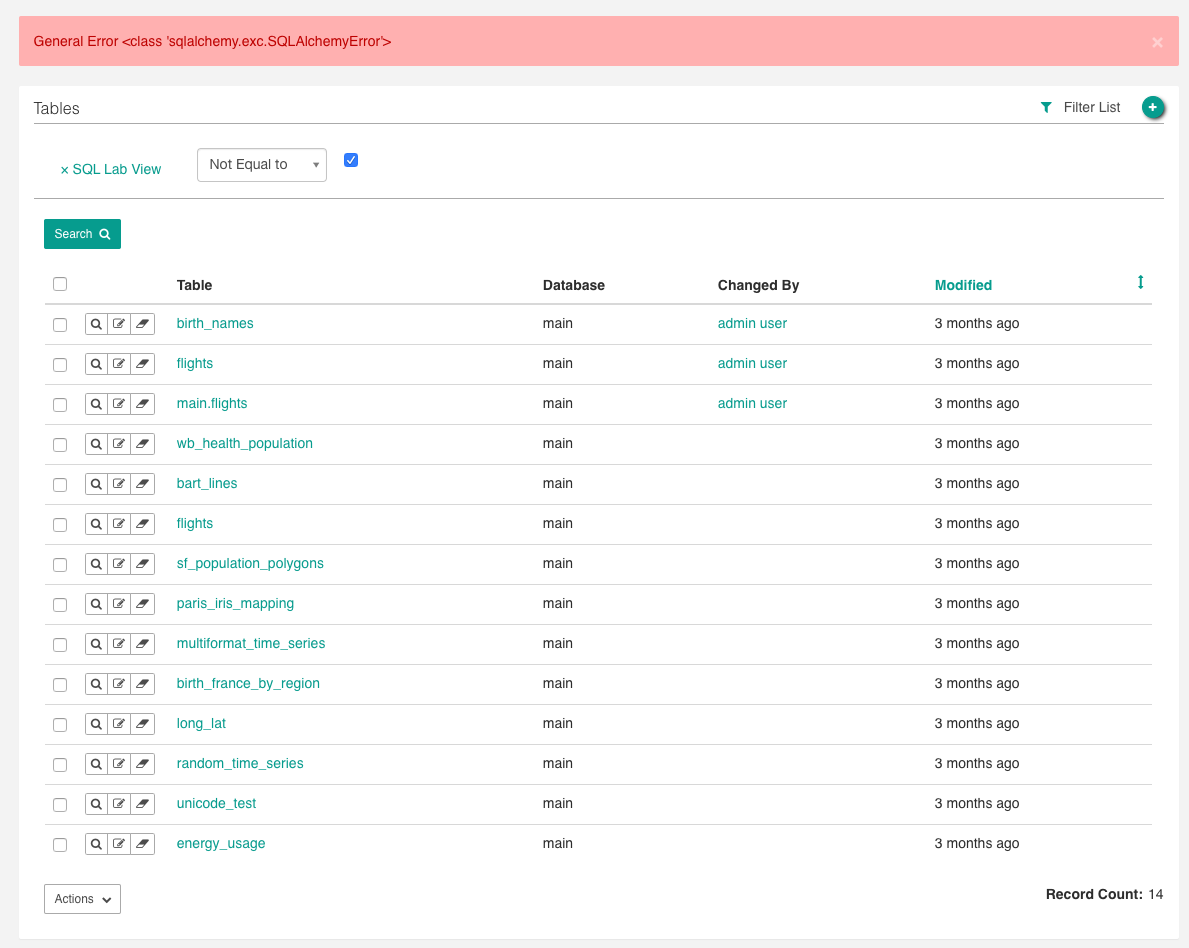

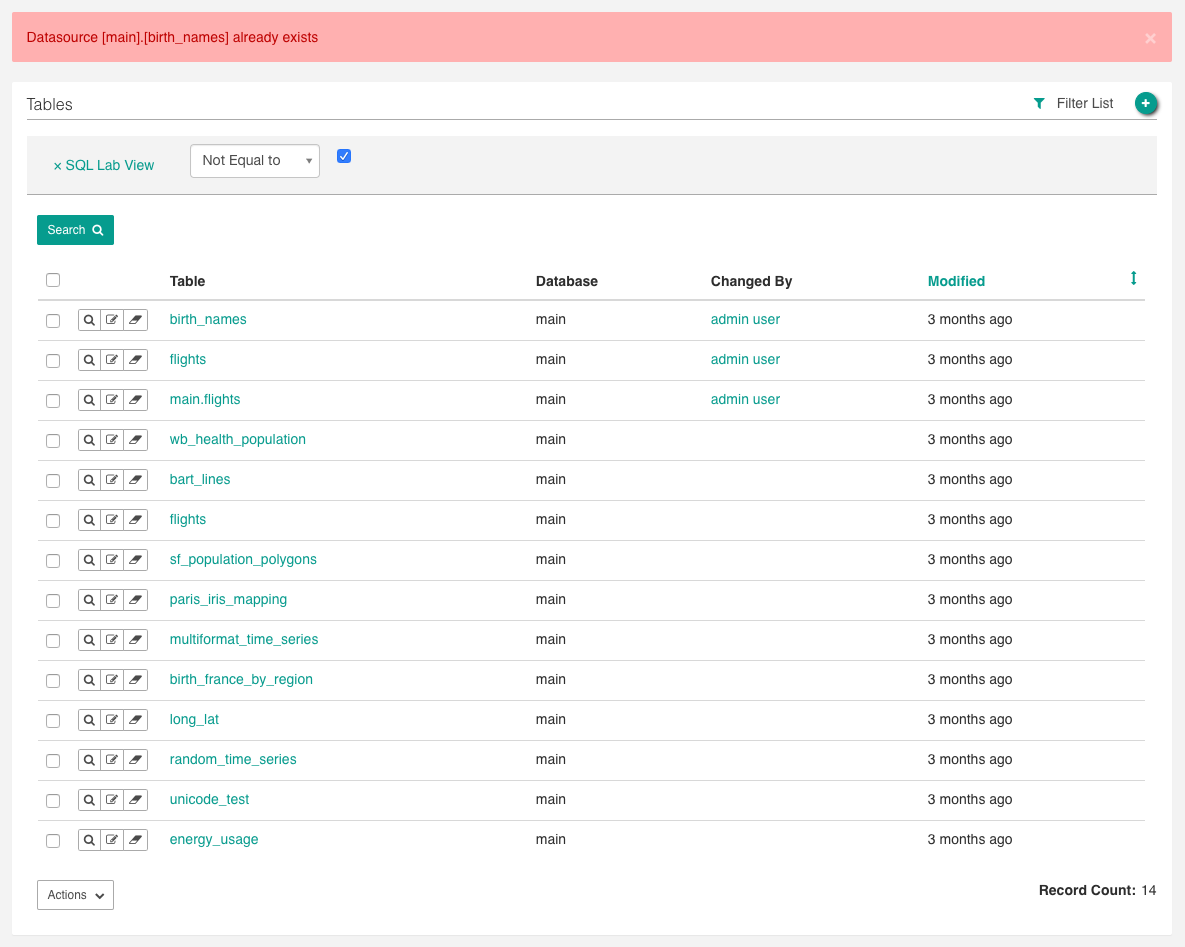

| # Although the listener exists this is necessary to ensure that FAB flashes the | ||

| # specific message as opposed to "General Error <class.Exception>". | ||

| models.SqlaTable.before_insert(None, None, table) | ||

|

||

|

|

||

| # Fail before adding if the table can't be found | ||

| try: | ||

|

|

||
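For reference, the effect of the `Regexp` validator added to `validators_columns` above can be checked with the standard `re` module. This is a minimal sketch, assuming the validator applies the pattern with `re.match` semantics; the helper name `is_valid_table_name` is hypothetical and not part of Superset.

```python
import re

# Same pattern as the validator in the diff: one or more characters,
# none of which is a dot.
TABLE_NAME_PATTERN = re.compile(r"^[^\.]+$")


def is_valid_table_name(name: str) -> bool:
    """Hypothetical helper: True when the name carries no schema prefix (no dot)."""
    return TABLE_NAME_PATTERN.match(name) is not None


print(is_valid_table_name("birth_names"))         # True
print(is_valid_table_name("public.birth_names"))  # False
print(is_valid_table_name(""))                    # False
```

A name such as `public.birth_names` is rejected, so the schema has to be supplied through the separate `schema` field rather than embedded in `table_name`.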

| Original file line number | Diff line number | Diff line change |

|---|---|---|

| @@ -0,0 +1,57 @@ | ||

| # Licensed to the Apache Software Foundation (ASF) under one | ||

| # or more contributor license agreements. See the NOTICE file | ||

| # distributed with this work for additional information | ||

| # regarding copyright ownership. The ASF licenses this file | ||

| # to you under the Apache License, Version 2.0 (the | ||

| # "License"); you may not use this file except in compliance | ||

| # with the License. You may obtain a copy of the License at | ||

| # | ||

| # http://www.apache.org/licenses/LICENSE-2.0 | ||

| # | ||

| # Unless required by applicable law or agreed to in writing, | ||

| # software distributed under the License is distributed on an | ||

| # "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY | ||

| # KIND, either express or implied. See the License for the | ||

| # specific language governing permissions and limitations | ||

| # under the License. | ||

| """update tables | ||

|

|

||

| Revision ID: 1d73b15530e7 | ||

| Revises: b6fa807eac07 | ||

| Create Date: 2018-07-20 11:36:04.535859 | ||

|

|

||

| """ | ||

| from alembic import op | ||

| from sqlalchemy import engine | ||

| from sqlalchemy.exc import OperationalError, ProgrammingError | ||

|

|

||

| from superset.utils.core import generic_find_uq_constraint_name | ||

|

|

||

| # revision identifiers, used by Alembic. | ||

| revision = "1d73b15530e7" | ||

| down_revision = "b6fa807eac07" | ||

|

|

||

| conv = {"uq": "uq_%(table_name)s_%(column_0_name)s"} | ||

|

|

||

|

|

||

| def upgrade(): | ||

| bind = op.get_bind() | ||

| insp = engine.reflection.Inspector.from_engine(bind) | ||

|

|

||

| # Drop the uniqueness constraint if it exists. | ||

| try: | ||

| with op.batch_alter_table("tables", naming_convention=conv) as batch_op: | ||

| batch_op.drop_constraint( | ||

| generic_find_uq_constraint_name("tables", {"table_name"}, insp) | ||

| or "uq_tables_table_name", | ||

| type_="unique", | ||

| ) | ||

| except (ProgrammingError, OperationalError): | ||

| pass | ||

|

|

||

|

|

||

| def downgrade(): | ||

|

|

||

| # Re-add the uniqueness constraint. | ||

| with op.batch_alter_table("tables", naming_convention=conv) as batch_op: | ||

| batch_op.create_unique_constraint("uq_tables_table_name", ["table_name"]) |

@mistercrunch I'm really not a fan of this, but the `import_from_dict` method uses the uniqueness constraint, plus additional filters, to match the relevant record in the database.
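
To illustrate what the uniqueness constraint on `table_name` means in practice, here is a minimal sketch using the standard-library `sqlite3` module rather than Alembic. The column set is deliberately simplified and is not Superset's real `tables` schema; the function name `can_insert_same_name_in_two_schemas` is hypothetical.

```python
import sqlite3


def can_insert_same_name_in_two_schemas(with_unique_constraint: bool) -> bool:
    """Hypothetical check: can two rows share a table_name across schemas?"""
    conn = sqlite3.connect(":memory:")
    # Optionally add a constraint named like the one the migration drops.
    constraint = (
        ", CONSTRAINT uq_tables_table_name UNIQUE (table_name)"
        if with_unique_constraint
        else ""
    )
    conn.execute(
        f"""
        CREATE TABLE tables (
            id INTEGER PRIMARY KEY,
            "schema" TEXT,
            table_name TEXT{constraint}
        )
        """
    )
    try:
        conn.execute(
            """INSERT INTO tables ("schema", table_name) VALUES ('sales', 'orders')"""
        )
        conn.execute(
            """INSERT INTO tables ("schema", table_name) VALUES ('staging', 'orders')"""
        )
        return True
    except sqlite3.IntegrityError:
        # The second insert violates UNIQUE (table_name).
        return False
    finally:
        conn.close()


print(can_insert_same_name_in_two_schemas(with_unique_constraint=True))   # False
print(can_insert_same_name_in_two_schemas(with_unique_constraint=False))  # True
```

With the constraint in place, the same table name cannot be registered under two schemas; once it is dropped, any duplicate detection has to come from an application-level check such as the one in `pre_add`.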