-

Notifications

You must be signed in to change notification settings - Fork 29k

[SPARK-24645][SQL] Skip parsing when csvColumnPruning enabled and partitions scanned only #21631

New issue

Have a question about this project? Sign up for a free GitHub account to open an issue and contact its maintainers and the community.

By clicking “Sign up for GitHub”, you agree to our terms of service and privacy statement. We’ll occasionally send you account related emails.

Already on GitHub? Sign in to your account

Conversation

|

cc: @HyukjinKwon @MaxGekk |

HyukjinKwon

left a comment

HyukjinKwon

left a comment

There was a problem hiding this comment.

Choose a reason for hiding this comment

The reason will be displayed to describe this comment to others. Learn more.

|

@HyukjinKwon sure, I'll do |

| withTempPath { path => | ||

| val dir = path.getAbsolutePath | ||

| spark.range(10).selectExpr("id % 2 AS p", "id").write.partitionBy("p").csv(dir) | ||

| spark.read.csv(dir).selectExpr("sum(p)").collect() |

There was a problem hiding this comment.

Choose a reason for hiding this comment

The reason will be displayed to describe this comment to others. Learn more.

oh, I forgot to add assert here. I'll update soon.

|

As I described in #21625 (comment), I found another bug? (the case where I worked on this fix and made a patch to fix this; master...maropu:SPARK-24645-2 |

|

@maropu Could you confirm whether these two bugs are regressions in the master branch? |

|

yea, I think this is regressions because I checked that the query above passed in the master before this commit. |

|

Both? |

|

Test build #92284 has finished for PR 21631 at commit

|

|

Test build #92282 has finished for PR 21631 at commit

|

|

yea, I checked the two queries with/without column pruning in the master; The two queries is ok in v2.3.1 and in the master before the commit. |

|

retest this please |

|

Test build #92293 has finished for PR 21631 at commit

|

|

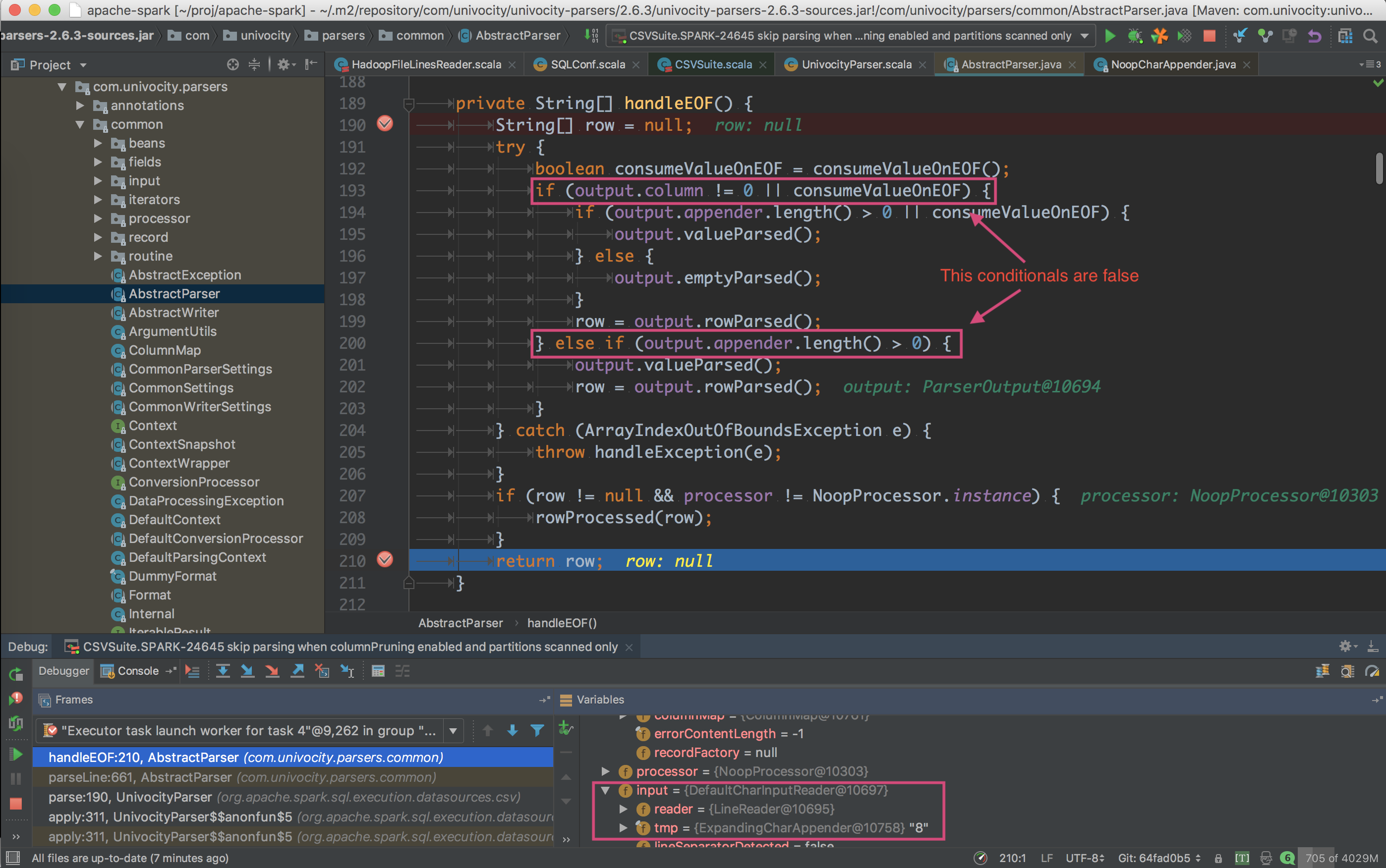

Looking at the if (tokens.length != schema.length) {where I think the right fix would be to check result of Just in case, I have checked the commit before my changes - it also doesn't contain checking returned value of |

|

Wouldn't it be better to check schema if it works instead of value per record? |

|

@MaxGekk yea, I noticed that behaviour. Probably, in case we set an empty array in |

|

It seems |

|

So you mean it's a bug in Univocity? that's another fix for a bug existing in Univocity then. We could work around this bug if it's clear that's a bug. I would suggest to open a bug there if we are not sure in any event. FWIW, their support is quite responsive when the issue is reported with a clear reproducer and descriptions. this issue sounds slightly orthogonal to the JIRA itself though. |

No, I mean we don't handle spark/sql/core/src/main/scala/org/apache/spark/sql/execution/datasources/FailureSafeParser.scala Lines 59 to 73 in 1a527bd

DropMalformedMode mode, for example, we could just drop the line instead of propagating NPE to user space.

|

|

I mean |

I will try to reproduce it on small example without Spark. I am not sure what the expected behavior should be if set of selected columns is empty. Should the |

| } | ||

| } | ||

|

|

||

| private lazy val doParse = if (schema.nonEmpty) { |

There was a problem hiding this comment.

Choose a reason for hiding this comment

The reason will be displayed to describe this comment to others. Learn more.

Do you really need lazy here. In most cases, time interval between calling of the constructor and the parse() method is pretty short. I don't think we win something here.

There was a problem hiding this comment.

Choose a reason for hiding this comment

The reason will be displayed to describe this comment to others. Learn more.

yea, I missed. Thanks!

There was a problem hiding this comment.

Choose a reason for hiding this comment

The reason will be displayed to describe this comment to others. Learn more.

recheck: it seems parse is called in executor sides only, so we can add lazy here to avoid unnecessary instantiation?

|

Test build #92317 has finished for PR 21631 at commit

|

|

Here is the test for uniVocity parser: https://github.com/MaxGekk/univocity_tests . For the first line, |

|

v2.5.9 also have the same behaviour? Anyway, it'd be better to ask the author ;) I asked before and I got quick response. |

yes, it is the same.

ok. I will create an issue for uniVocity. |

|

@HyukjinKwon BTW, can you check this? |

|

LGTM. @MaxGekk please take a following action. Will help and check if it's needed. |

|

Merged to master. |

I have opened the issue for uniVocity parser: uniVocity/univocity-parsers#250 |

|

The bug has been already fixed in uniVocity |

|

oh, super quick fix ;) Thanks, @MaxGekk |

I have checked uniVocity 2.7.2, there is no problem on this version. |

|

@MaxGekk, thanks. mind opening a PR to upgrade? |

What changes were proposed in this pull request?

In the master, when

csvColumnPruning(implemented in this commit) enabled and partitions scanned only, it throws an exception below;This pr modified code to skip CSV parsing in the case.

How was this patch tested?

Added tests in

CSVSuite.