Leverage retworkx for apply_operation* inner loop#5267

Conversation

This commit migrates the inner loop for apply_operation_back and apply_operation_front to leverage retworkx's insert_node_between_multiple PyDiGraph method. This retworkx method performs essentially the same loop internally but executes faster. Depends on Qiskit/rustworkx#181 being included in a release.

|

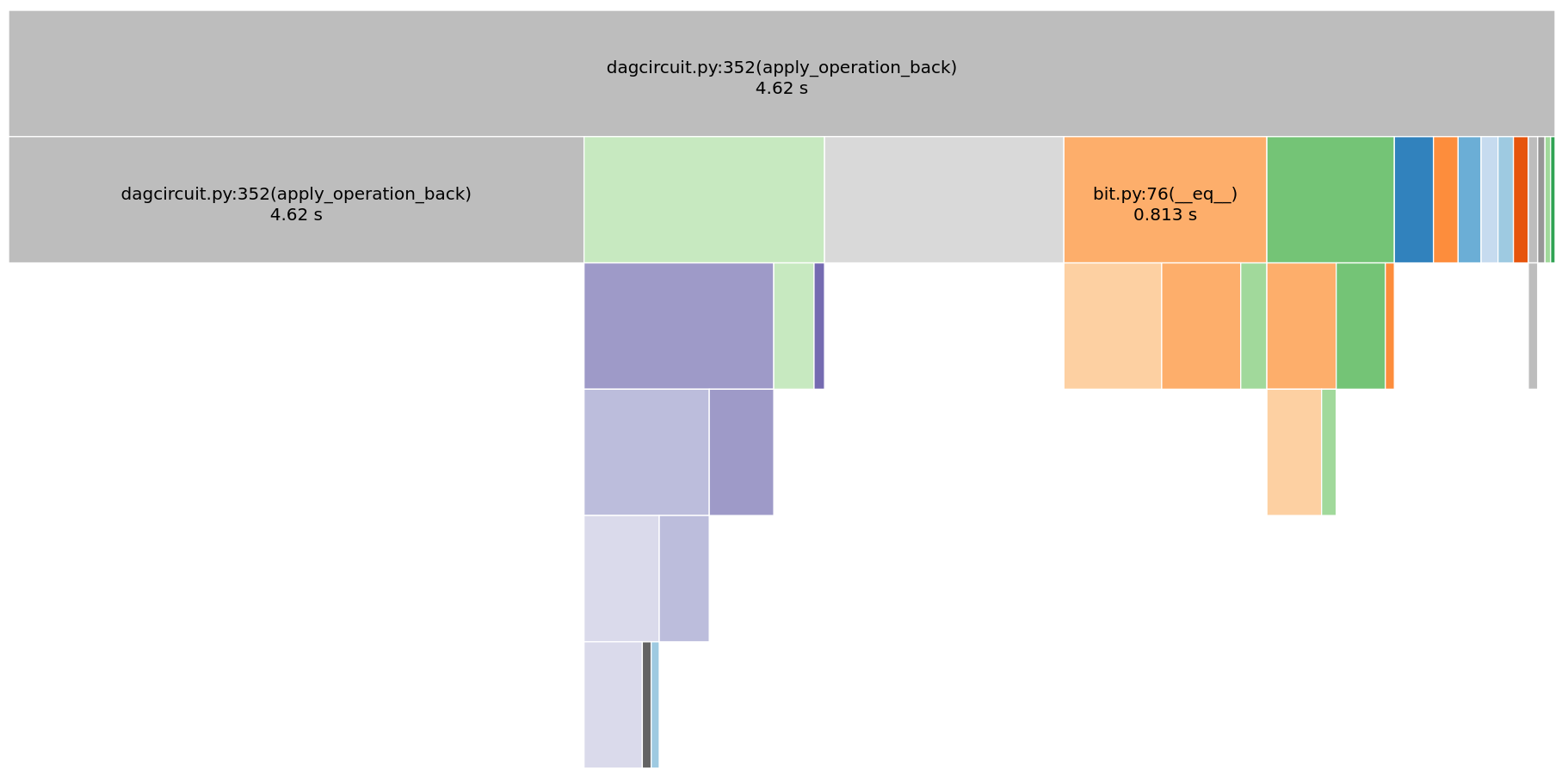

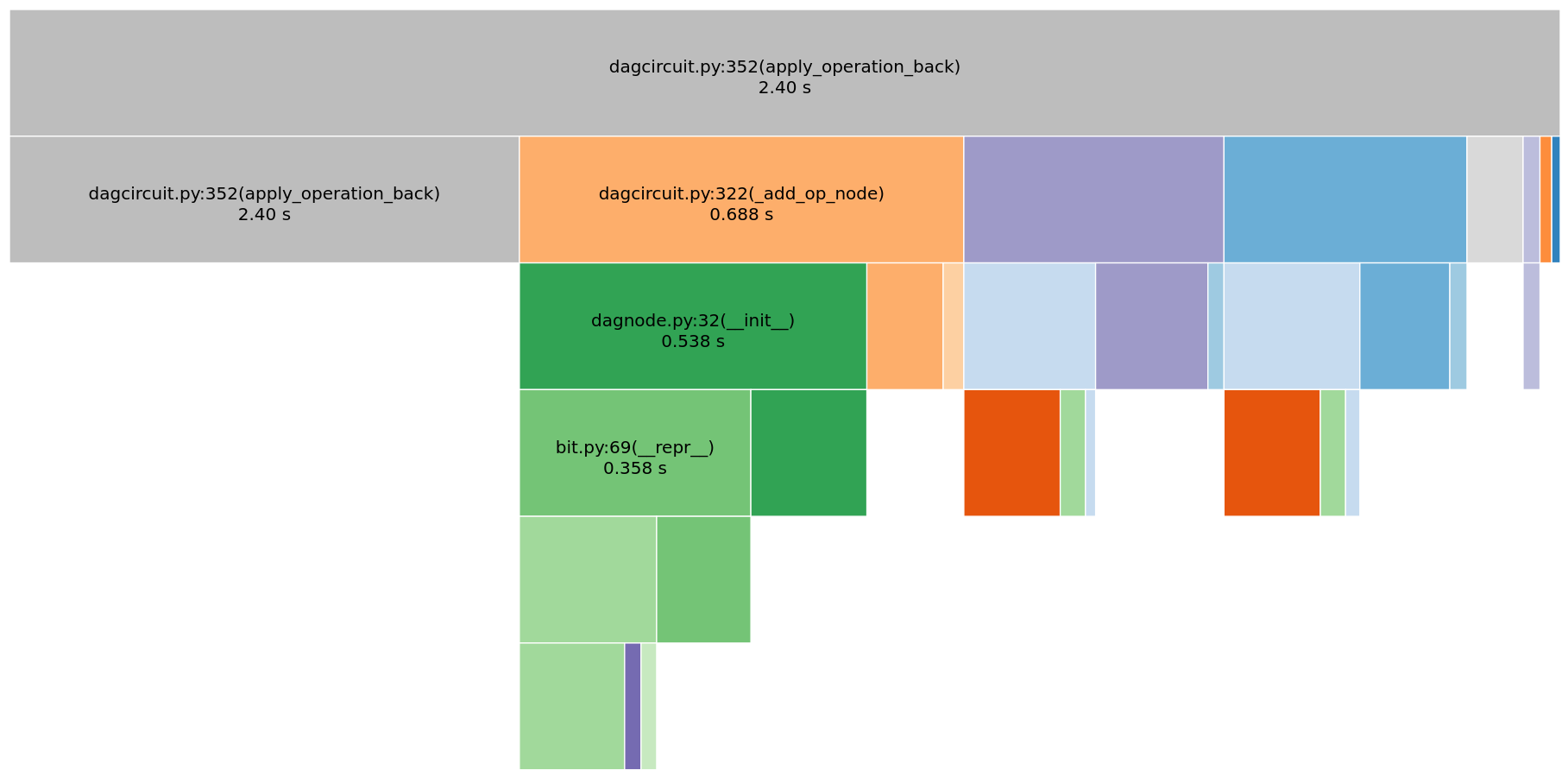

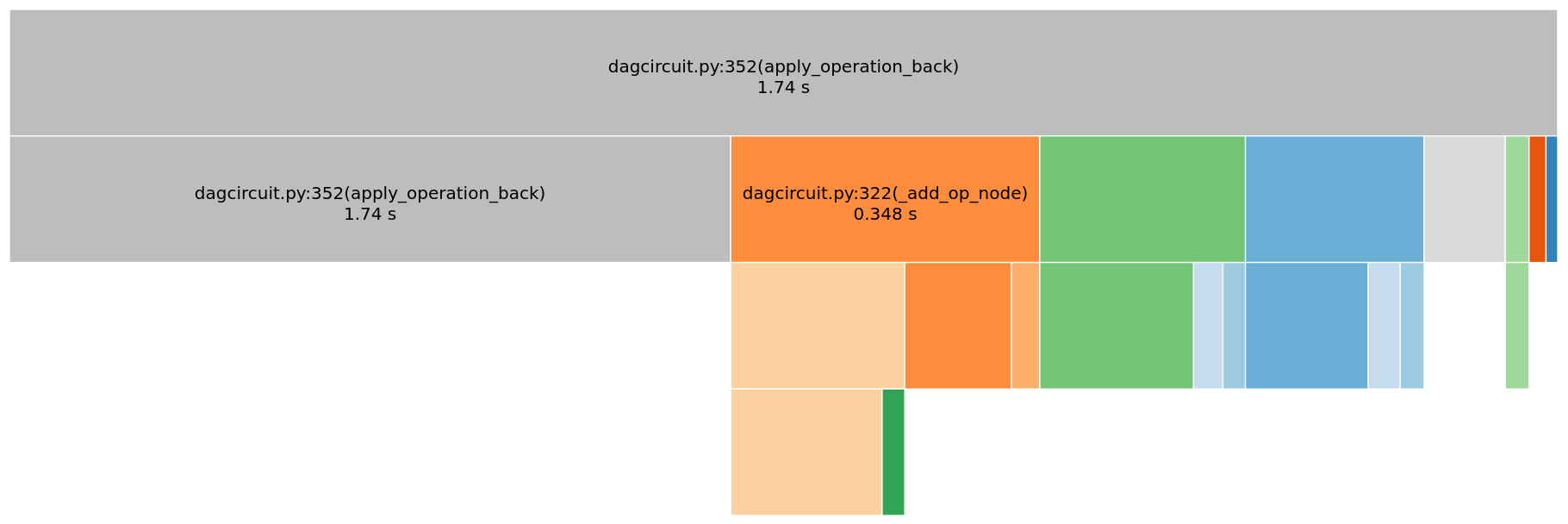

Just to show some initial numbers on this. It's showing a ~2X speed improvement for To this with this PR applied (and retworkx patched): The interesting things are after this PR the bottlenecks for apply operation back are a 3 way tie between calling I'm thinking that after this and #5272 we might be able to speed up the other 2 bottlenecks by changing the |

|

I added the |

|

retworkx 0.6.0 was released this morning, so this is no longer blocked. |

In sabre_swap the comprehension in _score_heuristic() with the basic heuristic starts to become a bottleneck as the size of the coupling map increases. This probably becomes the top bottleneck in a transpilation after Qiskit#5316, Qiskit#5183, Qiskit#5294, Qiskit#5267, and Qiskit#5272 are merged. This commit is an attempt to try and mitigate that a bit by using numpy native operations instead of a python comprehension in sum(). If this is insufficient we'll likely have to either avoid doing a sum like this or drop down to a lower level with cython or rust and operate on the array directly to remove this bottleneck.

* Use numpy sum instead of comprehension in _score_heuristic In sabre_swap the comprehension in _score_heuristic() with the basic heuristic starts to become a bottleneck as the size of the coupling map increases. This probably becomes the top bottleneck in a transpilation after #5316, #5183, #5294, #5267, and #5272 are merged. This commit is an attempt to try and mitigate that a bit by using numpy native operations instead of a python comprehension in sum(). If this is insufficient we'll likely have to either avoid doing a sum like this or drop down to a lower level with cython or rust and operate on the array directly to remove this bottleneck. * Make distance matrix a coupling map property * Fix tests * Fix lint Co-authored-by: mergify[bot] <37929162+mergify[bot]@users.noreply.github.com>

Summary

This commit migrates the inner loop for apply_operation_back and

apply_operation_front to leverage retworkx's

insert_node_between_multiple PyDiGraph method. This retworkx method

performs essentially the same loop internally but executes faster.

Details and comments

Depends on Qiskit/rustworkx#181 being included in

a release.