abed is an automated system for benchmarking machine learning algorithms. It is designed for experiments that run multiple methods on multiple datasets with multiple parameter settings. It also automatically processes result files into result tables and figures.

abed is available on PyPI:

$ pip install abed

abed was created as a way to automate all the tedious work necessary to set up proper benchmarking experiments. It also removes much of the hassle by using a single configuration file for the experimental setup. A core feature of abed is that it doesn't care about which language the tested methods are written in.
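As a rough illustration of the idea, and not abed's actual configuration format or API, the sketch below shows how a single experiment description can be expanded into one shell command per combination of method, dataset, and parameter setting. Because each task is just a command line, the methods can be written in any language. All names here (`METHODS`, `DATASETS`, `PARAMS`, `build_tasks`, and the script and dataset file names) are hypothetical.

```python
from itertools import product

# Hypothetical experiment description. These names are illustrative only
# and are NOT abed's real configuration keys.
METHODS = {
    "ridge": "python ridge.py {dataset} --alpha {alpha}",
    "lasso": "Rscript lasso.R {dataset} {alpha}",
}
DATASETS = ["iris.txt", "wine.txt"]
PARAMS = {"alpha": [0.1, 1.0]}


def build_tasks(methods, datasets, params):
    """Expand methods x datasets x parameter grid into shell commands."""
    names = sorted(params)
    grids = [params[name] for name in names]
    tasks = []
    for (_, template), dataset, values in product(
        sorted(methods.items()), datasets, product(*grids)
    ):
        # Pair each parameter name with the value chosen for this task.
        settings = dict(zip(names, values))
        tasks.append(template.format(dataset=dataset, **settings))
    return tasks


tasks = build_tasks(METHODS, DATASETS, PARAMS)
for command in tasks:
    print(command)
```

Each printed line is a self-contained command that a scheduler could run independently, which is what makes the approach agnostic to the language each method is implemented in.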

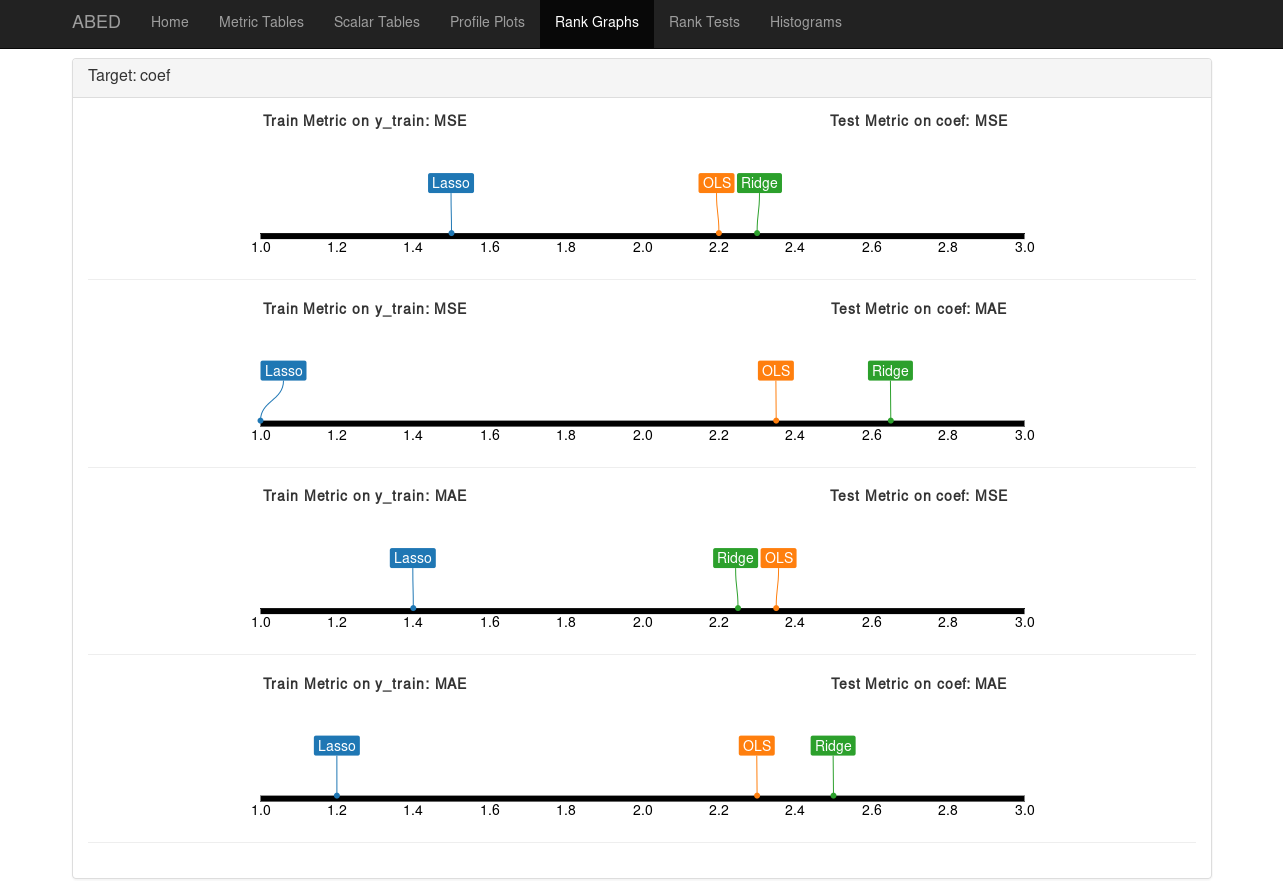

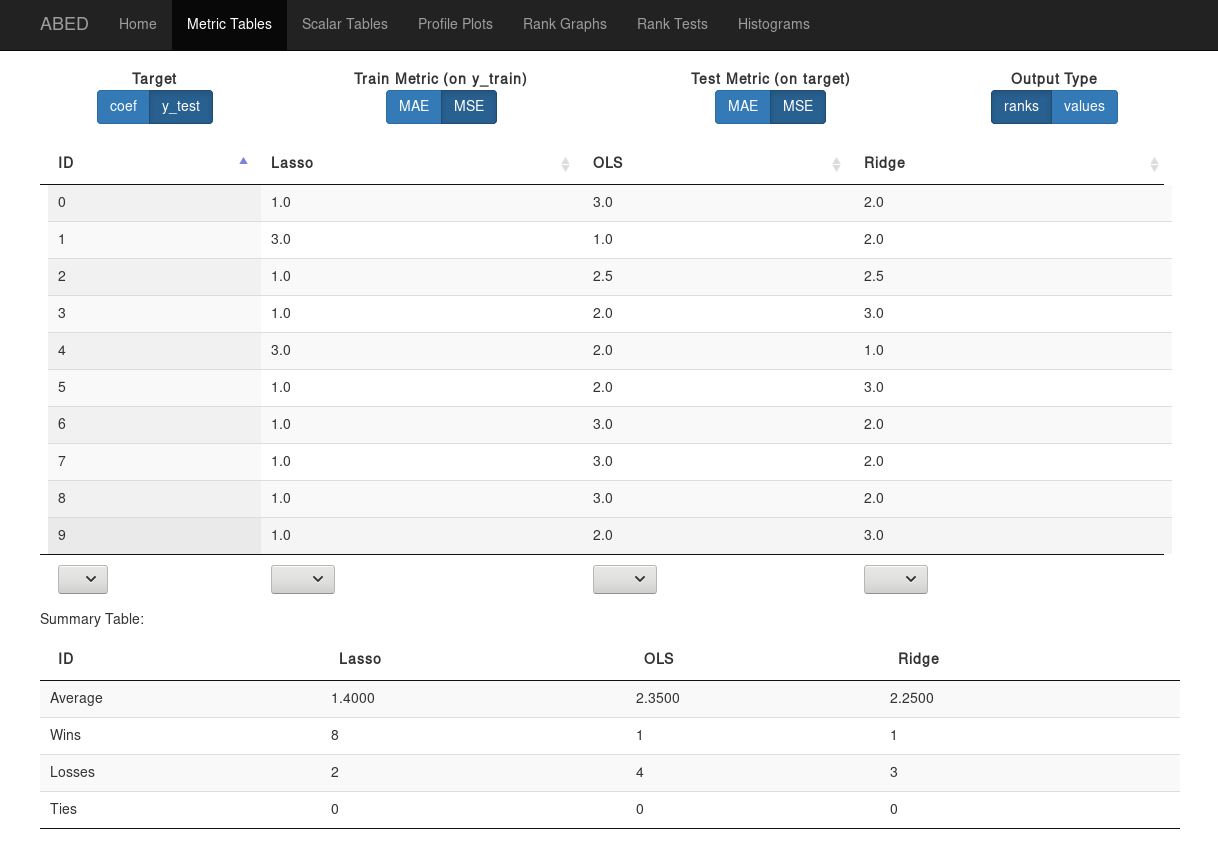

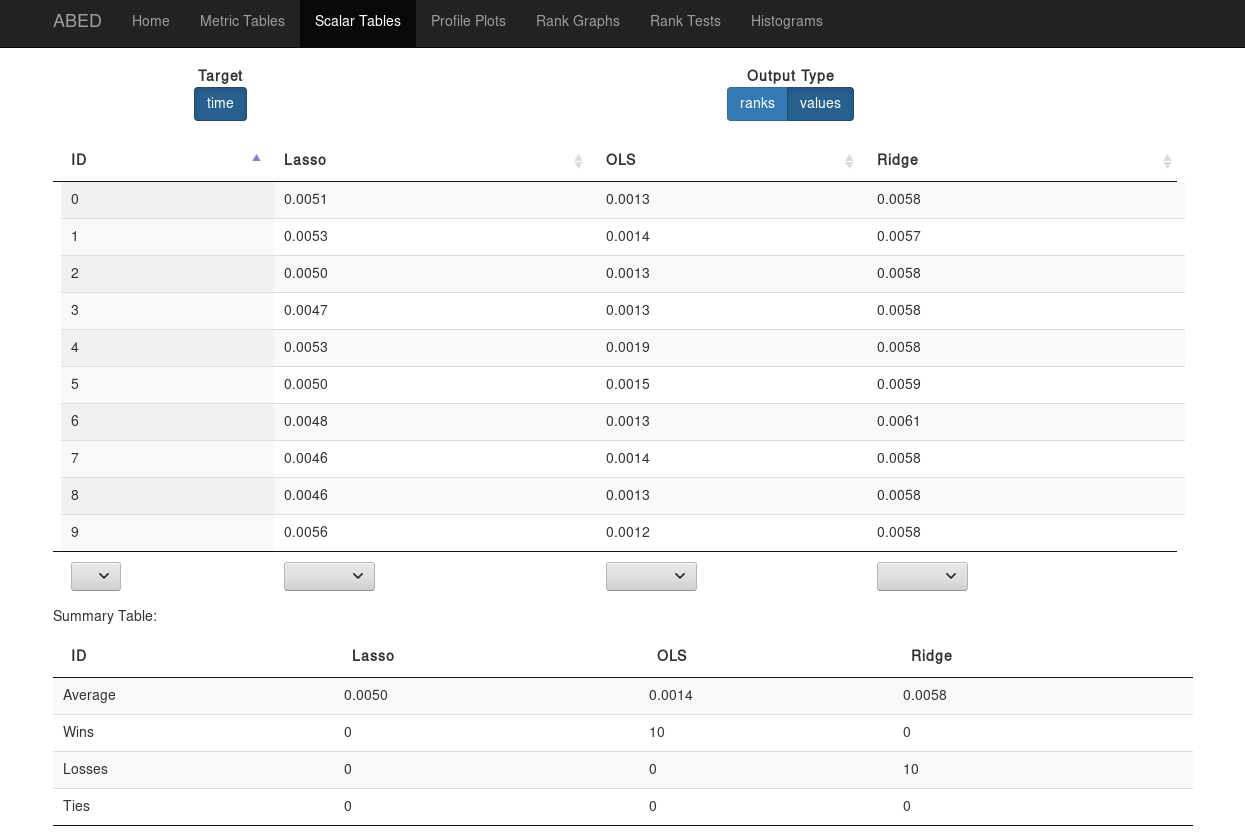

abed can create output tables either as simple txt files or as HTML pages using the excellent DataTables plugin. To support offline operation, the necessary DataTables files are packaged with abed.

For more information, see abed's documentation.

The current version of abed is very usable. However, it is still considered beta software, as it is not yet completely documented and some robustness improvements are planned. For a similar and more mature project that works with R, see BatchExperiments.