Add blog post: Achieving Sub-Millisecond Proxy Overhead #20309

Merged

92 changes: 92 additions & 0 deletions

docs/my-website/blog/sub_millisecond_proxy_overhead/index.md

| Original file line number | Diff line number | Diff line change |

|---|---|---|

| @@ -0,0 +1,92 @@ | ||

| --- | ||

| slug: sub-millisecond-proxy-overhead | ||

| title: "Achieving Sub-Millisecond Proxy Overhead" | ||

| date: 2026-02-02T10:00:00 | ||

| authors: | ||

| - name: Alexsander Hamir | ||

| title: "Performance Engineer, LiteLLM" | ||

| url: https://www.linkedin.com/in/alexsander-baptista/ | ||

| image_url: https://github.com/AlexsanderHamir.png | ||

| - name: Krrish Dholakia | ||

| title: "CEO, LiteLLM" | ||

| url: https://www.linkedin.com/in/krish-d/ | ||

| image_url: https://pbs.twimg.com/profile_images/1298587542745358340/DZv3Oj-h_400x400.jpg | ||

| - name: Ishaan Jaff | ||

| title: "CTO, LiteLLM" | ||

| url: https://www.linkedin.com/in/reffajnaahsi/ | ||

| image_url: https://pbs.twimg.com/profile_images/1613813310264340481/lz54oEiB_400x400.jpg | ||

| description: "Our Q1 performance target and architectural direction for achieving sub-millisecond proxy overhead on modest hardware." | ||

| tags: [performance, architecture] | ||

| hide_table_of_contents: false | ||

| --- | ||

|

|

||

|  | ||

|

Contributor

Review comment on docs/my-website/blog/sub_millisecond_proxy_overhead/index.md, line 23:

Image URL points to a personal GitHub account (`AlexsanderHamir/assets`). Consider hosting in the official LiteLLM repository for long-term stability. |

||

|

|

||

| # Achieving Sub-Millisecond Proxy Overhead | ||

|

|

||

| ## Introduction | ||

|

|

||

| Our Q1 performance target is to aggressively move toward sub-millisecond proxy overhead on a single instance with 4 CPUs and 8 GB of RAM, and to continue pushing that boundary over time. Our broader goal is to make LiteLLM inexpensive to deploy, lightweight, and fast. This post outlines the architectural direction behind that effort. | ||

|

|

||

| Proxy overhead refers to the latency introduced by LiteLLM itself, independent of the upstream provider. | ||

|

|

||

| To measure it, we run the same workload directly against the upstream endpoint and through LiteLLM at identical QPS (for example, 1,000 QPS) and compare the latency delta. To reduce noise, the load generator, LiteLLM, and a mock LLM endpoint (standing in for the provider) all run on the same machine, so the difference reflects proxy overhead rather than network latency. | ||

|

|

||

| --- | ||

|

|

||

| ## Where We're Coming From | ||

|

|

||

| Under the same benchmark originally conducted by [TensorZero](https://www.tensorzero.com/docs/gateway/benchmarks), LiteLLM previously failed at around 1,000 QPS. | ||

|

|

||

| That is no longer the case. Today, LiteLLM can be stress-tested at 1,000 QPS with no failures and can scale up to 5,000 QPS without failures on a single instance with 4 CPUs and 8 GB of RAM. | ||

|

|

||

| This establishes a more up-to-date baseline and provides useful context as we continue working on proxy overhead and overall performance. | ||

|

|

||

| --- | ||

|

|

||

| ## Design Choice | ||

|

|

||

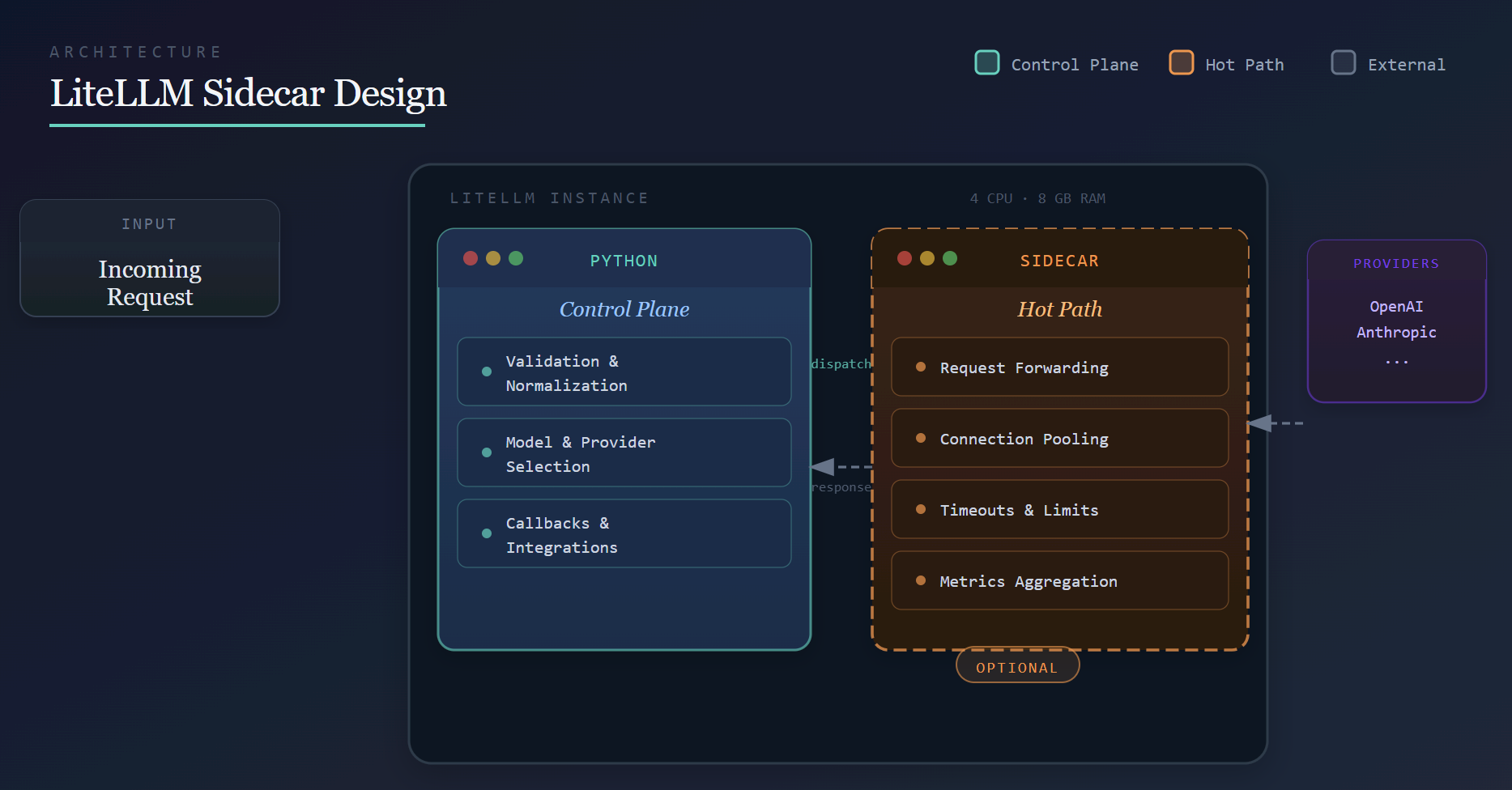

| Achieving sub-millisecond proxy overhead with a Python-based system requires being deliberate about where work happens. | ||

|

|

||

| Python is a strong fit for flexibility and extensibility: provider abstraction, configuration-driven routing, and a rich callback ecosystem. These are areas where development velocity and correctness matter more than raw throughput. | ||

|

|

||

| At higher request rates, however, certain classes of work become expensive when executed inside the Python process on every request. Rather than rewriting LiteLLM or introducing complex deployment requirements, we adopt an optional **sidecar architecture**. | ||

|

|

||

| This architectural change is how we intend to make LiteLLM **permanently fast**. While it supports our near-term performance targets, it is a long-term investment. | ||

|

|

||

| Python continues to own: | ||

|

|

||

| - Request validation and normalization | ||

| - Model and provider selection | ||

| - Callbacks and integrations | ||

|

|

||

| The sidecar owns **performance-critical execution**, such as: | ||

|

|

||

| - Efficient request forwarding | ||

| - Connection reuse and pooling | ||

| - Enforcing timeouts and limits | ||

| - Aggregating high-frequency metrics | ||

|

|

||

| This separation allows each component to focus on what it does best: Python acts as the control plane, while the sidecar handles the hot path. | ||
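
One way to picture the control-plane / hot-path split is the sketch below. It is purely illustrative and does not reflect LiteLLM's actual code or the sidecar's interface: `ForwardPlan`, `HotPath`, `InProcessForwarder`, `plan_request`, and the route table layout are all hypothetical names invented for this example.

```python
from dataclasses import dataclass, field
from typing import Callable, Protocol


@dataclass(frozen=True)
class ForwardPlan:
    """Everything the hot path needs, decided once by the control plane."""
    upstream_url: str
    timeout_s: float
    body: dict
    headers: dict = field(default_factory=dict)


class HotPath(Protocol):
    """Hypothetical interface: a sidecar client or an in-process fallback."""
    def forward(self, plan: ForwardPlan) -> dict: ...


class InProcessForwarder:
    """Stand-in hot path for when the optional sidecar is disabled."""

    def __init__(self, send: Callable[[ForwardPlan], dict]):
        self._send = send

    def forward(self, plan: ForwardPlan) -> dict:
        return self._send(plan)


def plan_request(request: dict, routes: dict) -> ForwardPlan:
    """Control-plane work: validate the request and select the upstream."""
    model = request.get("model")
    if model not in routes:
        raise ValueError(f"unknown model: {model!r}")
    route = routes[model]
    return ForwardPlan(
        upstream_url=route["url"],
        timeout_s=route.get("timeout_s", 30.0),
        body={**request, "model": route["upstream_model"]},
    )


# Hypothetical route table and a fake sender that just echoes the target.
routes = {"fast": {"url": "http://localhost:9000/v1/chat", "upstream_model": "mock-1"}}
plan = plan_request({"model": "fast", "messages": []}, routes)
hot_path: HotPath = InProcessForwarder(lambda p: {"sent_to": p.upstream_url})
response = hot_path.forward(plan)
```

Because the caller depends only on the `HotPath` interface in this sketch, swapping the in-process forwarder for a sidecar-backed one would not change the control-plane code, which is the property that makes an optional sidecar practical.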

|

|

||

| --- | ||

|

|

||

| ### Why the Sidecar Is Optional | ||

|

|

||

| The sidecar is intentionally **optional**. | ||

|

|

||

| This allows us to ship it incrementally, validate it under real-world workloads, and avoid making it a hard dependency before it is fully battle-tested across all LiteLLM features. | ||

|

|

||

| Just as importantly, this ensures that self-hosting LiteLLM remains simple. The sidecar is bundled and started automatically, requires no additional infrastructure, and can be disabled entirely. From a user's perspective, LiteLLM continues to behave like a single service. | ||

|

|

||

| As of today, the sidecar is an optimization, not a requirement. | ||

|

|

||

| --- | ||

|

|

||

| ## Conclusion | ||

|

|

||

| Sub-millisecond proxy overhead is not achieved through a single optimization, but through architectural changes. | ||

|

|

||

| By keeping Python focused on orchestration and extensibility, and offloading performance-critical execution to a sidecar, we establish a foundation for making LiteLLM **permanently fast over time**, even on modest hardware such as a 1-CPU, 2-GB RAM instance, while keeping deployment and self-hosting simple. | ||

|

|

||

| This work extends beyond Q1, and we will continue sharing benchmarks and updates as the architecture evolves. | ||

The date is set to 2026-02-02, but today is 2026-02-03. Check if this is intentional or should be updated.
